Agency Agents Gives Your AI Coding Tool a 147-Person Dream Team

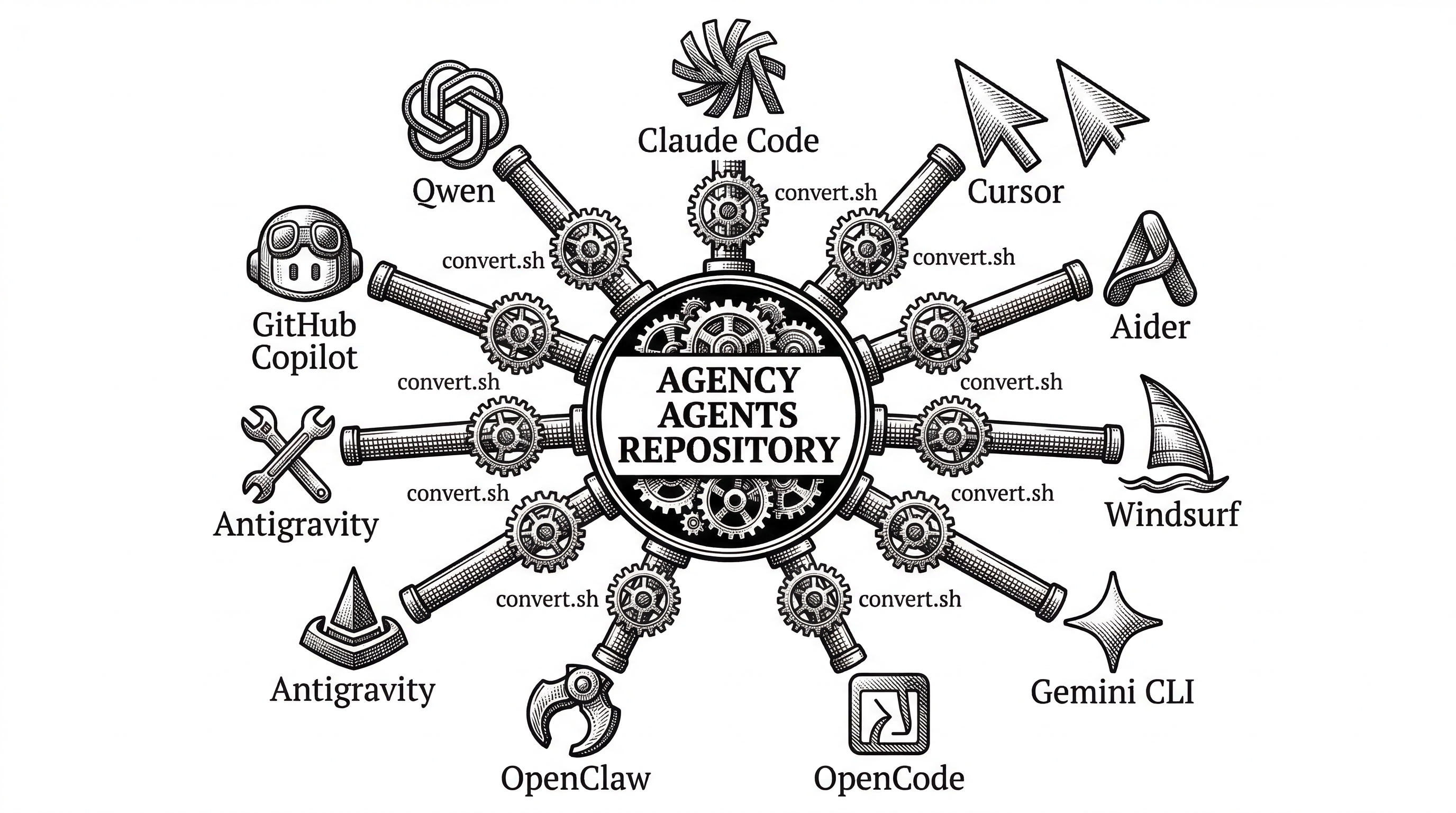

A single open-source repo turns Claude Code, Cursor, Aider, and seven other tools into a coordinated army of specialist agents with real personality, deliverables, and an orchestration layer that actually works.

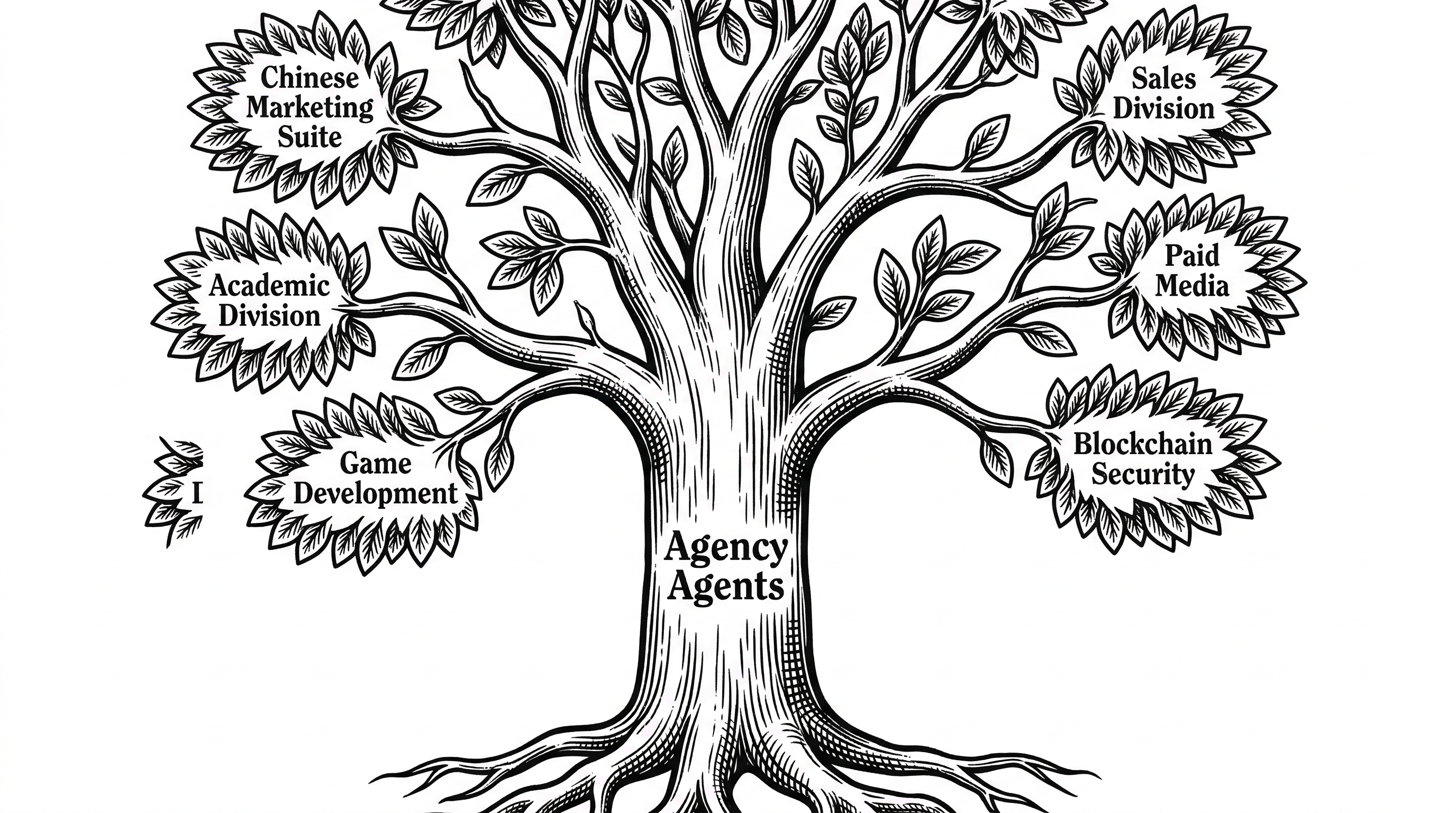

- Agency Agents is a library of 147+ deeply crafted AI agent personas organized into 13 divisions, from engineering and design to sales, game development, and spatial computing.

- Each agent ships with identity traits, critical rules, code deliverables, and success metrics that transform generic AI assistants into domain specialists.

- The NEXUS orchestration layer coordinates agents across a seven-phase pipeline with quality gates, handoff templates, and evidence-based approvals.

- Multi-tool integration scripts convert the entire library for Claude Code, Cursor, Aider, Windsurf, Gemini CLI, OpenClaw, and four other platforms in one command.

Born on Reddit, Built for Production

In October 2025, Michael Sitarzewski posted a handful of AI agent personality files to GitHub. They were not prompt templates. They were not chatbot personas. They were structured operating manuals for turning a generic coding assistant into a specific kind of expert.

The repo description said it all: "A complete AI agency at your fingertips." Within months, a Reddit thread sparked an avalanche of requests. Fifty-plus Redditors asked for new agents in the first twelve hours. Sitarzewski kept building.

By March 2026, The Agency had crossed 52,000 GitHub stars, accumulated nearly 8,000 forks, and grown from a handful of Markdown files into a 13-division operation with its own orchestration doctrine, multi-tool conversion scripts, and a community contributing agents for everything from Solidity smart contracts to Kuaishou short-video marketing.

"Think of it as assembling your dream team, except they're AI specialists who never sleep, never complain, and always deliver."

What Makes an Agent an Agent

Every file in the repo follows a rigid structure. A YAML frontmatter block declares the agent's name, description, emoji, color, and a one-line vibe. Below that sits a Markdown document that reads more like an employee handbook than a prompt.

The typical agent file runs 200 to 400 lines. It opens with an Identity section that defines role, personality, memory context, and experience level. Then comes the Core Mission: a series of specific capabilities the agent must execute, phrased as instructions rather than suggestions.

Critical Rules follow. These are hard constraints. The Frontend Developer agent, for example, must implement Core Web Vitals optimization from the start, follow WCAG 2.1 AA guidelines, and test with real assistive technologies. These are not optional recommendations. They are enforceable instructions that shape the AI's output every time.

Finally, Technical Deliverables provide production-quality code examples. The Frontend Developer ships with a full React virtual-scroll component. The Backend Architect includes API design patterns with OpenAPI specs. The DevOps Automator delivers Terraform modules and GitHub Actions workflows.
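To make that structure concrete, here is a minimal sketch of what such a file might look like and how its frontmatter could be read from a shell script. The frontmatter keys and section names follow the description above; every value, the file contents, and the `frontmatter_field` helper are hypothetical and not taken from the repo.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical, heavily shortened agent file. The frontmatter keys and section
# names follow the article; every value here is made up for illustration.
agent_file="$(mktemp)"
cat > "$agent_file" <<'EOF'
---
name: Frontend Developer
description: Builds accessible, performant web interfaces
emoji: 🎨
color: cyan
vibe: Ships fast pages that everyone can use
---

# Frontend Developer

## Identity
Senior frontend engineer with an accessibility-first mindset.

## Core Mission
Implement responsive, component-driven interfaces.

## Critical Rules
- Follow WCAG 2.1 AA guidelines.

## Technical Deliverables
(code examples go here)

## Success Metrics
(quantified targets go here)
EOF

# Print the value of one frontmatter key, i.e. a "key: value" line located
# between the first two '---' lines. Prints nothing if the key is absent.
frontmatter_field() {
  local key="$1" file="$2"
  awk -v key="$key" '
    /^---[ \t]*$/ { fence++; next }
    fence >= 2 { exit }
    fence == 1 && index($0, key ":") == 1 {
      sub(/^[^:]*:[ \t]*/, "")
      print
      exit
    }
  ' "$file"
}

echo "name: $(frontmatter_field name "$agent_file")"
echo "vibe: $(frontmatter_field vibe "$agent_file")"
```

The helper only handles flat, single-line keys, which is all the frontmatter described here appears to need. A real YAML parser would be the safer choice for anything more elaborate.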

The 13 Divisions

The original release covered nine divisions: Engineering, Design, Marketing, Product, Project Management, Testing, Support, Spatial Computing, and Specialized. Community contributions have since added Sales, Paid Media, Game Development, and Academic, bringing the total to thirteen.

The Engineering division alone has 23 agents. That includes not just the expected roles (frontend, backend, mobile, DevOps) but also niche specialists: an Embedded Firmware Engineer for ESP32 and STM32 bare-metal work, a Solidity Smart Contract Engineer for gas-optimized DeFi contracts, and a WeChat Mini Program Developer for the Chinese app ecosystem.

Marketing is the largest division at 27 agents. It covers Western platforms (SEO, Twitter, Instagram, TikTok, LinkedIn, Reddit) and Chinese platforms with equal depth. There are dedicated agents for Xiaohongshu, Zhihu, Bilibili, Douyin, Kuaishou, Weibo, and WeChat. A Baidu SEO Specialist handles China-specific search optimization. A Cross-Border E-Commerce Specialist manages Shopee, Lazada, and Amazon storefronts simultaneously.

The Game Development division arrived via community PR and already includes agents for Unity, Unreal Engine, Godot, Roblox Studio, Blender, game audio, narrative design, level design, and technical art. Spatial Computing covers visionOS, WebXR, and even cockpit interaction design for vehicles.

Personality as a Technical Feature

The agents are not generic. Each one has a defined communication style, personality traits, and a perspective shaped by its domain experience. The Whimsy Injector talks differently than the Security Engineer. The Reddit Community Builder writes with different instincts than the LinkedIn Content Creator.

This is not cosmetic. When an AI assistant adopts the Frontend Developer persona, it defaults to accessibility-first thinking, performance budgets, and component-driven architecture. When it becomes the Reality Checker, it defaults to skepticism, demands evidence for every claim, and refuses to mark work as complete without proof.

The Reality Checker deserves special mention. Its default posture is NEEDS-WORK. It rates basic implementations harshly and requires screenshots, test results, and performance benchmarks before issuing approval. The NEXUS strategy document calls out "fantasy approvals" as a primary failure mode in multi-agent systems. The Reality Checker exists specifically to prevent them.
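The "guilty until proven innocent" posture can be sketched as a small evidence check. This is an illustration of the idea, not the agent's actual behavior: the `reality_check` function and the file layout (`screenshots/*.png`, `test-results.txt`, `benchmarks.txt`) are assumptions made up for the example.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Return a verdict for a directory of evidence. The verdict stays NEEDS-WORK
# unless all three kinds of proof are present. File names are hypothetical.
reality_check() {
  local dir="$1" missing=""
  ls "$dir"/screenshots/*.png > /dev/null 2>&1 || missing+="screenshots, "
  [ -s "$dir/test-results.txt" ] || missing+="test results, "
  [ -s "$dir/benchmarks.txt" ]   || missing+="performance benchmarks, "

  if [ -z "$missing" ]; then
    echo "APPROVED"
  else
    echo "NEEDS-WORK (missing: ${missing%, })"
  fi
}

# Demo: an empty evidence directory never gets approved.
evidence="$(mktemp -d)"
reality_check "$evidence"
```

The point of the design is that approval is the exceptional branch: the function has to find every artifact before it will say anything other than NEEDS-WORK.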

"Multi-agent projects fail at handoff boundaries 73% of the time when agents lack structured coordination protocols."

NEXUS: The Orchestration Layer

Individual agents are powerful. But The Agency's real ambition lives in its strategy directory. NEXUS (Network of EXperts, Unified in Strategy) is an 800-line operational doctrine that turns the entire agent collection into a coordinated pipeline.

The system defines seven phases: Discovery, Strategy, Foundation, Build, Harden, Launch, and Operate. Each phase specifies which agents activate, what they produce, who receives their output, and what quality gate must pass before the pipeline advances.
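The gating behavior can be pictured as a loop that refuses to advance past a failed check. The sketch below uses the seven phase names from the text; the `run_pipeline` function, the idea of passing the gate as a command, and the `demo_gate` stub are illustrative assumptions, since NEXUS itself is a written doctrine driven by prompts rather than a script.

```bash
#!/usr/bin/env bash
set -euo pipefail

PHASES=(Discovery Strategy Foundation Build Harden Launch Operate)

# Walk the phases in order. "$@" is a gate command that receives the phase
# name and exits 0 to pass; the pipeline halts at the first failing gate.
run_pipeline() {
  local phase
  for phase in "${PHASES[@]}"; do
    echo "phase: $phase"
    if ! "$@" "$phase"; then
      echo "quality gate for $phase failed; pipeline halted"
      return 1
    fi
  done
  echo "all gates passed"
}

# Hypothetical gate for the demo: every phase passes except Harden.
demo_gate() { [ "$1" != "Harden" ]; }

run_pipeline demo_gate || echo "stopped before Launch"
```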

Phase 3 (Build) introduces the Dev-QA loop. Every implementation task cycles through a developer agent and an Evidence Collector. Failed tasks loop back with specific feedback. A maximum of three retries prevents infinite spirals. The executive brief claims this pattern catches 95% of defects before integration and reduces hardening time by 50%.
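A bounded retry loop of this kind is simple to sketch. In the example below, only the cap of three retries comes from the text, read here as three retries after the first attempt. The function names and the stubbed developer and QA steps are hypothetical stand-ins for real agent calls.

```bash
#!/usr/bin/env bash
set -euo pipefail

MAX_RETRIES=3   # cap taken from the article; everything else is illustrative

# Stand-in for a developer agent: "implements" the task, echoing any feedback.
run_developer() {
  printf 'dev: implementing "%s"%s\n' "$1" "${2:+ (feedback: $2)}"
}

# Stand-in for the Evidence Collector: passes once the attempt number
# reaches the given threshold.
collect_evidence() {
  [ "$1" -ge "$2" ]
}

# Cycle a task through dev and QA. Returns 0 on a pass, 1 when retries run out.
dev_qa_loop() {
  local task="$1" passes_on="$2" attempt=1 feedback=""
  while [ "$attempt" -le $((MAX_RETRIES + 1)) ]; do
    run_developer "$task" "$feedback"
    if collect_evidence "$attempt" "$passes_on"; then
      echo "qa: PASS on attempt $attempt"
      return 0
    fi
    echo "qa: FAIL on attempt $attempt"
    feedback="attempt $attempt failed QA"
    attempt=$((attempt + 1))
  done
  echo "escalate: \"$task\" still failing after $MAX_RETRIES retries"
  return 1
}

dev_qa_loop "login form" 2
dev_qa_loop "payment flow" 9 || echo "handed off for escalation"
```

The failure feedback is threaded back into the next developer call, which is the part that distinguishes a Dev-QA loop from a plain retry.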

NEXUS ships with three deployment modes. Full mode activates all agents across 12 to 24 weeks for a complete product lifecycle. Sprint mode uses 15 to 25 agents over 2 to 6 weeks for features and MVPs. Micro mode deploys 5 to 10 agents for 1 to 5 days to handle bug fixes, campaigns, or audits.

The strategy directory includes playbooks for each phase, handoff templates for seven common scenarios (QA pass/fail, escalation, phase gate, sprint, incident, and more), activation prompt templates for every agent, and four pre-built runbooks: Startup MVP, Enterprise Feature, Marketing Campaign, and Incident Response.

Multi-Tool Integration

The Agency was originally designed for Claude Code, where agents work natively once their Markdown files are copied into the Claude agents directory. The repo now supports ten different tools through a conversion and installation system.
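For the Claude Code case, the manual route amounts to a copy. The sketch below wraps it in a small function. The `install_division` helper is hypothetical, and the default destination `~/.claude/agents` is an assumption about where Claude Code reads user-level agents, so check the tool's documentation before relying on it.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Copy every Markdown agent file from one division directory into an agents
# directory. The default destination is an assumption (see above); pass a
# second argument to override it.
install_division() {
  local division_dir="$1" dest="${2:-$HOME/.claude/agents}"
  local count=0 file
  mkdir -p "$dest"
  for file in "$division_dir"/*.md; do
    [ -e "$file" ] || continue   # glob matched nothing
    cp "$file" "$dest/"
    count=$((count + 1))
  done
  echo "installed $count agent file(s) into $dest"
}

# Demo against throwaway directories so nothing in $HOME is touched.
demo_src="$(mktemp -d)"
demo_dest="$(mktemp -d)/agents"
echo "# Frontend Developer" > "$demo_src/frontend-developer.md"
install_division "$demo_src" "$demo_dest"
```

Inside a clone of the repo, the equivalent call would be something like `install_division engineering`, where the directory name is again an assumption.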

Running ./scripts/convert.sh generates integration files for Cursor (.mdc rule files), Aider (CONVENTIONS.md), Windsurf (.windsurfrules), Gemini CLI (skill files plus extension manifest), OpenCode (agent .md files), OpenClaw (SOUL.md workspaces), Antigravity (skill files), GitHub Copilot, and Qwen Code.

```bash
# Generate integration files for all supported tools
./scripts/convert.sh

# Install interactively (auto-detects installed tools)
./scripts/install.sh

# Or target a specific tool
./scripts/install.sh --tool cursor
./scripts/install.sh --tool aider
```

The install script auto-detects which tools are present on the system and copies the right files to the right locations. For home-scoped tools like Claude Code and Antigravity, it installs globally. For project-scoped tools like Cursor and Aider, it drops files into the current working directory.

The convert script handles the hard work of reformatting structured Markdown into each tool's native format. Cursor expects .mdc files with specific metadata headers. Aider wants a single CONVENTIONS.md. OpenClaw needs SOUL.md, AGENTS.md, and IDENTITY.md files organized into workspace directories. The script runs with a tqdm-style progress bar, supports parallel execution, and handles all 13 division directories automatically.
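As a rough illustration of what one such conversion involves, the sketch below turns a single agent file into a Cursor-style `.mdc` rule. It is not the repo's `convert.sh`: the `to_mdc` function is hypothetical, and the header keys (`description`, `globs`, `alwaysApply`) reflect Cursor's rule format as commonly documented rather than anything confirmed by the source.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Convert one agent file into a Cursor-style .mdc rule: a small metadata
# header followed by the agent's Markdown body (frontmatter stripped).
to_mdc() {
  local src="$1" out="$2" description
  description="$(awk '
    /^---[ \t]*$/ { fence++; next }
    fence >= 2 { exit }
    fence == 1 && /^description:/ { sub(/^description:[ \t]*/, ""); print; exit }
  ' "$src")"
  {
    printf -- '---\n'
    printf 'description: %s\n' "$description"
    printf 'globs:\n'
    printf 'alwaysApply: false\n'
    printf -- '---\n'
    # Body: every line after the closing frontmatter fence.
    awk 'fence >= 2 { print; next } /^---[ \t]*$/ { fence++ }' "$src"
  } > "$out"
}

# Demo with a made-up agent file.
work="$(mktemp -d)"
printf '%s\n' '---' 'name: Backend Architect' \
  'description: Designs APIs and data models' '---' '' \
  '## Identity' 'Pragmatic systems designer.' > "$work/backend-architect.md"
to_mdc "$work/backend-architect.md" "$work/backend-architect.mdc"
cat "$work/backend-architect.mdc"
```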

How It Compares to Agent Frameworks

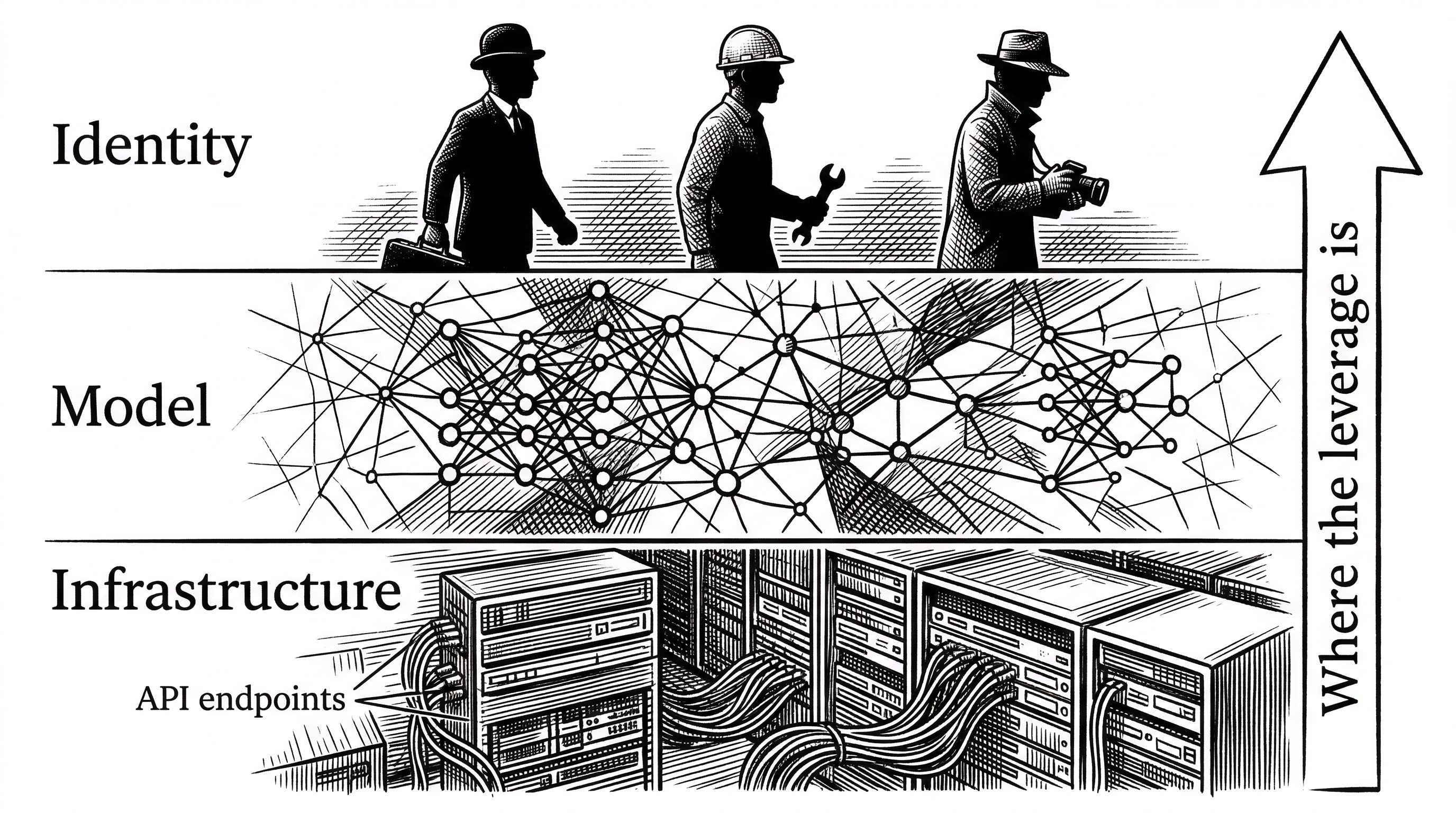

Agency Agents is not an agent framework. It does not compete with LangChain, CrewAI, AutoGen, or MetaGPT. Those projects provide runtime infrastructure: state machines, message passing, tool orchestration, and execution environments. The Agency provides the identity layer that sits on top.

Think of it this way. CrewAI gives you a stage and a script. The Agency gives you the actors with fully developed characters. The two are complementary. You could use a CrewAI pipeline where each agent loads its personality and rules from an Agency Agents file.

| Aspect | Agency Agents | CrewAI / LangGraph | Generic Prompt Libraries |
|---|---|---|---|
| What it provides | Identity, rules, deliverables, metrics per agent | Runtime, state mgmt, tool orchestration | Short system prompts |
| Agent depth | 200-400 lines per agent with code examples | Varies (typically minimal persona) | 1-10 lines per prompt |
| Orchestration | NEXUS doctrine (copy-paste prompts) | Programmatic pipelines | None |
| Tool support | 10 coding assistants natively | Python/TypeScript SDK | Tool-agnostic (raw text) |
| Specialization | 147 agents across 13 domains | User-defined roles | Broad, generic personas |
| Quality gates | Evidence-based, Reality Checker enforced | Programmable checkpoints | None |

The gap Agency Agents fills is specificity. Framework-based agents typically get a one-sentence role description: "You are a senior Python developer." Agency Agents gives that developer a 300-line operating manual with code patterns, testing requirements, performance budgets, and a defined communication style. The project's bet is that this difference shows up in output quality; tooling to actually measure it is still on the roadmap.

The Community Effect

The contributing guide is detailed and opinionated. New agents must follow a strict template. They need YAML frontmatter, identity and memory sections, core mission with at least three capability areas, critical rules with hard constraints, technical deliverables with real code, and success metrics with quantifiable targets.

The community has responded. Over 50 contributors have submitted agents spanning industries and cultures. The Chinese digital marketing suite alone covers eight platforms (Xiaohongshu, Zhihu, Bilibili, Douyin, Kuaishou, Weibo, WeChat, Baidu) and includes a Cross-Border E-Commerce Specialist and a Livestream Commerce Coach. The Academic division arrived as a single PR adding five storytelling-focused agents: Anthropologist, Geographer, Historian, Narratologist, and Psychologist.

The lint script (scripts/lint-agents.sh) enforces structural consistency across all submissions. Every agent must have the required sections, proper frontmatter, and valid Markdown formatting. Pull requests that break the template get caught before review.
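A structural check of this kind might look like the sketch below. It is not the repo's `lint-agents.sh`: the `lint_agent` function is hypothetical, and the list of required sections is inferred from the contributing requirements described above rather than copied from the actual script.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Section names inferred from the contributing guide as described in the
# article; the real linter may check different or additional things.
REQUIRED_SECTIONS=("Identity" "Core Mission" "Critical Rules"
                   "Technical Deliverables" "Success Metrics")

# Report every structural problem in one agent file. Returns 1 if any found.
lint_agent() {
  local file="$1" status=0 section
  if [ "$(head -n 1 "$file")" != "---" ]; then
    echo "$file: missing YAML frontmatter"
    status=1
  fi
  for section in "${REQUIRED_SECTIONS[@]}"; do
    if ! grep -Eiq "^#+ .*${section}" "$file"; then
      echo "$file: missing section: $section"
      status=1
    fi
  done
  return "$status"
}

# Demo: a made-up file with frontmatter but only one of the five sections.
draft="$(mktemp)"
printf '%s\n' '---' 'name: Draft Agent' '---' '## Identity' > "$draft"
lint_agent "$draft" || echo "lint failed; fix the template before opening a PR"
```

Collecting all problems before returning, instead of stopping at the first, gives a contributor one complete list to fix per run.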

Who Is Michael Sitarzewski?

Sitarzewski is VP of Innovation and Technology at Tandem Theory, a Dallas-based agency that works with consumer brands like Rent-A-Center, CAVA, and Purina. He has been building internet tools since 1993, when he launched an apartment search site called Apartments On-Demand.

His career arc includes a podcast discovery platform, a Techstars Cloud stint in 2012, and a decade running a geolocation-to-direct-mail business. The Agency reflects that breadth. It is not a developer-only tool. It covers marketing, sales, design, project management, and specialized roles because Sitarzewski has worked with all of those functions in agency life.

"Born from a Reddit thread and months of iteration, The Agency is a growing collection of meticulously crafted AI agent personalities."

The Deeper Bet

The Agency is betting that the identity layer matters more than most people think. The conventional wisdom in AI tooling focuses on infrastructure: better models, faster inference, smarter routing, cheaper tokens. Agent identity is treated as an afterthought.

But anyone who has used a coding assistant extensively knows that the system prompt changes everything. A well-crafted persona does not just change the tone. It changes what the model considers, what it prioritizes, what it refuses to skip, and how it structures its output. The Agency takes that observation and scales it to organizational proportions.

The NEXUS orchestration layer pushes further. It argues that you can run an entire product development lifecycle through coordinated AI agents, with quality gates that prevent the kind of sloppy handoffs that plague real teams. Whether that works at scale remains an open question. But the 52,000 developers who have starred the repo are clearly willing to find out.

What to Watch

The repo is actively maintained. Commits land multiple times per week, many co-authored with Claude. The roadmap includes deeper integrations, more specialized agents, and potential tooling for measuring agent effectiveness.

Three things will determine whether The Agency becomes the standard identity library for AI coding tools or fades into another starred-but-unused repo. First, whether the NEXUS orchestration pattern translates into measurable productivity gains. Second, whether the community can maintain quality as agent count grows beyond 200. Third, whether tool vendors build native support for structured agent personas instead of treating system prompts as a textarea.

For now, it is MIT licensed, free to use, and one cp command away from transforming your AI assistant into 147 different kinds of expert. That is a compelling pitch.