AstrBot: The 25k-Star Chatbot Router That Connects Every IM to Every LLM

A graduate student's side project grew into a production-grade agentic chatbot bus. AstrBot wires 16 messaging platforms to 28 model providers, wraps everything in a 9-stage pipeline, and ships 1,000+ community plugins. Here is how it works.

- AstrBot is an open-source Python framework that unifies 16 IM platforms and 28 LLM/TTS/STT providers behind a single event pipeline, letting developers deploy AI chatbots without rewriting per-platform glue code.

- Its 9-stage message pipeline (from wake check to response dispatch), together with a plugin system called "Stars," gives operators fine-grained control over rate limits, content safety, persona, and tool execution.

- First-class MCP (Model Context Protocol) support and a Docker-based Agent Sandbox let AstrBot agents execute code, browse the web, and call external tools in isolated containers.

- Born from a Chinese graduate student's QQ bot experiment in late 2022, AstrBot reached 25k+ stars and 1,700+ forks by building the connective tissue that neither LLM providers nor messaging platforms wanted to maintain.

From QQ Bot to Universal Agent Bus

In December 2022, a Beijing University of Posts and Telecommunications (BUPT) student going by Soulter published a small Python project called QQChannelChatGPT. The goal was straightforward: let ChatGPT respond to messages on QQ, one of China's largest messaging platforms, with over 500 million monthly active users.

The project hit a nerve. Demand poured in for Telegram support, then WeChat Work, then Feishu. Rather than write bespoke adapters, Soulter refactored the codebase into a platform-agnostic message bus. QQChannelChatGPT became AstrBot.

Three years later, the project supports 16 platforms officially (with community adapters for Matrix, KOOK, and VoceChat), 28 model service integrations spanning LLMs, TTS, STT, and embeddings, and a marketplace of over 1,000 community plugins.

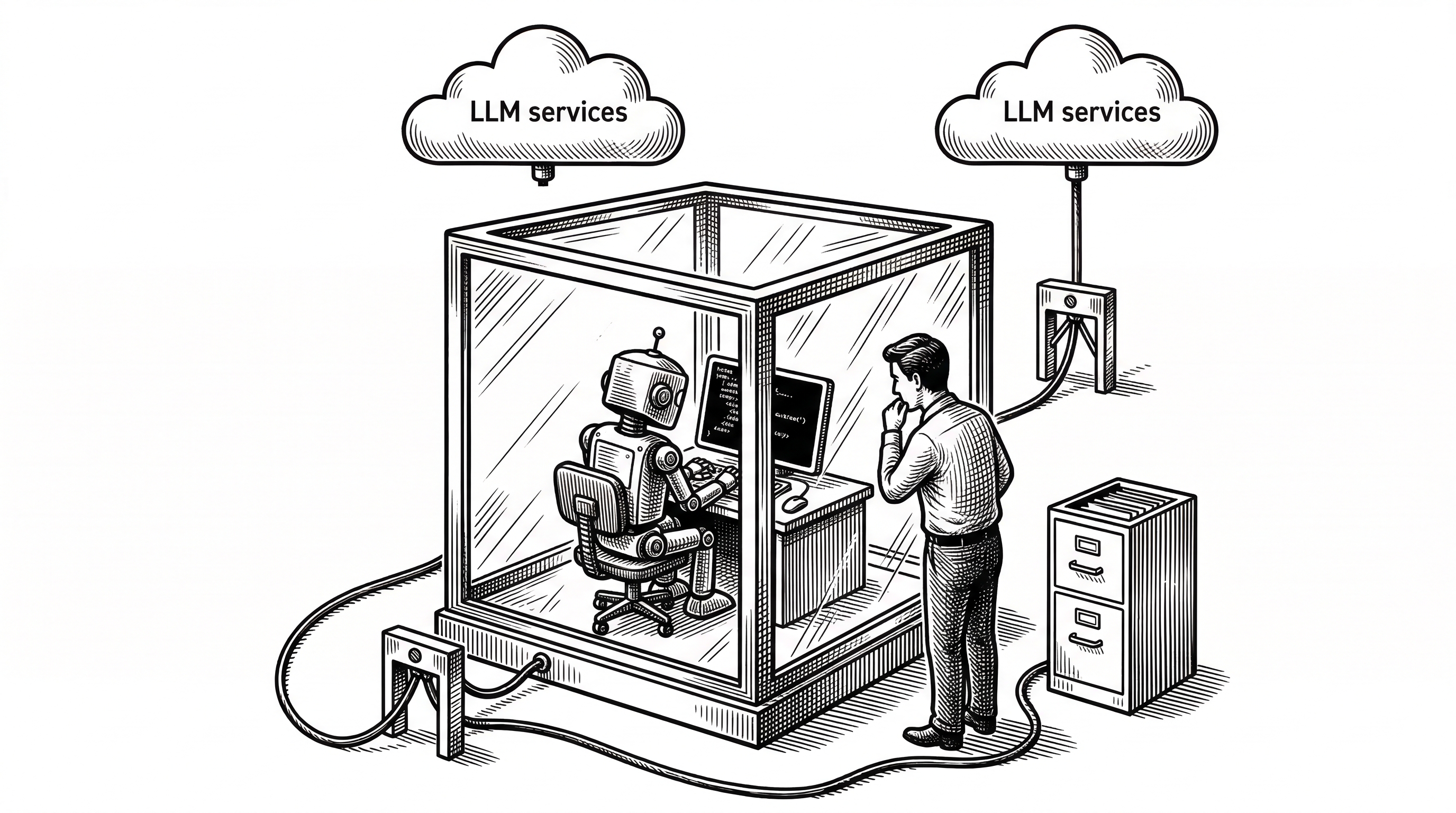

The Architecture: Platforms, Providers, Pipeline

AstrBot's architecture rests on three pillars: platform adapters for messaging, provider sources for AI services, and a pipeline that connects them. Each pillar is independently extensible. You can add a new chat platform without touching any model code, or swap LLM providers without rebuilding adapters.

Platform Adapters

Each messaging platform gets its own adapter in astrbot/core/platform/sources/. At last count, the directory holds 16 official adapters: QQ (via both the official API and the OneBot v11 protocol), Telegram, WeChat Work, Feishu (Lark), DingTalk, Slack, Discord, LINE, WeChat Official Accounts, Satori, Misskey, and more.

Adapters normalize inbound messages into a shared AstrMessageEvent object. That object carries the text, any media attachments, the sender's identity, and platform metadata. Once normalized, the message enters the pipeline. The adapter never knows which model will handle it.
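To make the normalization idea concrete, here is a minimal Python sketch. The `MessageEvent` dataclass and the two converter functions are hypothetical and do not reproduce AstrBot's real `AstrMessageEvent`; only the input shapes are real: a Telegram Bot API `Update` and a OneBot v11 message event in its array-of-segments form.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class MessageEvent:
    """Hypothetical platform-neutral event (not AstrBot's real class)."""
    platform: str
    sender_id: str
    session_id: str
    text: str
    media: list[str] = field(default_factory=list)
    is_group: bool = False
    raw: dict[str, Any] = field(default_factory=dict)  # original payload kept as metadata


def from_telegram(update: dict[str, Any]) -> MessageEvent:
    """Normalize a Telegram Bot API Update that carries a `message`."""
    msg = update["message"]
    chat = msg["chat"]
    photos = msg.get("photo", [])
    return MessageEvent(
        platform="telegram",
        sender_id=str(msg["from"]["id"]),
        session_id=str(chat["id"]),
        text=msg.get("text") or msg.get("caption", ""),
        # Telegram sends several sizes of one photo; keep the largest (last).
        media=[photos[-1]["file_id"]] if photos else [],
        is_group=chat["type"] in ("group", "supergroup"),
        raw=update,
    )


def from_onebot(event: dict[str, Any]) -> MessageEvent:
    """Normalize a OneBot v11 message event (array-of-segments format)."""
    segments = event["message"]
    is_group = event["message_type"] == "group"
    return MessageEvent(
        platform="onebot",
        sender_id=str(event["user_id"]),
        session_id=str(event["group_id"] if is_group else event["user_id"]),
        # Concatenate text segments; collect image segments as media.
        text="".join(s["data"]["text"] for s in segments if s["type"] == "text"),
        media=[s["data"]["file"] for s in segments if s["type"] == "image"],
        is_group=is_group,
        raw=event,
    )
```

Once both payloads share one shape, every downstream stage can ignore where a message came from, which is the property the adapter layer exists to provide.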

Provider Sources

The provider layer lives in astrbot/core/provider/sources/ with 28 source files covering OpenAI, Anthropic, Google Gemini, DeepSeek, Zhipu, Groq, xAI, OpenRouter, and many more. Beyond raw LLM chat completion, providers also handle text-to-speech (Edge TTS, Azure TTS, FishAudio, GPT-SoVITS, Minimax), speech-to-text (Whisper, SenseVoice), embeddings (OpenAI, Gemini), and reranking (Bailian, vLLM, Xinference).

This is not just about swapping GPT-4 for Claude. AstrBot treats the entire multimodal stack as pluggable. A single deployment can route text to DeepSeek, voice synthesis to Edge TTS, document parsing to Markitdown, and embeddings to Gemini.
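One way to picture this kind of routing is a registry keyed by capability rather than by vendor. `ProviderSpec` and `ProviderRegistry` below are hypothetical names, and the provider labels are plain strings standing in for real API clients; AstrBot's actual provider manager is not reproduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProviderSpec:
    name: str        # label only, e.g. "deepseek" or "edge_tts"
    capability: str  # e.g. "chat", "tts", "stt", "embedding", "document_parse"


class ProviderRegistry:
    """Hypothetical registry holding one active provider per capability."""

    def __init__(self) -> None:
        self._active: dict[str, ProviderSpec] = {}

    def register(self, spec: ProviderSpec) -> None:
        # Registering a provider for an existing capability replaces the old
        # one; nothing on the platform-adapter side has to change.
        self._active[spec.capability] = spec

    def route(self, capability: str) -> ProviderSpec:
        try:
            return self._active[capability]
        except KeyError:
            raise LookupError(f"no provider configured for {capability!r}") from None


registry = ProviderRegistry()
for spec in (
    ProviderSpec("deepseek", "chat"),
    ProviderSpec("edge_tts", "tts"),
    ProviderSpec("markitdown", "document_parse"),
    ProviderSpec("gemini", "embedding"),
):
    registry.register(spec)
```

Keying on capability is what lets one deployment mix vendors freely: swapping the chat model is a single `register` call that leaves the TTS and embedding routes untouched.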

"AstrBot is an open-source all-in-one Agent chatbot platform that integrates with mainstream instant messaging apps. It provides reliable and scalable conversational AI infrastructure for individuals, developers, and teams."

The 9-Stage Pipeline

Every inbound message passes through a fixed sequence of nine processing stages. The pipeline is defined in astrbot/core/pipeline/stage_order.py and each stage has its own subdirectory. This is not a loose chain of middleware. It is an explicit, ordered, and inspectable contract.

WakingCheckStage decides whether the bot should respond at all. In group chats, AstrBot might require an @-mention or a wake word before activating. This prevents the bot from responding to every single message in a busy group.
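A minimal sketch of such a wake rule, assuming private chats always wake the bot and group messages need either an @-mention (flagged by the adapter) or a leading wake word. The `should_wake` function and the default `/` prefix are illustrative assumptions, not AstrBot's configuration keys.

```python
from dataclasses import dataclass


@dataclass
class IncomingMessage:
    text: str
    is_group: bool
    mentions_bot: bool = False  # assumed to be set by the adapter on @-mention


def should_wake(msg: IncomingMessage, wake_prefixes: tuple[str, ...] = ("/",)) -> bool:
    """Hypothetical wake rule for the first pipeline stage."""
    if not msg.is_group:
        return True          # direct messages are always addressed to the bot
    if msg.mentions_bot:
        return True          # explicit @-mention in a group
    # Otherwise the message must start with one of the wake words/prefixes.
    return msg.text.lstrip().startswith(wake_prefixes)
```

In a busy group, `should_wake(IncomingMessage("hello everyone", is_group=True))` returns `False`, so the message never reaches later stages or a model.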

WhitelistCheckStage enforces access control. Operators can restrict the bot to specific group chats, specific users, or both. This keeps the bot from leaking into unauthorized channels.

SessionStatusCheckStage verifies whether the session is globally enabled. An operator can pause the bot without tearing down the deployment.

RateLimitStage throttles requests per session. This is essential for public-facing bots that could otherwise be flooded with requests.
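Per-session throttling is commonly implemented with a sliding window. The hypothetical `SessionRateLimiter` below is one such sketch; AstrBot's own RateLimitStage may use a different algorithm or session key. The clock is injectable so the behavior can be checked without sleeping.

```python
import time
from collections import defaultdict, deque
from typing import Callable


class SessionRateLimiter:
    """Sliding window: at most `max_requests` per `window` seconds per session."""

    def __init__(self, max_requests: int, window: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_requests = max_requests
        self.window = window
        self.clock = clock
        self._hits: dict[str, deque] = defaultdict(deque)

    def allow(self, session_id: str) -> bool:
        now = self.clock()
        hits = self._hits[session_id]
        # Forget requests that have slid out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False     # rejected requests are not recorded
        hits.append(now)
        return True
```

Because each session has its own deque, one noisy group exhausting its quota does not affect any other session.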

ContentSafetyCheckStage screens inbound content before it ever reaches a model. This is where profanity filters, prompt injection detectors, and custom safety rules live.

PreProcessStage handles normalization and enrichment. Context compression, message history retrieval, and persona injection happen here.

ProcessStage is the heart. It routes the message either to a Star (plugin) that claims the command, or to the configured LLM for a conversational response. This is where agent tool calls, MCP interactions, and knowledge base lookups occur.

ResultDecorateStage post-processes the response. It can add reply prefixes, convert text to images, render markdown, or synthesize speech.

RespondStage dispatches the final message back through the platform adapter to the user.
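The ordered contract can be sketched as a list of named stages run in sequence, where any stage may halt processing. This is a simplified, synchronous illustration: only the stage names come from the article; `Context`, `build_stages`, `run_pipeline`, and the three toy stage functions are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Context:
    text: str
    session_id: str
    reply: Optional[str] = None
    stopped_at: Optional[str] = None   # stage that halted processing, if any
    trace: list[str] = field(default_factory=list)


# A stage inspects or mutates the context and returns False to stop the chain.
Stage = Callable[[Context], bool]

STAGE_ORDER = [
    "WakingCheckStage", "WhitelistCheckStage", "SessionStatusCheckStage",
    "RateLimitStage", "ContentSafetyCheckStage", "PreProcessStage",
    "ProcessStage", "ResultDecorateStage", "RespondStage",
]


def build_stages(impls: dict[str, Stage]) -> list[tuple[str, Stage]]:
    # Stages without an implementation pass the context through unchanged.
    return [(name, impls.get(name, lambda ctx: True)) for name in STAGE_ORDER]


def run_pipeline(ctx: Context, stages: list[tuple[str, Stage]]) -> Context:
    for name, stage in stages:
        ctx.trace.append(name)
        if not stage(ctx):
            ctx.stopped_at = name
            break
    return ctx


def content_safety(ctx: Context) -> bool:
    return "forbidden" not in ctx.text.lower()


def process(ctx: Context) -> bool:
    ctx.reply = f"echo: {ctx.text}"    # stand-in for a Star or LLM call
    return True


def decorate(ctx: Context) -> bool:
    ctx.reply = f"[bot] {ctx.reply}"
    return True


stages = build_stages({
    "ContentSafetyCheckStage": content_safety,
    "ProcessStage": process,
    "ResultDecorateStage": decorate,
})
```

The `trace` field shows why an explicit order is inspectable: a blocked message records exactly which stage stopped it, and no later stage (including the model call) ever runs.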

Stars: The Plugin System

AstrBot calls its plugins "Stars." A Star is a Python package that registers handlers for specific commands, events, or patterns. The marketplace hosts over 1,000 of them, installable with a single click from the WebUI.

Stars operate at the ProcessStage. When a message arrives, the pipeline checks whether any registered Star claims the input. If a Star matches (via command prefix, regex pattern, or event type), it handles the message directly. If no Star matches, the message falls through to the LLM provider.
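The match-or-fall-through logic can be sketched as follows. `StarHandler` and `dispatch` are hypothetical and much simpler than a real Star (which is a package built from a template such as Soulter/helloworld); the `/` command prefix is also an assumption, and event-type matching is left out.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

Handler = Callable[[str], str]


@dataclass
class StarHandler:
    name: str
    handler: Handler
    command: Optional[str] = None          # "weather" matches "/weather ..."
    pattern: Optional[re.Pattern] = None   # regex searched anywhere in the text

    def matches(self, text: str) -> bool:
        if self.command is not None:
            head = text.split(maxsplit=1)[0] if text.strip() else ""
            return head == "/" + self.command
        if self.pattern is not None:
            return self.pattern.search(text) is not None
        return False


def dispatch(text: str, stars: list[StarHandler], llm: Handler) -> str:
    """First matching Star handles the message; otherwise the LLM answers."""
    for star in stars:
        if star.matches(text):
            return star.handler(text)
    return llm(text)


stars = [
    StarHandler("weather", lambda t: "sunny", command="weather"),
    StarHandler("dice", lambda t: "4", pattern=re.compile(r"\broll\b")),
]
llm = lambda t: f"LLM: {t}"
```

Here `dispatch("/weather Beijing", stars, llm)` is handled by the weather Star, while `"/weatherman"` matches no command and falls through to the LLM.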

This design means AstrBot is not just a chatbot. It is an extensible automation platform. Stars can integrate with external APIs, trigger workflows, manage databases, send scheduled messages, and more. The plugin template repository (Soulter/helloworld) gives developers a minimal starting point.

Agent Capabilities: MCP, Sandbox, and Tools

AstrBot is not limited to simple request-response conversations. Its agent layer, built in astrbot/core/agent/, supports full agentic workflows: multi-step reasoning, tool calling, handoffs between sub-agents, and the Model Context Protocol (MCP) for dynamic tool discovery.
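At its core, multi-step tool calling is a loop: ask the model, run any tool it requests, append the result to the history, and repeat until it answers. The sketch below uses a hypothetical message shape that is simpler than any real provider API, plus a scripted stand-in model; it does not show AstrBot's agent classes or sub-agent handoffs.

```python
import json
from typing import Any, Callable

Message = dict[str, Any]


def run_agent(model: Callable[[list[Message]], Message],
              tools: dict[str, Callable[..., Any]],
              user_text: str, max_steps: int = 5) -> str:
    """`model` returns {"tool": name, "args": {...}} or {"content": text}."""
    history: list[Message] = [{"role": "user", "content": user_text}]
    for _ in range(max_steps):
        reply = model(history)
        if "tool" not in reply:
            return reply["content"]                      # final answer
        result = tools[reply["tool"]](**reply["args"])   # execute the tool call
        history.append({"role": "assistant", "tool": reply["tool"], "args": reply["args"]})
        history.append({"role": "tool", "name": reply["tool"], "content": json.dumps(result)})
    raise RuntimeError("agent did not finish within max_steps")


def scripted_model(history: list[Message]) -> Message:
    """Stand-in for an LLM: computes (2 + 3) * 4 in two tool calls."""
    results = [json.loads(m["content"]) for m in history if m["role"] == "tool"]
    if not results:
        return {"tool": "add", "args": {"a": 2, "b": 3}}
    if len(results) == 1:
        return {"tool": "mul", "args": {"a": results[0], "b": 4}}
    return {"content": f"result is {results[-1]}"}


tools = {"add": lambda a, b: a + b, "mul": lambda a, b: a * b}
```

The `max_steps` cap matters in practice: a model that keeps requesting tools would otherwise loop forever.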

MCP Integration

The mcp_client.py module connects AstrBot to MCP-compatible tool servers. This means the bot can discover and invoke external tools at runtime without hardcoded integrations. An operator can point AstrBot at any MCP server and the agent gains access to whatever tools that server exposes.
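Runtime discovery works because an MCP server describes each tool with a `name`, a `description`, and a JSON Schema `inputSchema` in its `tools/list` response (the official `mcp` Python SDK exposes this as `ClientSession.list_tools()`). The sketch below converts such a response into OpenAI-style function-calling definitions. The function names and the `server__tool` naming scheme are assumptions; AstrBot's mcp_client.py may do this differently.

```python
from typing import Any


def mcp_tools_to_openai(list_result: dict[str, Any], server: str) -> list[dict[str, Any]]:
    """Convert an MCP tools/list result into OpenAI-style function tools.

    Names are prefixed with the server name so tools from different servers
    cannot collide (a convention assumed here, not part of MCP).
    """
    converted = []
    for tool in list_result.get("tools", []):
        converted.append({
            "type": "function",
            "function": {
                "name": f"{server}__{tool['name']}",
                "description": tool.get("description", ""),
                # MCP's inputSchema is already JSON Schema, so it maps directly.
                "parameters": tool.get("inputSchema", {"type": "object", "properties": {}}),
            },
        })
    return converted


def split_tool_name(qualified: str) -> tuple[str, str]:
    """Recover (server, tool) to route the model's call back to its server."""
    server, _, tool = qualified.partition("__")
    return server, tool
```

When the model later calls `weather__get_weather`, `split_tool_name` tells the client which MCP server should receive the `tools/call` request.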

Agent Sandbox

When agents need to execute code, AstrBot provides a Docker-based sandbox system (codenamed "Shipyard"). The sandbox isolates AI-generated code in disposable containers, preventing rogue scripts from touching the host system. Session pooling via a max_sessions parameter lets operators control concurrency.
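The two ideas in that paragraph, disposable isolated containers and a cap on concurrent sessions, can be sketched with the plain Docker CLI and an `asyncio.Semaphore`. This is not Shipyard's interface: `SandboxPool`, the image name, and the resource limits are assumptions; only the `docker run` flags are real.

```python
import asyncio
from typing import Awaitable, Callable


def docker_run_argv(image: str, code: str) -> list[str]:
    """argv for a throwaway, network-less container (real docker CLI flags)."""
    return [
        "docker", "run", "--rm",               # delete the container afterwards
        "--network", "none",                   # no network access
        "--memory", "256m", "--cpus", "1", "--pids-limit", "128",
        "--read-only",                         # immutable root filesystem
        image, "python", "-c", code,
    ]


async def subprocess_launcher(argv: list[str]) -> str:
    """Runs the docker CLI and returns combined stdout/stderr."""
    proc = await asyncio.create_subprocess_exec(
        *argv, stdout=asyncio.subprocess.PIPE, stderr=asyncio.subprocess.STDOUT)
    out, _ = await proc.communicate()
    return out.decode(errors="replace")


class SandboxPool:
    """Caps concurrent sandboxes at `max_sessions` (hypothetical sketch)."""

    def __init__(self, max_sessions: int,
                 launcher: Callable[[list[str]], Awaitable[str]] = subprocess_launcher) -> None:
        self._slots = asyncio.Semaphore(max_sessions)
        self._launcher = launcher

    async def execute(self, code: str, image: str = "python:3.12-slim") -> str:
        async with self._slots:                # waits while all slots are busy
            return await self._launcher(docker_run_argv(image, code))
```

The launcher is injectable so the pool's concurrency limit can be exercised without Docker installed; in production the default `subprocess_launcher` would run each snippet in a fresh container.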

The newer "Shipyard Neo" implementation adds profile-based environments (like python-default with common libraries pre-installed) and supports Skills self-iteration, where the agent can refine its own tool usage across multiple turns.

Knowledge Base

The astrbot/core/knowledge_base/ module implements a full RAG (Retrieval-Augmented Generation) pipeline. It handles document parsing (PDF, DOCX, XLSX via Markitdown), text chunking, embedding generation, FAISS-based vector retrieval, and BM25 keyword search with Jieba tokenization for Chinese text. Reranking support via Bailian, vLLM, or Xinference refines results before they reach the LLM.
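A hybrid retriever needs some rule for merging keyword and vector rankings. The article does not say which rule AstrBot uses, so the sketch below assumes Reciprocal Rank Fusion (RRF), swaps FAISS for brute-force cosine similarity, and uses whitespace-style pre-tokenized text (for Chinese, a tokenizer such as `jieba.lcut` would produce the token lists). Reranking is omitted. All function names are hypothetical.

```python
import math
from collections import Counter


def bm25_scores(query: list[str], docs: list[list[str]],
                k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Okapi BM25 with the non-negative (Lucene-style) idf."""
    n_docs = len(docs)
    avgdl = sum(len(d) for d in docs) / n_docs
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for d in docs:
        tf = Counter(d)
        s = 0.0
        for term in query:
            if term not in tf:
                continue
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            s += idf * tf[term] * (k1 + 1) / (tf[term] + k1 * (1 - b + b * len(d) / avgdl))
        scores.append(s)
    return scores


def cosine(u: list[float], v: list[float]) -> float:
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def rrf_fuse(rankings: list[list[int]], k: int = 60) -> list[int]:
    """Reciprocal Rank Fusion: each doc scores sum(1 / (k + rank))."""
    fused: dict[int, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(fused, key=fused.get, reverse=True)


def hybrid_search(query_tokens: list[str], query_vec: list[float],
                  doc_tokens: list[list[str]], doc_vecs: list[list[float]],
                  top_k: int = 3) -> list[int]:
    bm25 = bm25_scores(query_tokens, doc_tokens)
    dense = [cosine(query_vec, v) for v in doc_vecs]
    by_bm25 = sorted(range(len(doc_tokens)), key=lambda i: bm25[i], reverse=True)
    by_dense = sorted(range(len(doc_vecs)), key=lambda i: dense[i], reverse=True)
    return rrf_fuse([by_bm25, by_dense])[:top_k]
```

RRF only looks at ranks, which sidesteps the problem that BM25 scores and cosine similarities live on incomparable scales.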

Deployment: Five Paths to Running AstrBot

AstrBot offers an unusually wide range of deployment options. The fastest path uses uv, the Rust-based Python package manager:

```shell
uv tool install astrbot
astrbot init
astrbot run
```

Three commands, and you have a running bot with a WebUI dashboard. Docker and Docker Compose are the recommended production paths. Kubernetes manifests live in the k8s/ directory for larger deployments.

For users who want zero terminal interaction, there is a desktop Electron app (AstrBot-desktop), a community launcher (AstrBot Launcher), and one-click cloud deployment on RainYun. Chinese server panel users get dedicated recipes for BT Panel, 1Panel, and CasaOS. There is even an AUR package for Arch Linux.

This breadth of deployment options reflects AstrBot's user base, which ranges from hobbyists running a personal QQ bot on a Raspberry Pi to enterprises deploying multi-platform customer service agents on Kubernetes.

The WebUI and ChatUI

AstrBot ships a Vue-based dashboard (the dashboard/ directory contains 1.5MB of Vue and TypeScript) that lets operators configure platform adapters, manage LLM providers, install plugins, monitor conversations, and adjust personas without touching config files.

A separate ChatUI provides a web-based conversation interface with built-in agent sandbox integration and web search. This means AstrBot can serve as its own frontend, not just a backend for messaging platforms.

How AstrBot Compares

The chatbot framework landscape is crowded. Here is how AstrBot fits relative to the most common alternatives.

| Aspect | AstrBot | Dify | Botpress | Rasa |
|---|---|---|---|---|
| Primary Focus | Multi-platform IM agent bus | Visual AI workflow builder | Visual chatbot builder | ML-based conversational AI |
| IM Integrations | 16 official + community | Limited (via API) | Several channels | Custom only |
| LLM Providers | 28 sources (LLM + TTS + STT) | Many via config | OpenAI-centric | Self-hosted NLU |
| Plugin Ecosystem | 1,000+ marketplace | Tool/workflow templates | Integration hub | Custom actions |
| Agent Sandbox | Docker-based (Shipyard) | Sandboxed code execution | None | None |
| MCP Support | Native client | Emerging | No | No |
| Knowledge Base | Built-in RAG + BM25 | Built-in RAG | Built-in RAG | External only |
| Primary Language | Python | Python + TypeScript | TypeScript | Python |
| License | AGPL-3.0 | Apache-2.0 with added conditions | MIT | Apache-2.0 |
| Target User | Developers, teams, hobbyists | No-code/low-code builders | Business users | ML engineers |

AstrBot's differentiator is not AI sophistication. Dify has a better workflow builder. Rasa has deeper NLU. What AstrBot does better than anyone is the connective tissue: wiring real messaging platforms to real AI services with minimal glue code.

The Chinese IM Advantage

A major reason AstrBot thrives is its deep integration with Chinese messaging platforms. QQ, WeChat Work, Feishu, DingTalk, and WeChat Official Accounts are not afterthoughts. They are first-class citizens with officially maintained adapters.

Most Western chatbot frameworks barely acknowledge these platforms exist. If you want a ChatGPT-powered bot on QQ or a customer service agent on DingTalk, your options are limited. AstrBot fills that gap cleanly.

The project's README ships in six languages (English, Simplified Chinese, Traditional Chinese, Japanese, French, Russian), and the i18n infrastructure runs deep. But the community gravity is unmistakably Chinese. Most of the 1,000+ plugins, the documentation site at astrbot.app, and the contributor base originate from Chinese developers.

"Whether you're building a personal AI companion, intelligent customer service, automation assistant, or enterprise knowledge base, AstrBot enables you to quickly build production-ready AI applications within your IM platform workflows."

Persona System and Emotional Companionship

AstrBot's persona system goes beyond simple system prompts. The persona_mgr.py module manages character definitions, personality traits, and behavioral rules that persist across conversations. Operators can configure multiple personas and switch between them per-platform or per-group.
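Per-platform and per-group switching implies a lookup order. The sketch below assumes the most specific setting wins (group, then platform, then a global default); `Persona` and `PersonaResolver` are hypothetical names, not the API of persona_mgr.py.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Persona:
    name: str
    system_prompt: str


class PersonaResolver:
    """Hypothetical lookup: group override > platform default > global default."""

    def __init__(self, default: Persona) -> None:
        self.default = default
        self.by_platform: dict[str, Persona] = {}
        # Keyed by (platform, group_id) because group IDs can repeat across platforms.
        self.by_group: dict[tuple[str, str], Persona] = {}

    def resolve(self, platform: str, group_id: Optional[str] = None) -> Persona:
        if group_id is not None and (platform, group_id) in self.by_group:
            return self.by_group[(platform, group_id)]
        return self.by_platform.get(platform, self.default)


resolver = PersonaResolver(Persona("assistant", "You are a helpful assistant."))
resolver.by_platform["telegram"] = Persona("formal", "Answer concisely and formally.")
resolver.by_group[("qq", "123")] = Persona("companion", "You are a warm, playful companion.")
```

With this setup, QQ group `123` gets the companion persona, other QQ groups fall back to the global assistant, and every Telegram chat uses the formal persona.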

This enables a use case that dominates AstrBot's community: role-playing and emotional companionship bots. The README highlights it as the first feature column. In Chinese internet culture, AI companionship bots are enormously popular, and AstrBot provides the infrastructure to deploy them across QQ groups and private chats at scale.

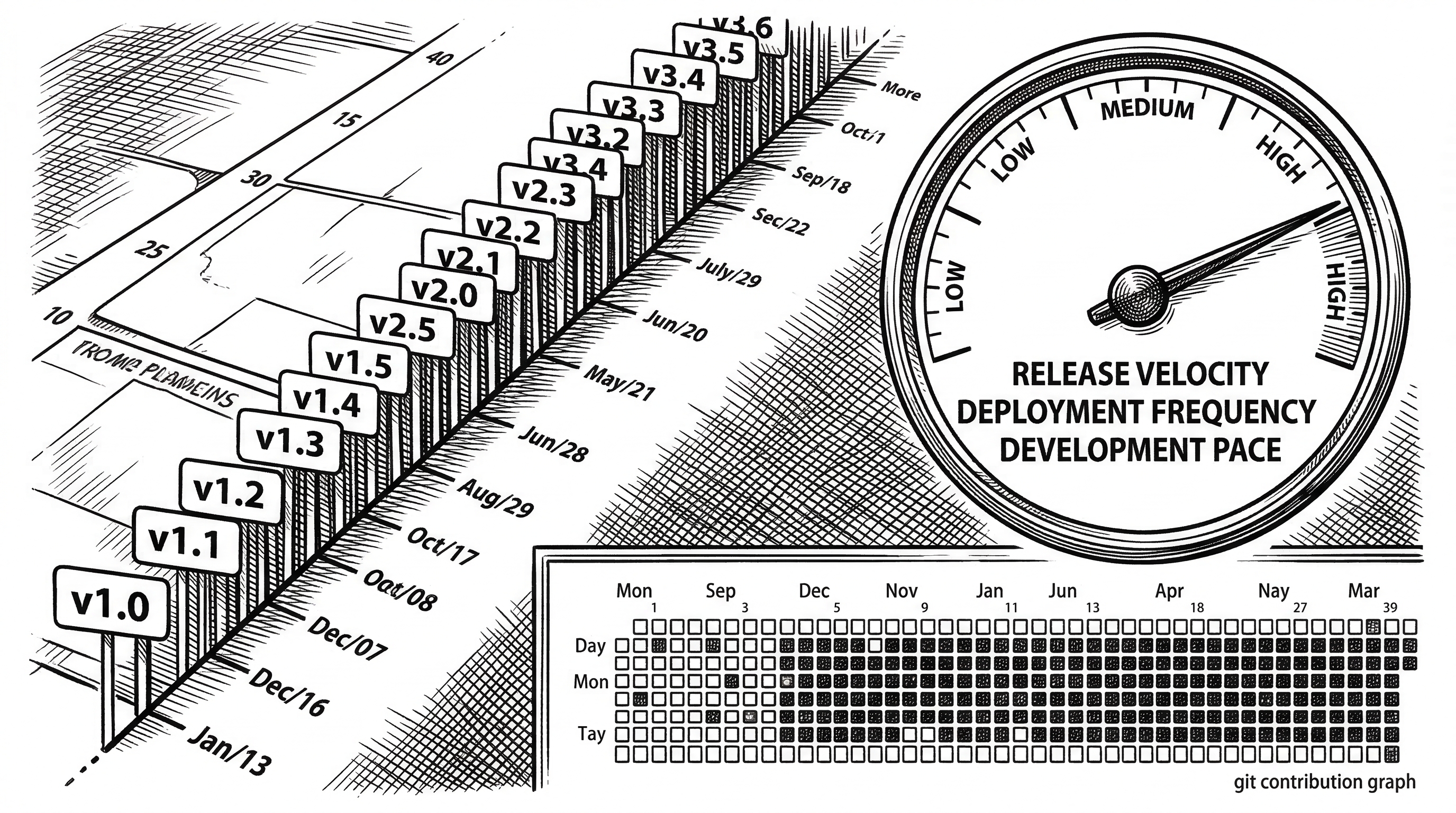

Under the Hood: Code Quality and Velocity

The codebase is overwhelmingly Python (4.1 million bytes), with the Vue dashboard adding another 1.5 million. The project uses pyproject.toml for packaging and requires Python 3.12+. Dependencies include heavy hitters like openai, anthropic, google-genai, faiss-cpu, mcp, sqlalchemy, quart, and platform-specific SDKs for QQ, Telegram, Discord, DingTalk, Feishu, Slack, and WeChat.

The release cadence is aggressive. Version 4.20.1 shipped on March 16, 2026, with 4.20.0 four days before that and 4.19.5 two days before that. The primary contributor (Soulter) has over 3,000 commits. Raven95676 follows with 210. This is a project with a strong BDFL (Benevolent Dictator For Life) and a growing contributor base.

The AGPL-3.0 license means that anyone who runs a modified version of AstrBot as a network service must make the modified source code available to that service's users. This is a deliberate choice that keeps the ecosystem open while still allowing commercial use within the license terms.

What to Watch

AstrBot's roadmap (public at astrbot.featurebase.app/roadmap) hints at WhatsApp support, which would dramatically expand its reach outside Asia. The MCP integration is still maturing, and deeper agent orchestration via the subagent_orchestrator.py module suggests multi-agent workflows are coming.

The main risk is concentration. One developer with 3,000+ commits out of roughly 3,400 total creates a bus factor problem. The AstrBotDevs organization exists, and contributors are growing, but the project's velocity depends heavily on Soulter's continued involvement.

The competitive pressure is real too. Dify raised significant funding and is building out its own platform integrations. Coze (from ByteDance) offers a similar multi-platform bot builder with more resources behind it. AstrBot's advantage is its open-source flexibility and its existing penetration into the QQ and WeChat ecosystem.