Deep Agents: LangChain's Batteries-Included Agent Harness

How LangChain reverse-engineered the patterns behind Claude Code, Manus, and Deep Research, then shipped an open-source, vendor-agnostic framework that gives any LLM the same superpowers.

- Deep Agents packages the four patterns that make autonomous agents effective (planning, subagents, filesystem access, smart prompting) into a single MIT-licensed harness built on LangGraph.

- The framework is provider-agnostic by design, working with Claude, GPT, Gemini, and 100+ other models out of the box.

- Pluggable backends let you swap between in-memory state, local disk, LangGraph Store, and sandboxed environments without changing agent code.

- Auto-summarization and context management allow agents to run for hours across dozens of steps without overflowing their context window.

The Problem with Shallow Agents

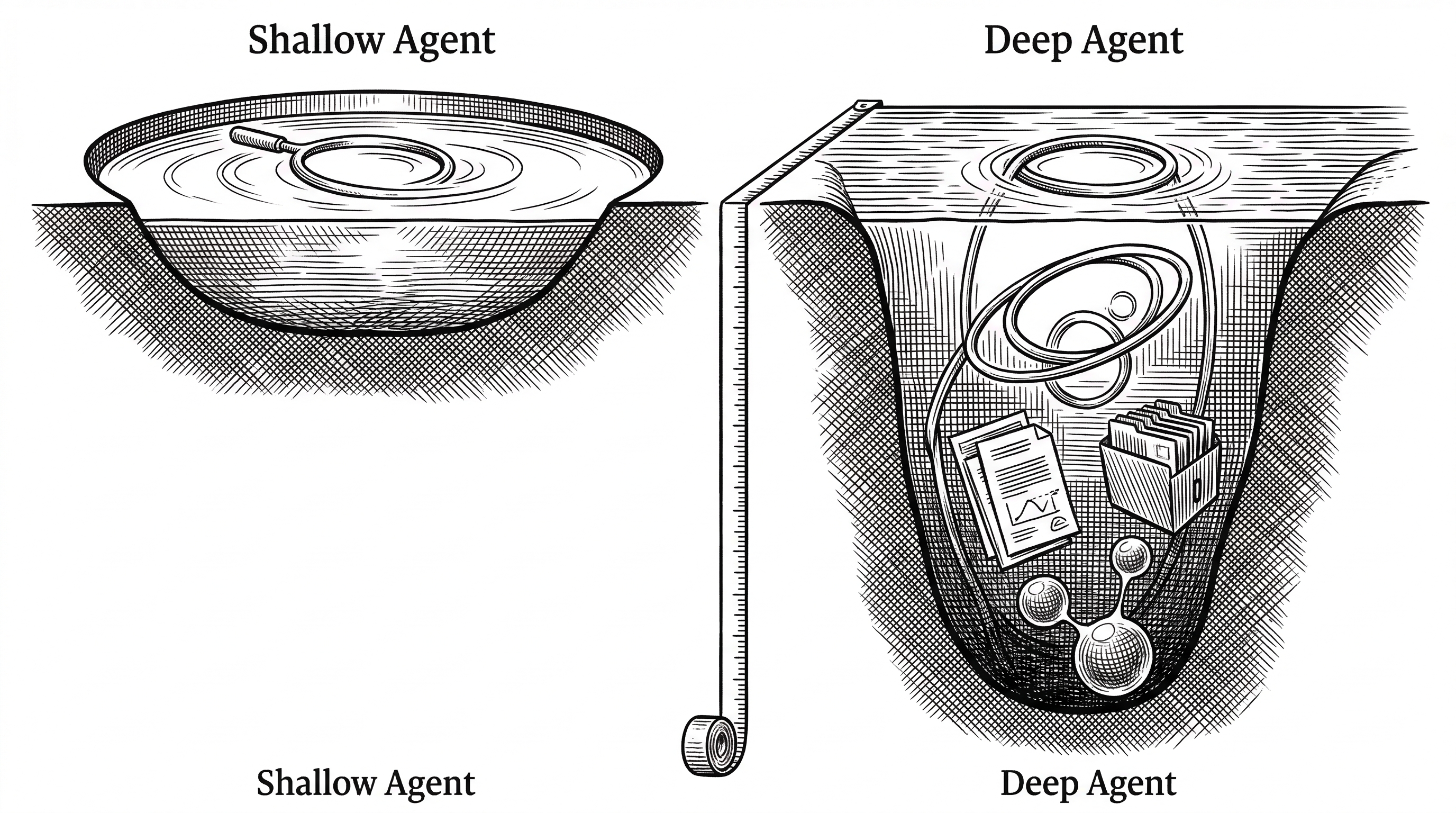

The dominant agent architecture is disarmingly simple: an LLM calling tools in a loop. You pass a user message in, the model decides which tool to call, the tool returns a result, and the model decides again. Repeat until done.
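The loop described above fits in a few lines. This is a toy sketch rather than any framework's code: `fake_model`, the `TOOLS` registry, and the message dictionaries are all hypothetical stand-ins for a real LLM and real tools.

```python
from typing import Callable

# Hypothetical tool registry: plain functions keyed by name.
TOOLS: dict[str, Callable[[str], str]] = {
    "get_weather": lambda city: f"Sunny in {city}",
}

def fake_model(messages: list[dict]) -> dict:
    """Stand-in for an LLM: request a tool once, then answer from its result."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"role": "assistant", "content": f"Answer: {last['content']}"}
    return {"role": "assistant", "tool": "get_weather", "args": "Paris"}

def run_loop(user_message: str, max_turns: int = 5) -> str:
    messages = [{"role": "user", "content": user_message}]
    for _ in range(max_turns):
        reply = fake_model(messages)
        messages.append(reply)
        if "tool" not in reply:  # no tool requested, so the loop is done
            return reply["content"]
        result = TOOLS[reply["tool"]](reply["args"])
        messages.append({"role": "tool", "content": result})
    return "stopped: turn limit reached"

print(run_loop("What is the weather in Paris?"))
```

Everything the agent knows lives in that single `messages` list, which is why the approach strains once a task needs more than a handful of turns.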

This works for simple tasks. Ask it to look up the weather, format a date, or query a database, and you get a clean answer in one or two turns. But try asking it to research a topic, write a report, and publish it, and the loop collapses. The agent forgets what it already tried. It burns through context re-reading the same files. It has no way to delegate a subtask without losing its own train of thought.

Harrison Chase, CEO of LangChain, calls these "shallow agents." They loop, but they do not plan. They have hands, but no memory of what those hands already touched.

"Applications like Deep Research, Manus, and Claude Code have gotten around this limitation by implementing a combination of four things: a planning tool, sub agents, access to a file system, and a detailed prompt."

These four ingredients transform a shallow loop into something that can sustain complex, multi-step work over minutes or hours. LangChain's Deep Agents project packages all four into a single open-source harness that you can install with one command and customize as needed.

What Ships in the Box

Run pip install deepagents and you get a working agent in three lines of Python. That agent comes pre-loaded with eight tools, a detailed system prompt, and a middleware stack that handles the hard parts of long-running autonomy.

The built-in tools break into three categories. Planning gives the agent write_todos for task breakdown and progress tracking. Filesystem provides read_file, write_file, edit_file, ls, glob, and grep for reading and writing context. Shell access offers execute for running commands, with sandboxing available when the backend supports it.

Then there is the ninth tool, arguably the most important: task. This one spawns subagents. We will get to that shortly.

Planning: The Agent's Inner Monologue

When a Deep Agent encounters a complex request, the first thing it does is plan. The write_todos tool lets it break work into discrete steps, stored in a todos field on the agent's state. This is not a static list. The agent updates its plan as it works, checking off completed items and adding new ones as it discovers unexpected requirements.

The planning tool is implemented as middleware (TodoListMiddleware) that injects the current todo state into every model call. The agent always knows where it is in the plan. This pattern comes directly from observing how Claude Code and Manus handle multi-step tasks: they break work into chunks, track progress, and adapt.
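To make the injection idea concrete, here is a minimal sketch of a todo list that is re-rendered into the system prompt on every call. It is not the TodoListMiddleware source. The `AgentState` class, the helper names, and the status values (`pending`, `in_progress`, `completed`) are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class AgentState:
    messages: list[dict] = field(default_factory=list)
    todos: list[dict] = field(default_factory=list)

def write_todos(state: AgentState, todos: list[dict]) -> None:
    """Replace the whole plan; the agent rewrites it as work progresses."""
    state.todos = todos

def render_todos(todos: list[dict]) -> str:
    marks = {"pending": "[ ]", "in_progress": "[~]", "completed": "[x]"}
    return "\n".join(f"{marks[t['status']]} {t['content']}" for t in todos)

def with_todos(state: AgentState, system_prompt: str) -> list[dict]:
    """Build the message list for one model call, current plan included."""
    plan = render_todos(state.todos) or "(no plan yet)"
    system = {"role": "system", "content": f"{system_prompt}\n\nCurrent plan:\n{plan}"}
    return [system, *state.messages]

state = AgentState(messages=[{"role": "user", "content": "Deploy the app"}])
write_todos(state, [
    {"content": "Run tests", "status": "completed"},
    {"content": "Build image", "status": "in_progress"},
])
print(with_todos(state, "You are a careful agent.")[0]["content"])
```

Because the plan is rebuilt from state on each call, it stays visible to the model even after older messages have been dropped or summarized.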

Planning sounds like a small feature. In practice, it is the difference between an agent that spins in circles and one that methodically works through a twenty-step deployment.

The Architecture

At the center of Deep Agents sits create_deep_agent(), a factory function that assembles a compiled LangGraph graph. It does not hide LangGraph behind its own abstraction: it returns a real CompiledStateGraph that you can use with streaming, checkpointers, LangGraph Studio, or any other feature in the LangGraph ecosystem.

The graph runs a standard tool-calling loop, but the middleware stack is where the real intelligence lives. Six middleware layers compose around the loop:

| Middleware | Purpose |
|---|---|
| TodoListMiddleware | Injects todo state into each model call for persistent planning |
| FilesystemMiddleware | Wires up the six file tools to the configured backend |
| SubAgentMiddleware | Adds the task() tool for spawning isolated subagents |
| SummarizationMiddleware | Auto-compacts conversations when token usage exceeds a threshold |
| AnthropicPromptCachingMiddleware | Optimizes token usage when running on Claude models |
| PatchToolCallsMiddleware | Fixes common model mistakes in tool call formatting |

You can add your own middleware after the defaults. The composable design means you can slot in rate limiting, logging, custom approval flows, or anything else without touching the core loop.
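The composition idea can be sketched with plain function wrappers. This is an illustration of the pattern only, not the middleware interface that Deep Agents or LangChain actually exposes. `ModelCall`, `compose`, and both example layers are hypothetical names.

```python
from typing import Callable

ModelCall = Callable[[list[str]], str]
Middleware = Callable[[ModelCall], ModelCall]

def logging_middleware(log: list[str]) -> Middleware:
    def wrap(next_call: ModelCall) -> ModelCall:
        def call(messages: list[str]) -> str:
            log.append(f"calling model with {len(messages)} messages")
            return next_call(messages)
        return call
    return wrap

def truncate_middleware(max_messages: int) -> Middleware:
    def wrap(next_call: ModelCall) -> ModelCall:
        def call(messages: list[str]) -> str:
            return next_call(messages[-max_messages:])  # keep only the newest
        return call
    return wrap

def compose(model: ModelCall, middleware: list[Middleware]) -> ModelCall:
    """Wrap the model so the first middleware in the list runs outermost."""
    for layer in reversed(middleware):
        model = layer(model)
    return model

def echo_model(messages: list[str]) -> str:
    """Stand-in for the LLM call at the center of the loop."""
    return f"saw {len(messages)} messages"

log: list[str] = []
agent_call = compose(echo_model, [logging_middleware(log), truncate_middleware(2)])
print(agent_call(["a", "b", "c"]))  # saw 2 messages
print(log)                          # ['calling model with 3 messages']
```

Order matters: the logger sees three messages because it runs before truncation, while the model only sees two.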

Subagent Spawning: Divide and Conquer

The task() tool is the feature that separates Deep Agents from simpler agent frameworks. When the main agent encounters a subtask that would pollute its context, it spawns an ephemeral subagent with its own isolated context window.

Each subagent gets its own system prompt, its own tools, and its own middleware stack. By default, subagents inherit the parent's tools, but you can override everything. The parent agent can launch multiple subagents concurrently in a single response, and each subagent runs its own full tool-calling loop until it completes.

When a subagent finishes, it returns exactly one message back to the parent. The parent never sees the subagent's intermediate steps. This is deliberate: context isolation is the entire point. A research subagent can read thirty files and execute a dozen shell commands without burning a single token in the parent's context window.
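Context isolation is easier to see in code. The sketch below is a toy model of the idea, not the task() implementation: `run_subagent`, `fake_researcher`, and the `final` flag are hypothetical. The subagent works in its own message list, and only its last message crosses back to the parent.

```python
from typing import Callable

def run_subagent(task: str, step: Callable[[list[dict]], dict], max_steps: int = 20) -> str:
    """Run a full loop in a fresh message list; return only the final text."""
    messages = [{"role": "user", "content": task}]  # isolated context
    for _ in range(max_steps):
        reply = step(messages)
        messages.append(reply)
        if reply.get("final"):
            return reply["content"]
    return "Subagent stopped: step limit reached"

def fake_researcher(messages: list[dict]) -> dict:
    """Stand-in subagent model: three noisy steps, then a summary."""
    if len(messages) < 4:
        return {"role": "assistant", "content": f"read file {len(messages)}"}
    return {"role": "assistant", "content": "Summary: 3 files reviewed", "final": True}

parent_messages = [{"role": "user", "content": "Audit the repo"}]
result = run_subagent("Review the source files", fake_researcher)
parent_messages.append({"role": "tool", "name": "task", "content": result})

print(len(parent_messages))            # 2
print(parent_messages[-1]["content"])  # Summary: 3 files reviewed
```

The three intermediate "read file" messages never reach `parent_messages`, so the parent pays for one short result instead of the whole exploration.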

Defining a custom subagent looks like this:

    from deepagents import create_deep_agent

    # web_search and read_file are assumed to be tool callables defined elsewhere.
    agent = create_deep_agent(
        subagents=[{
            "name": "researcher",
            "description": "Deep research on any topic",
            "system_prompt": "You research topics thoroughly...",
            "tools": [web_search, read_file],
            "model": "openai:gpt-4o",
        }]
    )

The main agent sees a description of each available subagent and decides when to delegate based on that description. A general-purpose subagent ships by default, giving every Deep Agent delegation capability out of the box.

The Filesystem as Extended Memory

The filesystem is not just a convenience feature. It is the agent's primary mechanism for managing information that does not fit in context. Large tool outputs get saved to files automatically. Research notes accumulate across steps. Artifacts like code, reports, and data persist between subagent calls.
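One way to picture the automatic offloading of large tool outputs is the sketch below. The character threshold, the `/tool_outputs/` path, and the function name are assumptions made for illustration. The framework's actual trigger and file layout may differ.

```python
def offload_if_large(files: dict[str, str], name: str, output: str,
                     max_chars: int = 200) -> str:
    """Return small outputs unchanged; save large ones and return a preview."""
    if len(output) <= max_chars:
        return output
    path = f"/tool_outputs/{name}.txt"  # hypothetical location
    files[path] = output                # dict stands in for the file backend
    return f"{output[:max_chars]}\n... [truncated, full output saved to {path}]"

files: dict[str, str] = {}
short = offload_if_large(files, "ls", "a.py b.py")
long = offload_if_large(files, "grep", "x" * 1000)

print(short)        # a.py b.py
print(list(files))  # ['/tool_outputs/grep.txt']
```

The model sees a short preview plus a path, and can page through the saved file later if the details turn out to matter.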

What makes this powerful is the pluggable backend system. The same six file tools work identically regardless of where the files actually live.

| Backend | Storage | Use Case |
|---|---|---|
| StateBackend | In-memory (LangGraph state) | Short-lived agents, testing |
| StoreBackend | LangGraph Store | Cross-thread persistence |
| FilesystemBackend | Local disk | Development, local agents |
| SandboxBackend | Modal, Daytona, Deno | Isolated code execution |
| CompositeBackend | Route by path prefix | Mix backends per directory |

The CompositeBackend is particularly clever. You can route /workspace/ to a sandbox, /shared/ to a persistent store, and /tmp/ to in-memory state, all in the same agent.
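Routing by path prefix can be sketched as follows. This illustrates the idea and does not reproduce the CompositeBackend API: `DictBackend` and `PrefixRouter` are hypothetical classes, and the longest-prefix rule is an assumption about how overlapping routes would be resolved.

```python
class DictBackend:
    """Toy backend: a labelled in-memory dict standing in for real storage."""
    def __init__(self, label: str) -> None:
        self.label = label
        self.files: dict[str, str] = {}

    def write(self, path: str, content: str) -> None:
        self.files[path] = content

    def read(self, path: str) -> str:
        return self.files[path]

class PrefixRouter:
    def __init__(self, default: DictBackend, routes: dict[str, DictBackend]) -> None:
        self.default = default
        # Longest prefix first, so a more specific route wins over a general one.
        self.routes = sorted(routes.items(), key=lambda kv: len(kv[0]), reverse=True)

    def backend_for(self, path: str) -> DictBackend:
        for prefix, backend in self.routes:
            if path.startswith(prefix):
                return backend
        return self.default

    def write(self, path: str, content: str) -> None:
        self.backend_for(path).write(path, content)

    def read(self, path: str) -> str:
        return self.backend_for(path).read(path)

sandbox, store, memory = DictBackend("sandbox"), DictBackend("store"), DictBackend("state")
fs = PrefixRouter(default=memory, routes={"/workspace/": sandbox, "/shared/": store})

fs.write("/workspace/app.py", "print('hi')")
fs.write("/shared/notes.md", "findings")
fs.write("/tmp/scratch.txt", "temp")

print(fs.backend_for("/workspace/app.py").label)  # sandbox
print(fs.backend_for("/tmp/scratch.txt").label)   # state
```

The agent's file tools call `read` and `write` without knowing which backend answers, which is what lets the storage layout change without touching agent code.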

Auto-Summarization: Running for Hours

Long-running agents inevitably hit context limits. Deep Agents solves this with SummarizationMiddleware, which watches token usage and automatically compacts the conversation when it crosses a threshold.

When summarization triggers, older messages are compressed by an LLM into a concise summary, and the full original history is offloaded to a file at /conversation_history/{thread_id}.md. The agent keeps working with a shorter context that still preserves the essential information from earlier turns.

You can also expose summarization as a tool via SummarizationToolMiddleware, letting the agent (or a human-in-the-loop flow) trigger compaction on demand. This combination of automatic and manual summarization is what enables agents to sustain complex work across dozens or even hundreds of steps.
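The compaction step can be sketched in a few lines. This is a simplified illustration, not SummarizationMiddleware itself. The word-count tokenizer, the `archive` list standing in for the history file, and the `keep_last` parameter are all assumptions.

```python
from typing import Callable

def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: whitespace-separated words."""
    return len(text.split())

def compact(messages: list[str], summarize: Callable[[list[str]], str],
            archive: list[str], max_tokens: int = 50, keep_last: int = 2) -> list[str]:
    """Summarize older messages once the total crosses max_tokens."""
    total = sum(count_tokens(m) for m in messages)
    if total <= max_tokens or len(messages) <= keep_last:
        return messages
    old, recent = messages[:-keep_last], messages[-keep_last:]
    archive.extend(old)  # stand-in for offloading full history to a file
    return [f"[summary] {summarize(old)}", *recent]

history = ["word " * 30, "word " * 30, "recent one", "recent two"]
archive: list[str] = []
compacted = compact(history, lambda old: f"{len(old)} earlier messages", archive)

print(compacted)     # ['[summary] 2 earlier messages', 'recent one', 'recent two']
print(len(archive))  # 2
```

In a real agent the `summarize` callable would be an LLM call, and the most recent messages are kept verbatim so the model does not lose its immediate working context.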

The Prompt Engineering

Deep Agents ships with a carefully tuned base prompt that teaches the model how to behave as an effective autonomous agent. The prompt is opinionated and direct.

"Be concise and direct. Don't over-explain unless asked. NEVER add unnecessary preamble. Don't say 'I'll now do X' -- just do it."

The prompt covers core behavior, tool usage patterns, file reading best practices (pagination to prevent context overflow), progress updates for long tasks, and professional objectivity. It explicitly instructs the model to prioritize accuracy over validating the user's beliefs and to disagree respectfully when the user is incorrect.
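The pagination practice mentioned above is worth a small illustration. The sketch below is not the built-in read_file tool. The `read_page` name, the `offset` and `limit` parameters, and the continuation hint are assumptions about what a paginated reader might look like.

```python
def read_page(text: str, offset: int = 0, limit: int = 100) -> str:
    """Return up to `limit` numbered lines starting at `offset` (0-based)."""
    lines = text.splitlines()
    page = lines[offset:offset + limit]
    numbered = [f"{offset + i + 1}: {line}" for i, line in enumerate(page)]
    remaining = len(lines) - (offset + len(page))
    if remaining > 0:
        next_offset = offset + len(page)
        numbered.append(f"... {remaining} more lines; call again with offset={next_offset}")
    return "\n".join(numbered)

document = "\n".join(f"line {n}" for n in range(1, 6))
print(read_page(document, offset=0, limit=2))
print(read_page(document, offset=4, limit=2))
```

Reading in bounded pages keeps a single large file from consuming the context window, and the trailing hint tells the model exactly how to continue.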

This kind of prompt engineering matters more than it seems. Without it, models tend toward verbose, people-pleasing behavior that wastes tokens and produces worse results. The prompt transforms a generic language model into an effective tool-using agent.

Provider Agnostic by Design

Deep Agents defaults to Claude Sonnet 4.6, but the framework is built to work with any model that supports tool calling. Swapping providers is a one-line change:

    from deepagents import create_deep_agent
    from langchain.chat_models import init_chat_model

    # OpenAI
    agent = create_deep_agent(model="openai:gpt-4o")

    # Google
    agent = create_deep_agent(model="google_genai:gemini-2.5-pro")

    # Any model via init_chat_model
    agent = create_deep_agent(model=init_chat_model("bedrock:..."))

This is the core differentiator against Claude Code and OpenAI's Codex CLI, which are locked to their respective providers. If you are building agents into a product, vendor lock-in is a real concern. Deep Agents lets you benchmark across providers, fall back between them, or use different models for different subagents.
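Falling back between providers is something you would build on top of this flexibility. A minimal sketch of the pattern follows. It uses plain callables as stand-ins for model clients, and `with_fallback`, `flaky`, and `steady` are hypothetical names rather than Deep Agents APIs.

```python
from typing import Callable

Model = Callable[[str], str]

def with_fallback(models: list[tuple[str, Model]]) -> Callable[[str], tuple[str, str]]:
    """Try each model in order; return (model_name, reply) from the first success."""
    def call(prompt: str) -> tuple[str, str]:
        errors = []
        for name, model in models:
            try:
                return name, model(prompt)
            except Exception as exc:  # a real system would catch narrower errors
                errors.append(f"{name}: {exc}")
        raise RuntimeError("all models failed: " + "; ".join(errors))
    return call

def flaky(prompt: str) -> str:
    raise TimeoutError("timed out")

def steady(prompt: str) -> str:
    return prompt.upper()

ask = with_fallback([("primary", flaky), ("backup", steady)])
print(ask("hello"))  # ('backup', 'HELLO')
```

Because every provider sits behind the same call shape, the fallback logic never needs to know which vendor it is talking to.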

The Competitive Landscape

Deep Agents exists in a crowded field. The project's README explicitly acknowledges its inspiration: "This project was primarily inspired by Claude Code, and initially was largely an attempt to see what made Claude Code general purpose, and make it even more so."

| Feature | Deep Agents | Claude Code | OpenAI Codex |
|---|---|---|---|
| License | MIT (free) | Proprietary (paid plans) | Apache 2.0 |
| Model Lock-in | None (100+ providers) | Claude only | OpenAI only |
| Planning Tool | write_todos | Built-in TodoWrite | Limited |
| Subagents | task() with isolation | Subagent spawning | Not available |
| Filesystem | Pluggable backends | Direct host access | Sandboxed |
| Context Management | Auto-summarization | Conversation compaction | Limited |
| Primary Use | Embedded in products | Developer CLI tool | Coding assistant |

The strategic positioning is clear. Claude Code and Codex are tools you use directly. Deep Agents is a library you embed into your product. If you are building a research assistant, a content pipeline, a code review bot, or any agent-powered feature, Deep Agents gives you the same architectural patterns without tying you to a single vendor.

The CLI: Deep Agents as a Product

LangChain also ships a CLI that turns Deep Agents into a standalone coding assistant, similar to Claude Code. The CLI adds web search, remote sandboxes, persistent memory, and human-in-the-loop approval on top of the core library.

This dual nature matters. The library serves developers building agent-powered products. The CLI serves developers who want a coding assistant they can customize and control. Same engine, two very different interfaces.

The CLI installs via a single curl command and works out of the box. But because it is built on the same create_deep_agent() foundation, anything you learn configuring the CLI applies directly to your own agent code.

Security: Trust the LLM, Sandbox Everything Else

Deep Agents takes an explicit stance on security: "Trust the LLM model. The agent can do anything its tools allow. Enforce boundaries at the tool/sandbox level, not by expecting the model to self-police."

This is refreshingly honest. Instead of pretending the model will always follow safety instructions, the framework pushes security down to the infrastructure layer. The SandboxBackend runs file operations and shell commands in isolated containers via Modal, Daytona, or Deno. The v0.4 release made this a first-class feature with pluggable sandbox support.

For non-sandboxed deployments, the execute tool simply returns an error. The default is safe. You opt in to giving the agent system access, and when you do, you control exactly how much access through backend configuration.
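The safe-by-default behavior can be sketched as a capability check. This is an illustration under assumptions, not the framework's code: the `supports_execution` flag, both backend classes, and the error text are hypothetical.

```python
class NoSandbox:
    """Backend with file storage only; it cannot run commands."""
    supports_execution = False

class FakeSandbox:
    """Stand-in for an isolated environment that can run commands."""
    supports_execution = True

    def run(self, command: str) -> str:
        return f"ran: {command}"

def execute(backend: object, command: str) -> str:
    """Run a command only when the backend explicitly supports it."""
    if not getattr(backend, "supports_execution", False):
        return "Error: this backend does not support command execution"
    return backend.run(command)

print(execute(NoSandbox(), "rm -rf /"))  # Error: this backend does not support command execution
print(execute(FakeSandbox(), "ls"))      # ran: ls
```

The tool returns an error string instead of raising, so the model can read the refusal and adjust its plan.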

Real-World Patterns

The examples/ directory ships six reference implementations that show Deep Agents handling genuinely complex workflows. Four of them illustrate the range:

Deep Research uses subagents to parallelize web research, with a coordinator agent synthesizing findings into a structured report. Content Builder chains planning, file writing, and iteration to produce long-form content. Text-to-SQL connects agents to databases with natural language interfaces. NVIDIA Deep Agent demonstrates integration with NVIDIA's enterprise AI stack.

The pattern across all examples is the same: plan, delegate, write to files, summarize, report. The tools change. The workflow structure does not. This consistency is the framework's strongest argument for itself.

The Middleware Model

Under the surface, Deep Agents is really a middleware composition system. Every feature beyond the basic tool loop is implemented as middleware that wraps the model call.

This design has a subtle but important consequence. When you call create_deep_agent(), you are not configuring a monolithic system. You are composing a pipeline of independent, testable layers. Need human-in-the-loop approval for certain tools? Add HumanInTheLoopMiddleware. Want persistent memory across sessions? Add MemoryMiddleware. Want to define reusable agent skills? Add SkillsMiddleware.

Each middleware can read and modify the agent state, add or remove tools, transform messages, and intercept tool calls. This composability is what makes Deep Agents a framework rather than a product. The defaults are strong enough to use without customization, but the seams are clean enough to replace any part.
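Intercepting tool calls is the piece that makes approval flows possible. The sketch below shows the general shape and is not the HumanInTheLoopMiddleware interface. `require_approval`, `call_tool`, and the reviewer callback are hypothetical.

```python
from typing import Callable

ToolCall = Callable[[str, dict], str]

def require_approval(tool_names: set[str],
                     approve: Callable[[str, dict], bool]) -> Callable[[ToolCall], ToolCall]:
    """Gate the named tools behind a reviewer callback; pass others through."""
    def wrap(call_tool: ToolCall) -> ToolCall:
        def guarded(name: str, args: dict) -> str:
            if name in tool_names and not approve(name, args):
                return f"Tool call '{name}' was rejected by the reviewer"
            return call_tool(name, args)
        return guarded
    return wrap

def call_tool(name: str, args: dict) -> str:
    """Stand-in tool dispatcher."""
    return f"{name} ok"

# Hypothetical policy: only writes under /tmp/ are approved.
def reviewer(name: str, args: dict) -> bool:
    return args.get("path", "").startswith("/tmp/")

guarded = require_approval({"write_file"}, reviewer)(call_tool)

print(guarded("read_file", {"path": "/etc/hosts"}))   # read_file ok
print(guarded("write_file", {"path": "/etc/hosts"}))  # Tool call 'write_file' was rejected by the reviewer
print(guarded("write_file", {"path": "/tmp/a.txt"}))  # write_file ok
```

In a real deployment the reviewer would pause the graph and wait for a person, but the interception point is the same.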

What the Name Means

The word "deep" is doing real work. It contrasts with "shallow" agents that loop without planning, forget what they tried, and cannot delegate. A deep agent plans ahead. It offloads information it does not need right now. It spawns specialists for focused work. It sustains coherent behavior across long task horizons.

Whether that depth comes from LangChain's framework or from wiring up the same patterns yourself is a choice. The contribution of Deep Agents is making that choice easy: install one package, call one function, and get a working deep agent that you can customize from there.

The repository is moving fast. Since its creation in July 2025, it has shipped four major releases, accumulated over 14,000 stars, and attracted more than 2,000 forks. The v0.4 release added sandboxing. The roadmap points toward even richer context management and deeper integrations with the LangChain ecosystem.

For teams building agent-powered products, Deep Agents offers something the proprietary alternatives cannot: the same battle-tested patterns, fully open, fully customizable, and free from vendor lock-in. The shallow agent era is over. The deep one has just begun.