DeerFlow: ByteDance's Middleware-Powered SuperAgent Harness That Actually Ships

Nine composable middlewares, on-demand skills, persistent sandboxes, and aggressive memory management turn LangGraph into a runtime that researches, codes, and creates for hours.

- DeerFlow transforms LangGraph into a production runtime using nine focused middleware classes that intercept every turn to prevent infinite loops, memory bloat, and unhandled errors.

- Every agent receives its own persistent virtual computer with an isolated filesystem, shell execution, and Docker or Kubernetes backends that survive across multi-hour tasks.

- Skills load on demand from a directory of Python functions and Markdown workflows, keeping context windows small while enabling rich extensibility.

- Aggressive memory management through summarization, thread state, and filesystem offload allows agents to research, code, and produce artifacts reliably for hours.

Nine Middlewares That Keep Agents Alive

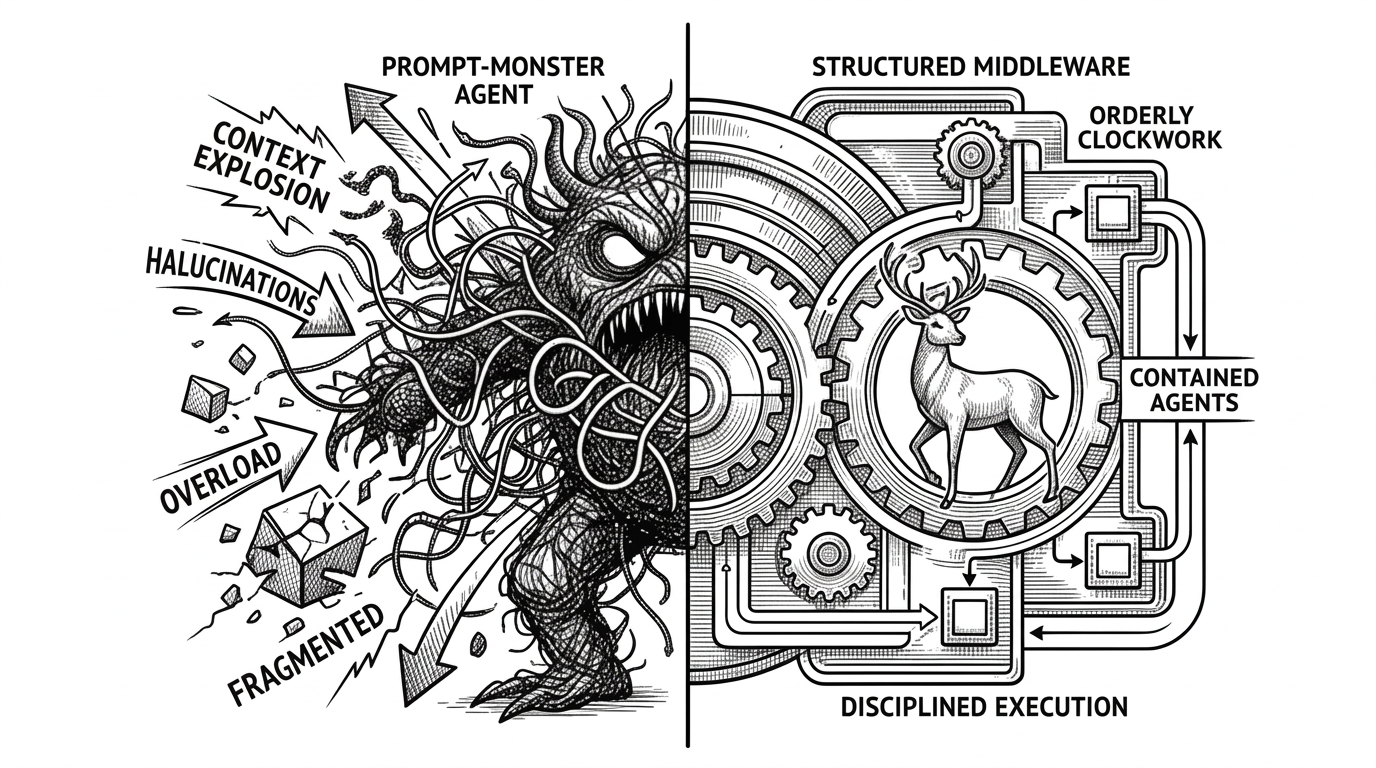

Most agent frameworks hand you a graph and hope for the best. DeerFlow ships a production runtime built around a clean architectural pattern: nine composable middleware classes that wrap the core LangGraph loop.

These layers intercept every agent turn to handle the failure modes that kill long-running agents: infinite loops, dangling tool calls, memory bloat, missing clarifications, untracked todos, and unhandled errors. Instead of cramming fixes into ever-larger system prompts or monolithic nodes, each concern gets its own focused class.

This pattern makes the system maintainable. Each middleware is a small, testable class with a single responsibility. The order of application is explicit and configurable. When something goes wrong in production, you know exactly which layer caught it.
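To make the pattern concrete, here is a minimal sketch of composable middleware wrapping a core step. It is not DeerFlow's actual code: `TurnState`, `LoopDetection`, `ErrorBoundary`, and `compose` are hypothetical names chosen for illustration, and the core step is a stub standing in for the LangGraph loop.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class TurnState:
    """Hypothetical per-turn state passed through the middleware chain."""
    tool_calls: list[str] = field(default_factory=list)
    events: list[str] = field(default_factory=list)
    halted: bool = False


Handler = Callable[[TurnState], TurnState]
Middleware = Callable[[TurnState, Handler], TurnState]


class LoopDetection:
    """Halts the turn when the last `max_repeats` tool calls are identical."""

    def __init__(self, max_repeats: int = 3) -> None:
        self.max_repeats = max_repeats

    def __call__(self, state: TurnState, next_handler: Handler) -> TurnState:
        recent = state.tool_calls[-self.max_repeats:]
        if len(recent) == self.max_repeats and len(set(recent)) == 1:
            state.halted = True
            state.events.append("loop_detected")
            return state  # short-circuit: inner layers and the core never run
        return next_handler(state)


class ErrorBoundary:
    """Converts an exception raised further down the chain into an event."""

    def __call__(self, state: TurnState, next_handler: Handler) -> TurnState:
        try:
            return next_handler(state)
        except Exception as exc:
            state.events.append(f"error:{type(exc).__name__}")
            return state


def _wrap(middleware: Middleware, next_handler: Handler) -> Handler:
    def wrapped(state: TurnState) -> TurnState:
        return middleware(state, next_handler)
    return wrapped


def compose(middlewares: list[Middleware], core: Handler) -> Handler:
    """Wrap `core` so that the first middleware in the list runs outermost."""
    handler = core
    for middleware in reversed(middlewares):
        handler = _wrap(middleware, handler)
    return handler


def core_step(state: TurnState) -> TurnState:
    """Stub for the real agent step (an assumption, not the LangGraph API)."""
    state.events.append("core_ran")
    return state


run_turn = compose([ErrorBoundary(), LoopDetection(max_repeats=3)], core_step)
print(run_turn(TurnState(tool_calls=["search", "read"])).events)
print(run_turn(TurnState(tool_calls=["search"] * 3)).events)
```

Because the order is just the order of the list, moving a layer inward or outward is a one-line change, and each class can be unit-tested with a stub `next_handler`.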

“DeerFlow started as a Deep Research framework — and the community ran with it. That told us something important: DeerFlow wasn't just a research tool. It was a harness — a runtime that gives agents the infrastructure to actually get work done.”

From Deep Research to Full SuperAgent

DeerFlow began life inside ByteDance as a specialized deep-research tool. Version 1 excelled at web crawling, literature synthesis, and structured reporting. But users kept pushing the boundaries into coding, data analysis, slide creation, and full application workflows.

The team listened. On February 28, 2026, they released DeerFlow 2.0, a ground-up rewrite built on LangGraph 1.0. The new version ships as a complete, opinionated runtime rather than a set of building blocks.


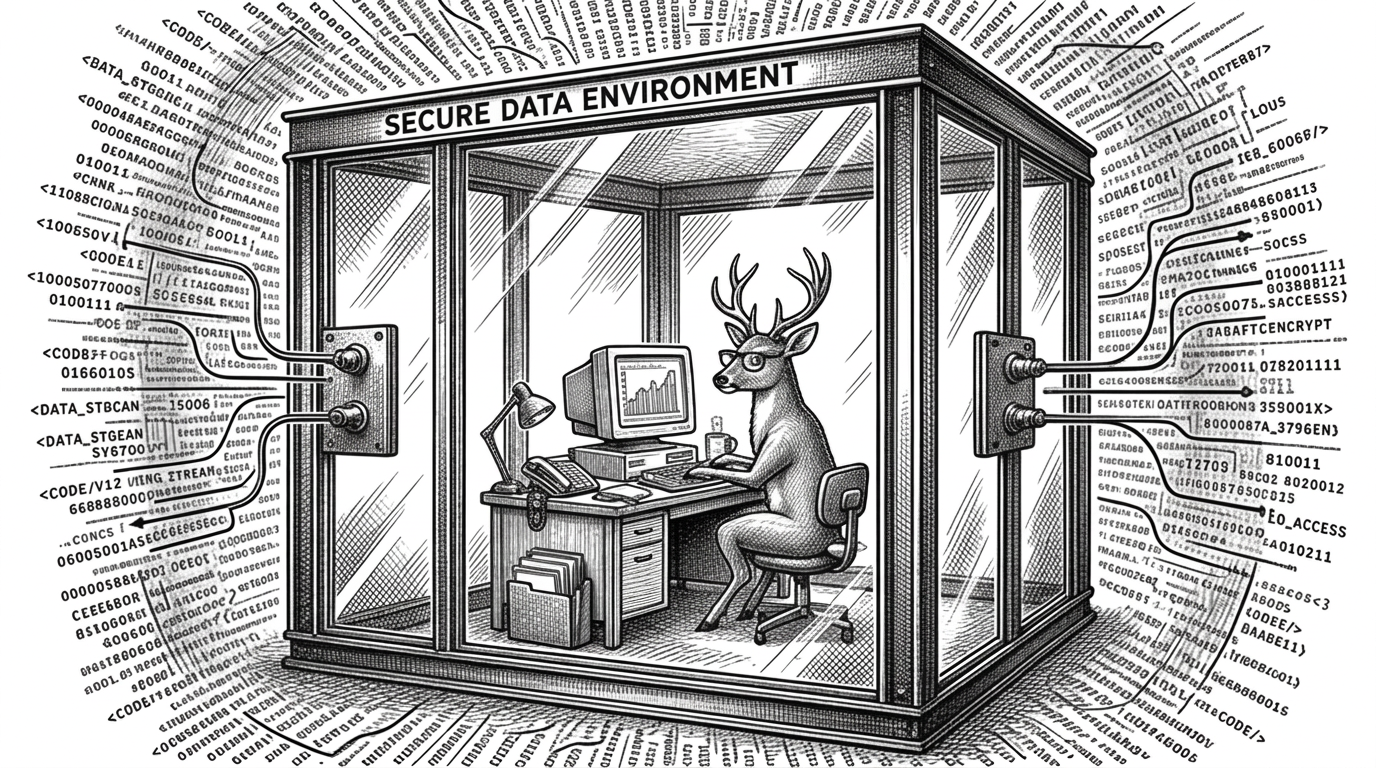

The Virtual Computer

The most transformative idea in DeerFlow is giving every agent its own safe computer. The sandbox abstraction provides an isolated filesystem, shell execution, and persistent workspace across tasks.

Backends include direct local execution, Docker containers, and Kubernetes via a provisioner. The community-contributed aio_sandbox brings improved async performance. Files live in predictable locations like /mnt/user-data/workspace and /mnt/user-data/outputs.

This design solves the real pain of agents that need to read, write, and execute over long sessions. The agent can build projects incrementally, maintain state across hours, and produce tangible artifacts without polluting the host environment.
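The idea of a fixed virtual path layout can be sketched with a local backend that maps `/mnt/user-data/...` onto a host directory. This is an illustration under stated assumptions, not DeerFlow's sandbox API: `LocalSandbox` and its methods are hypothetical, and the sketch only confines file paths. It provides no real process isolation, which is what a Docker or Kubernetes backend would add.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

VIRTUAL_ROOT = "/mnt/user-data"


class LocalSandbox:
    """Hypothetical local backend: maps virtual paths onto a host directory."""

    def __init__(self, host_root: Path) -> None:
        self.host_root = host_root.resolve()
        for name in ("workspace", "outputs"):
            (self.host_root / name).mkdir(parents=True, exist_ok=True)

    def resolve(self, virtual_path: str) -> Path:
        """Translate a virtual path to a host path, rejecting escapes."""
        prefix = VIRTUAL_ROOT + "/"
        if not virtual_path.startswith(prefix):
            raise PermissionError(f"outside sandbox: {virtual_path}")
        host_path = (self.host_root / virtual_path[len(prefix):]).resolve()
        if not host_path.is_relative_to(self.host_root):
            raise PermissionError(f"path escapes sandbox: {virtual_path}")
        return host_path

    def write_file(self, virtual_path: str, content: str) -> None:
        host_path = self.resolve(virtual_path)
        host_path.parent.mkdir(parents=True, exist_ok=True)
        host_path.write_text(content, encoding="utf-8")

    def read_file(self, virtual_path: str) -> str:
        return self.resolve(virtual_path).read_text(encoding="utf-8")

    def run(self, command: list[str], timeout: float = 30.0) -> str:
        """Run a command with the workspace as its working directory."""
        result = subprocess.run(
            command,
            cwd=self.host_root / "workspace",
            capture_output=True,
            text=True,
            timeout=timeout,
            check=True,
        )
        return result.stdout


sandbox = LocalSandbox(Path(tempfile.mkdtemp()))
sandbox.write_file("/mnt/user-data/workspace/notes.txt", "draft v1")
# The file persists between calls, so later steps can build on earlier ones.
print(sandbox.run([sys.executable, "-c", "print(open('notes.txt').read())"]))
```

Keeping the agent-facing paths identical across backends means prompts and skills never need to know whether they are running locally or in a container.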

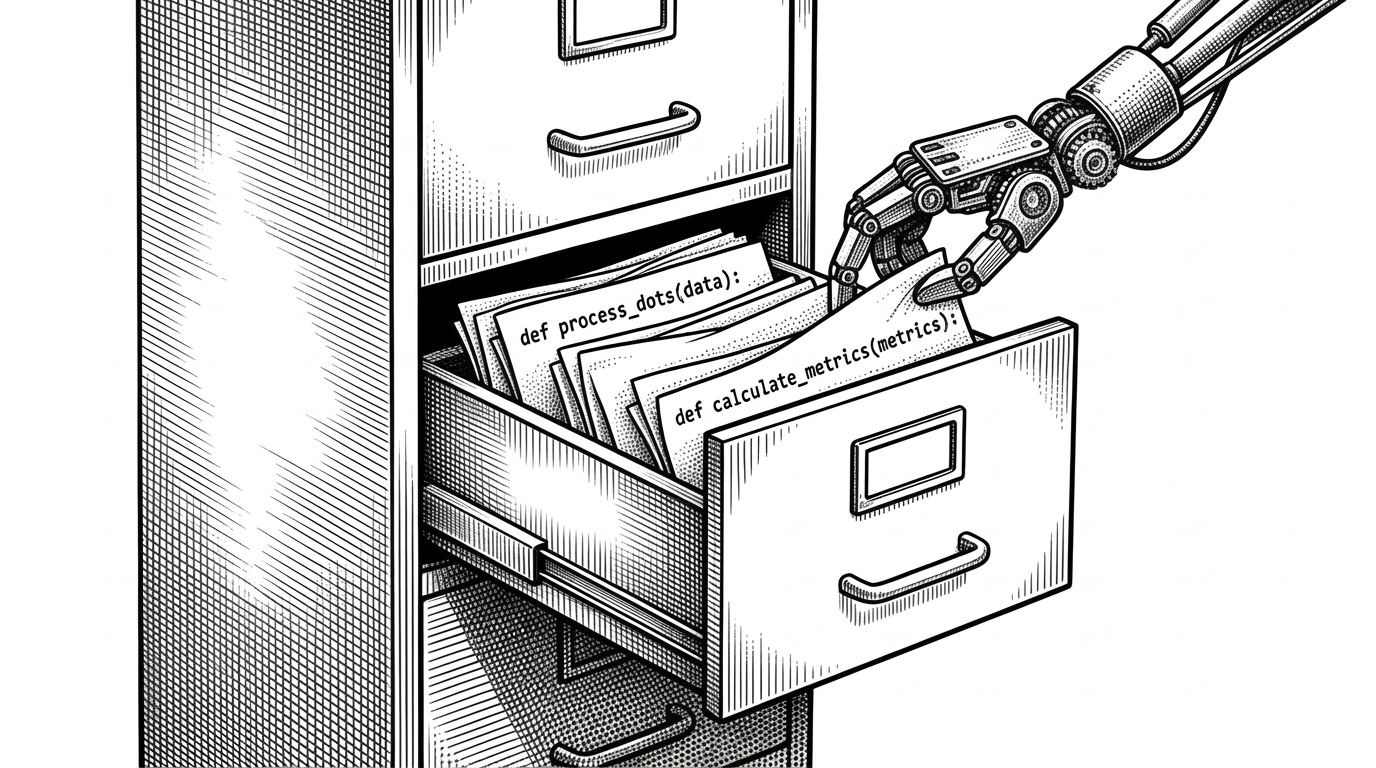

Skills as First-Class Extensibility

Traditional tool calling forces you to define everything up front. DeerFlow treats skills as first-class citizens: drop Python functions or structured Markdown workflows into a directory, and the loader automatically validates, parses, and registers them as tools.

Skills load progressively. Only the capabilities needed for the current task enter the context window. This keeps token usage under control even for local or smaller models.
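Progressive loading can be sketched as a two-stage registry: a cheap one-line catalog that always sits in context, and full instructions read only when a skill is selected. The file format below (a `---` delimited header with `name` and `description` keys) and the names `SkillRegistry`, `catalog`, and `load` are assumptions for illustration, not DeerFlow's actual loader or skill schema.

```python
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class SkillMeta:
    name: str
    description: str
    path: Path


def parse_skill(text: str) -> tuple[dict[str, str], str]:
    """Split a '---' delimited 'key: value' header from the Markdown body."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---" or "---" not in lines[1:]:
        raise ValueError("missing or unterminated header")
    end = lines[1:].index("---") + 1  # index of the closing '---'
    meta: dict[str, str] = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return meta, "\n".join(lines[end + 1:]).strip()


class SkillRegistry:
    """Indexes skill metadata up front; reads skill bodies on demand."""

    def __init__(self, skills_dir: Path) -> None:
        self.index: dict[str, SkillMeta] = {}
        for path in sorted(skills_dir.glob("*.md")):
            try:
                meta, _ = parse_skill(path.read_text(encoding="utf-8"))
            except ValueError:
                continue  # validation: skip files without a usable header
            if "name" in meta and "description" in meta:
                self.index[meta["name"]] = SkillMeta(
                    meta["name"], meta["description"], path
                )

    def catalog(self) -> str:
        """One line per skill: the only part that always enters the context."""
        return "\n".join(
            f"- {m.name}: {m.description}" for m in self.index.values()
        )

    def load(self, name: str) -> str:
        """Full instructions, read only when the agent picks this skill."""
        text = self.index[name].path.read_text(encoding="utf-8")
        return parse_skill(text)[1]


skills_dir = Path(tempfile.mkdtemp())
(skills_dir / "slides.md").write_text(
    "---\nname: slides\ndescription: Build a slide deck\n---\n"
    "1. Outline the talk.\n2. Draft one slide per point.\n",
    encoding="utf-8",
)
registry = SkillRegistry(skills_dir)
print(registry.catalog())
print(registry.load("slides"))
```

The token saving comes from the asymmetry: the catalog costs one line per skill regardless of how long each workflow is.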

Memory That Survives Hours

Long-running agents die from context bloat. DeerFlow counters this with a dedicated memory pipeline: short-term thread state, aggressive summarization of completed subtasks, long-term persistent memory of user preferences, and offloading of artifacts to the filesystem.
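Two of these mechanisms, summarization and filesystem offload, can be sketched in a few lines. Everything here is an assumption for illustration: `ThreadMemory`, `offload`, the word-count token estimate, and the toy summarizer are hypothetical stand-ins, and a real pipeline would call a model to summarize and a tokenizer to count.

```python
import tempfile
from dataclasses import dataclass, field
from pathlib import Path
from typing import Callable


def estimate_tokens(text: str) -> int:
    """Crude proxy for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


@dataclass
class ThreadMemory:
    """Keeps recent messages verbatim and folds older ones into a summary."""
    budget: int
    keep_recent: int
    summarize: Callable[[list[str]], str]
    summary: str = ""
    messages: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        if self.keep_recent < 1:
            raise ValueError("keep_recent must be at least 1")

    def add(self, message: str) -> None:
        self.messages.append(message)
        total = sum(estimate_tokens(m) for m in self.messages)
        if total <= self.budget or len(self.messages) <= self.keep_recent:
            return
        old = self.messages[:-self.keep_recent]
        prior = [self.summary] if self.summary else []
        self.summary = self.summarize(prior + old)  # fold old into summary
        self.messages = self.messages[-self.keep_recent:]

    def context(self) -> list[str]:
        """What would actually be sent to the model on the next turn."""
        head = [f"[summary] {self.summary}"] if self.summary else []
        return head + self.messages


def offload(content: str, outputs_dir: Path, name: str, inline_limit: int = 50) -> str:
    """Write large content to disk and return a short pointer instead."""
    size = estimate_tokens(content)
    if size <= inline_limit:
        return content
    (outputs_dir / name).write_text(content, encoding="utf-8")
    return f"[artifact saved to {name}: ~{size} tokens]"


def first_words(chunks: list[str]) -> str:
    """Toy summarizer standing in for a model call."""
    return " | ".join(chunk.split()[0] for chunk in chunks)


memory = ThreadMemory(budget=10, keep_recent=2, summarize=first_words)
for step in ("alpha", "beta", "gamma", "delta"):
    memory.add(f"{step} one two three")
print(memory.context())

outputs = Path(tempfile.mkdtemp())
print(offload("word " * 100, outputs, "report.md"))
```

The context stays bounded by the summary plus `keep_recent` messages, while the full artifact remains on disk where a later step can read it back.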

The project's documentation includes a dedicated file on these memory improvements, which grew out of hard lessons from the v1 era.

How DeerFlow Compares

DeerFlow is not another lightweight orchestration library. It is an opinionated runtime designed for production-grade, long-horizon autonomous work.

| Project | Long-running Safety | Context Management | Sandbox / FS | Extensibility | Opinionated Runtime |
|---|---|---|---|---|---|
| DeerFlow | 9 middleware layers + loop detection | Progressive skills, summarization, FS offload | Native Docker/K8s with persistent FS | Skills directory + MCP | Yes (full harness) |
| CrewAI | Limited | Basic | User-provided | Role-based crews | Partial |
| AutoGen | Conversation-focused | Basic group chat | User-provided | High via code | No |
| Raw LangGraph | Depends on implementation | Manual | None built-in | Maximum flexibility | No |
| MetaGPT | Role simulation | Structured workflow | Limited | Software company roles | Yes for coding |

Under the Hood

A lead agent decomposes high-level tasks, spawns specialized sub-agents with scoped context and tools, and synthesizes their outputs. Built-in agents include a capable bash executor. The task tool lets agents create structured subtasks dynamically.
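The scoping idea can be sketched as follows: each subtask names the tools and context keys it is allowed to see, and the sub-agent receives only those. `Subtask`, `run_subagent`, and `run_lead` are hypothetical names, the decomposition is hard-coded rather than produced by a model, and the sub-agent is a stub that calls each granted tool once.

```python
from dataclasses import dataclass
from typing import Callable

Tool = Callable[[str], str]


@dataclass(frozen=True)
class Subtask:
    description: str
    tool_names: tuple[str, ...]
    context_keys: tuple[str, ...]


def run_subagent(task: Subtask, tools: dict[str, Tool], context: dict[str, str]) -> str:
    """Run one subtask with only the tools and context it was granted."""
    scoped_tools = {name: tools[name] for name in task.tool_names}
    scoped_context = {key: context[key] for key in task.context_keys}
    # A real sub-agent would run a model loop here; this stub calls each
    # granted tool once with the task description.
    notes = "; ".join(f"{k}={v}" for k, v in scoped_context.items())
    results = ", ".join(tool(task.description) for tool in scoped_tools.values())
    return f"{task.description} [{notes}] -> {results}"


def run_lead(subtasks: list[Subtask], tools: dict[str, Tool], context: dict[str, str]) -> str:
    """Dispatch subtasks in order and join their outputs into one report."""
    return "\n".join(run_subagent(task, tools, context) for task in subtasks)


tools: dict[str, Tool] = {
    "search": lambda query: f"searched({query})",
    "bash": lambda command: f"ran({command})",
}
context = {"topic": "agent runtimes", "repo": "demo-app", "api_key": "hidden"}
plan = [
    Subtask("survey prior work", ("search",), ("topic",)),
    Subtask("run the test suite", ("bash",), ("repo",)),
]
print(run_lead(plan, tools, context))
```

Neither sub-agent ever sees `api_key` or the other's tool, which is the point of scoping: smaller prompts and a smaller blast radius per subtask.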

Reflection and resolver components support self-correction. MCP support brings modern tool discovery and authentication flows. The FastAPI gateway, together with WebSocket and IM channel integrations, makes it easy to interact with the system from Slack, Telegram, or a terminal.

The codebase is unusually well-documented for an agent project, with dedicated files on architecture, memory improvements, configuration, and more. This reflects ByteDance's commitment to making sophisticated agent infrastructure approachable.