Hugging Face Skills: The Rosetta Stone for AI Coding Agents

One repo, 12 skills, four coding agents. Hugging Face wrote the instruction manual that lets Claude Code, Codex, Gemini CLI, and Cursor fine-tune models, build datasets, and run GPU jobs without any of them needing to learn each other's language.

- Hugging Face Skills packages ML expertise into portable folders that work natively with Claude Code, OpenAI Codex, Google Gemini CLI, and Cursor through a single publish step.

- The repo already ships 12 production-grade skills covering model training, dataset creation, evaluation, experiment tracking, and paper publishing on Hugging Face infrastructure.

- By adopting the open Agent Skills standard rather than inventing a proprietary format, Hugging Face positioned itself as the default skill provider across every major coding agent.

- Skills turn coding agents from general-purpose assistants into specialized ML engineers that can submit GPU jobs, monitor training runs, and push models to the Hub.

The Problem Nobody Talks About

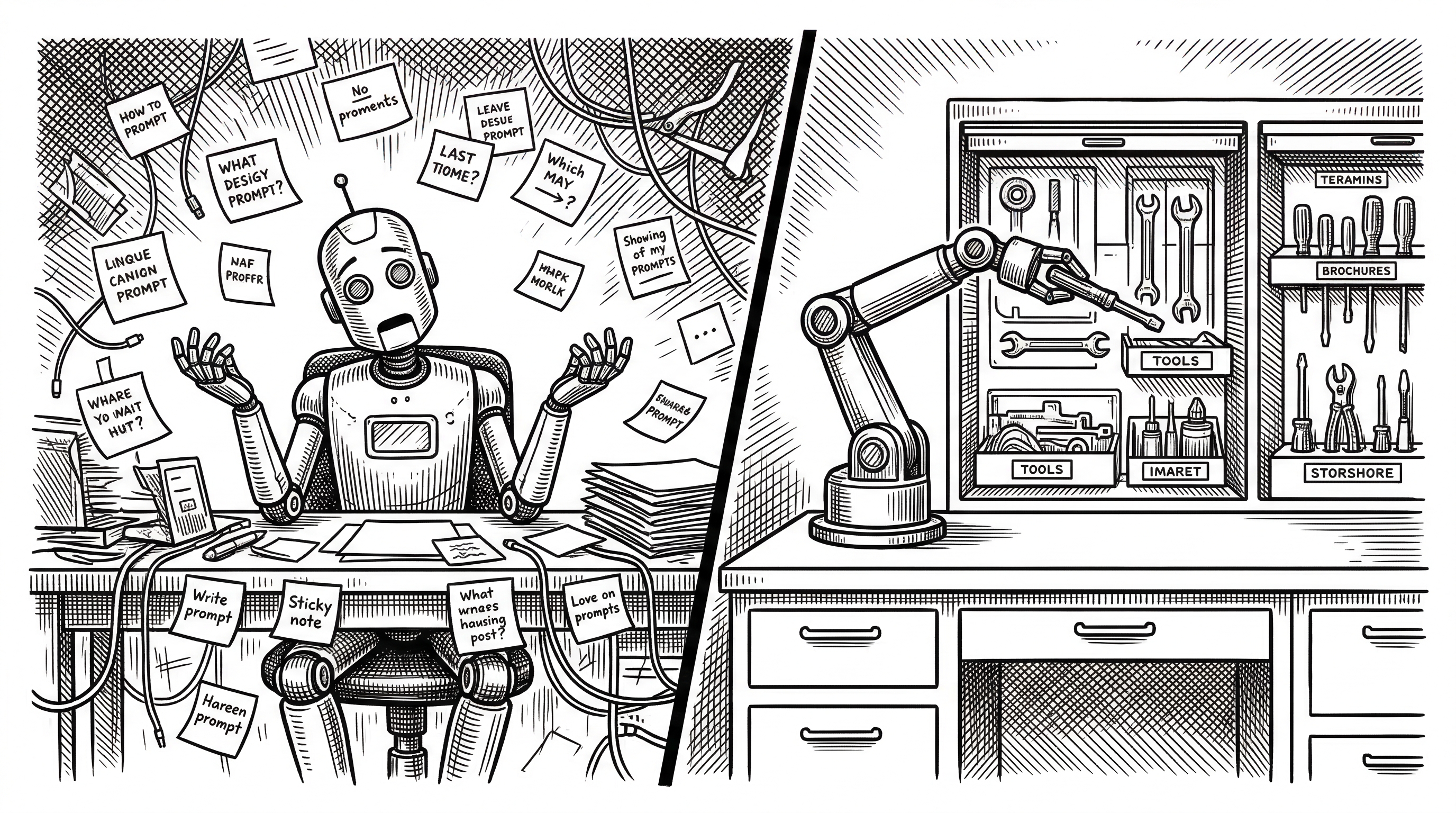

Every AI coding agent has a dirty secret. They are brilliant generalists but terrible specialists. Ask Claude Code to fine-tune a 7B parameter model and it will write you a plausible training script. But it will not know which GPU to pick for that model size. It will not set up Hub authentication correctly. It will not know when to use LoRA versus full fine-tuning. And it will definitely not know about the ephemeral nature of Hugging Face Jobs, where forgetting to push your model to the Hub means losing everything.

The gap between "can write Python" and "can run an ML workflow end-to-end" is enormous. It is the difference between a medical student who read a textbook and a surgeon who has done a thousand operations.

Hugging Face Skills bridges that gap. Not by making coding agents smarter, but by giving them the playbook that an experienced ML engineer carries in their head.

What Is a Skill, Really?

Strip away the marketing and a skill is just a folder. Inside that folder sits a SKILL.md file with some YAML frontmatter (name and description) followed by detailed instructions that a coding agent follows while the skill is active. Alongside that file you will find helper scripts, reference documents, and templates.

The model trainer skill, for example, includes ready-to-run Python scripts for SFT, DPO, and GRPO training, a cost estimation tool, a dataset inspector, and pages of guidance about hardware selection, authentication, and monitoring. When Claude Code loads this skill, it is not guessing. It is following a tested recipe.

```
skills/hugging-face-model-trainer/
  SKILL.md                    # Instructions + YAML metadata
  scripts/
    train_sft_example.py      # Production SFT template
    train_dpo_example.py      # DPO alignment template
    estimate_cost.py          # GPU cost calculator
    dataset_inspector.py      # Data validation
  references/
    training_methods.md       # Method selection guide
    unsloth.md                # Memory-efficient alternative
```

This structure is deceptively simple. The power is not in the format. It is in what Hugging Face chose to encode: the hard-won operational knowledge that separates a toy experiment from a production training run.
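To make the format concrete, here is a minimal sketch of how a tool might read the metadata block at the top of a SKILL.md file. The sample text, the description wording, and the `parse_frontmatter` helper are illustrative assumptions, not the repo's actual file or any agent's real loader. A real implementation would use a proper YAML parser.

```python
# Hypothetical SKILL.md content; the description is a placeholder.
SAMPLE_SKILL_MD = """\
---
name: hugging-face-model-trainer
description: Hypothetical description used only for this example.
---
# Instructions

Detailed guidance for the agent goes here.
"""


def parse_frontmatter(text: str) -> tuple[dict[str, str], str]:
    """Split a SKILL.md-style document into (metadata, body).

    Handles only flat `key: value` pairs, which is enough for the
    name and description fields. Nested YAML is not supported.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text  # no frontmatter block present
    try:
        end = lines.index("---", 1)  # position of the closing delimiter
    except ValueError:
        return {}, text  # unterminated block: treat everything as body
    metadata = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            metadata[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:])
    return metadata, body


metadata, body = parse_frontmatter(SAMPLE_SKILL_MD)
print(metadata["name"])
```

The split matters because the two halves are used differently: the short metadata is what an agent consults to decide whether a skill is relevant, while the body is only needed once the skill is active.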

The Cross-Platform Play

Here is where things get interesting. Anthropic calls them "skills." OpenAI calls them "agent skills." Google calls them "extensions." Cursor has its own plugin format. Four platforms, four formats, one massive fragmentation problem for anyone trying to package ML knowledge.

Hugging Face solved this with a single publish.sh script. Write your skill once as a folder with a SKILL.md file. Run the script. Out come manifests for every supported platform: .claude-plugin/marketplace.json for Claude Code, .agents/skills/ directories for Codex, gemini-extension.json for Gemini CLI, and .cursor-plugin/plugin.json plus .mcp.json for Cursor.
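The one-source, many-outputs idea can be summarized in a few lines. This sketch only records the output paths named above and checks which of them exist in a checkout. The `PLATFORM_MANIFESTS` table and the `missing_manifests` helper are illustrative names and say nothing about how publish.sh is actually implemented.

```python
from pathlib import Path

# Output locations as listed above, keyed by target agent.
PLATFORM_MANIFESTS = {
    "Claude Code": [".claude-plugin/marketplace.json"],
    "Codex": [".agents/skills"],
    "Gemini CLI": ["gemini-extension.json"],
    "Cursor": [".cursor-plugin/plugin.json", ".mcp.json"],
}


def missing_manifests(repo_root: str) -> dict[str, list[str]]:
    """Return, per platform, the expected paths that are absent from repo_root."""
    root = Path(repo_root)
    missing = {}
    for platform, paths in PLATFORM_MANIFESTS.items():
        absent = [p for p in paths if not (root / p).exists()]
        if absent:
            missing[platform] = absent
    return missing
```

An empty result would mean every platform's manifest is present, which is a cheap sanity check to run after a publish step.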

The repo also ships a fallback AGENTS.md file that bundles all skill instructions into one document. If your coding agent does not support any skill format at all, you can just paste in the instructions manually. Pragmatic and ugly, exactly the way infrastructure should be.
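Bundling is simple enough to sketch. The function below concatenates every SKILL.md under a skills directory into one document, one section per skill. The `bundle_skills` name and the `## <skill>` section layout are assumptions made for illustration; the repo's real AGENTS.md may be generated and structured differently.

```python
from pathlib import Path


def bundle_skills(skills_dir: str) -> str:
    """Concatenate every <skill>/SKILL.md under skills_dir into one document."""
    sections = []
    # Sort for a stable, reproducible ordering of sections.
    for skill_md in sorted(Path(skills_dir).glob("*/SKILL.md")):
        skill_name = skill_md.parent.name
        instructions = skill_md.read_text(encoding="utf-8").strip()
        sections.append(f"## {skill_name}\n\n{instructions}")
    return "\n\n".join(sections) + "\n"
```

The trade-off is visible in the code: every skill's full instructions land in the agent's context at once, whereas a native skill format lets the agent load only the one it needs.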

The adoption of the open Agent Skills standard was a shrewd move. Rather than inventing a proprietary Hugging Face format, the team built on the specification that Anthropic published in late 2025 and that 26 tools have since adopted. This means any new coding agent that supports the standard automatically gets access to Hugging Face's skills.

The Twelve Skills

The repo ships with twelve skills that cover the full lifecycle of working with the Hugging Face Hub. They are not demos. They are production workflows that Hugging Face staff use internally.

| Skill | What It Does | Why It Matters |
|---|---|---|
| hf-cli | Download models, upload files, manage repos, run compute jobs | The Swiss Army knife. Synced automatically from huggingface_hub. |
| model-trainer | SFT, DPO, GRPO fine-tuning on cloud GPUs via TRL | The flagship skill. Includes Unsloth for 60% less VRAM. |
| vision-trainer | Object detection (DETR, RTDETRv2) and image classification | Extends training beyond text to computer vision. |
| datasets | Create repos, define configs, stream row updates, SQL queries | Dataset creation without writing boilerplate. |
| dataset-viewer | Explore, query, extract data via REST API. Zero Python deps. | Quick data inspection before committing to training. |
| evaluation | Add eval results to model cards, run custom evals with vLLM | Standardized benchmarking and leaderboard integration. |
| jobs | Run Python scripts on HF infrastructure, manage scheduled jobs | Cloud GPU access without DevOps overhead. |
| trackio | Log metrics, real-time dashboards synced to HF Spaces | Experiment tracking that lives alongside your models. |
| paper-publisher | Create paper pages, link to models, claim authorship | Research publishing integrated into the ML workflow. |
| gradio | Build web UIs, components, chatbots | Demo creation for any model on the Hub. |
| transformers.js | ML inference in JavaScript/TypeScript with WebGPU/WASM | Browser-side ML without a Python backend. |
| tool-builder | Build reusable scripts for HF API operations | Chain API calls and automate repeated tasks. |

The "We Got Claude to Fine-Tune a Model" Moment

The blog post that put Skills on the map was Hugging Face's December 2025 demo where they used Claude Code with the model trainer skill to fine-tune an open-source LLM end-to-end. Not just generate a training script. Actually submit the job to cloud GPUs, monitor progress with Trackio, and push the finished model to the Hub.

"We gave Claude the ability to fine-tune language models. Not just write training scripts, but to actually submit jobs to cloud GPUs, monitor progress, and push finished models to the Hugging Face Hub."

The skill taught Claude everything a human ML engineer would know: which GPU to pick for a given model size, how to configure Hub authentication, when to use LoRA versus full fine-tuning, and critically, how to handle the ephemeral nature of cloud training where results vanish if you do not push them.
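The hardware and LoRA-versus-full decisions largely come down to memory arithmetic. The sketch below uses a common rule of thumb, not the skill's own estimator: about 2 bytes per parameter to hold bf16 weights, and about 16 bytes per parameter for full fine-tuning with Adam in mixed precision (bf16 weights and gradients plus fp32 master weights and two optimizer moments). Activations, adapter parameters, and framework overhead are ignored, so real requirements are higher.

```python
# Rule-of-thumb per-parameter costs in bytes (assumptions, see text above).
BYTES_BF16_WEIGHTS = 2          # bf16 weights only
BYTES_FULL_FINETUNE_ADAM = 16   # 2 weights + 2 gradients + 12 fp32 optimizer state


def memory_gb(params_billion: float, bytes_per_param: int) -> float:
    """Approximate memory in GB (10^9 bytes) for a given per-parameter cost."""
    # Billions of parameters times bytes per parameter gives GB directly.
    return params_billion * bytes_per_param


weights_only = memory_gb(7, BYTES_BF16_WEIGHTS)
full_finetune = memory_gb(7, BYTES_FULL_FINETUNE_ADAM)
print(f"7B weights in bf16: {weights_only:.0f} GB")
print(f"7B full fine-tune with Adam: {full_finetune:.0f} GB")
```

On this estimate, the frozen weights of a 7B model take roughly 14 GB, while a full fine-tune needs on the order of 112 GB before activations. That gap is why an adapter method such as LoRA, which keeps the base weights frozen, is often the practical choice on a single GPU.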

Sionic AI followed up with a post about running over 1,000 ML experiments per day using Claude Code Skills. Researchers used the framework to write training scripts, debug CUDA errors, and search hyperparameters overnight. The skill system turned Claude from a code assistant into an autonomous ML research partner.

Under the Hood: MCP and the Live API Layer

Skills are not just static instructions. The repo includes an MCP server configuration that connects coding agents to the Hugging Face Hub API in real time. When Claude Code or Cursor activates a skill, it can call live tools such as hf_jobs() to submit GPU training runs, hf_whoami() to check authentication, or hf_doc_search() to query documentation.

This is the critical piece that separates Skills from a prompt pasted into the context window. The instructions tell the agent what to do. The MCP server gives it the hands to do it. A model training skill can instruct Claude to submit a job, and the MCP server provides the actual API endpoint to make it happen.

```json
// .mcp.json
{
  "mcpServers": {
    "huggingface-skills": {
      "url": "https://huggingface.co/mcp?login"
    }
  }
}
```

The ?login parameter triggers OAuth authentication, so the coding agent gets scoped access to your Hugging Face account. No API keys pasted into chat. No tokens hardcoded in scripts. Just a secure handshake between your agent and the Hub.
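For readers who want to inspect such a file programmatically, here is a small sketch that parses the configuration above and checks whether a server URL carries the login flag. The helper names `server_urls` and `requests_login` are made up for this example, and the filename comment is omitted from the sample because strict JSON parsers reject comments.

```python
import json
from urllib.parse import urlsplit

# Same configuration as above, without the filename comment.
MCP_CONFIG = """
{
  "mcpServers": {
    "huggingface-skills": {
      "url": "https://huggingface.co/mcp?login"
    }
  }
}
"""


def server_urls(config_text: str) -> dict[str, str]:
    """Map each configured MCP server name to its URL."""
    config = json.loads(config_text)
    servers = config.get("mcpServers", {})
    return {name: entry["url"] for name, entry in servers.items()}


def requests_login(url: str) -> bool:
    """True when the URL's query string contains the bare `login` flag."""
    return "login" in urlsplit(url).query.split("&")


for name, url in server_urls(MCP_CONFIG).items():
    print(name, url, requests_login(url))
```

Note that the file contains only a URL. No secret is stored in it, which is consistent with the OAuth flow described above.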

Why Not Just Write Better Prompts?

Fair question. If skills are "just folders with markdown," why not maintain your own prompt library? Three reasons make the structured approach win.

First, discoverability. When you install the Hugging Face skills marketplace in Claude Code, the agent knows what is available and when to activate each skill based on the YAML metadata. You say "train a model" and the agent loads the right instructions automatically. A prompt library requires you to remember what you have and paste it in at the right moment.
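A toy version of that matching step shows why the metadata matters. In practice the agent's own model reads the descriptions and decides. The word-overlap scoring below is a deliberately crude stand-in, and the `SKILLS` descriptions and `pick_skill` helper are hypothetical, not taken from the repo.

```python
import re
from typing import Optional

# Hypothetical metadata; real descriptions live in each skill's SKILL.md.
SKILLS = {
    "model-trainer": "Train or fine-tune language models with SFT, DPO, or GRPO on cloud GPUs",
    "datasets": "Create dataset repos, define configs, and query rows",
    "gradio": "Build web UIs, components, and chatbots",
}


def tokens(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def pick_skill(request: str) -> Optional[str]:
    """Return the skill whose description shares the most words with the request."""
    wanted = tokens(request)
    scores = {name: len(wanted & tokens(desc)) for name, desc in SKILLS.items()}
    best = max(scores, key=scores.get)
    # No overlap at all means no skill is a plausible match.
    return best if scores[best] > 0 else None


print(pick_skill("train a model"))
```

Even this naive matcher only works because each skill declares what it is for. A folder of untitled prompts gives the agent nothing to match against.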

Second, maintenance. The hf-cli skill is automatically synced from the huggingface_hub repository via CI. When the Hub API changes, the skill updates within hours. Your custom prompts rot the moment the API moves.

Third, scripts. Skills include executable code. The model trainer skill ships with PEP 723 inline-dependency scripts that run with a simple uv run. Your prompt library cannot include a cost estimation calculator that actually runs.
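PEP 723 lets a single Python file declare its own requirements in a comment block, which is what makes such scripts runnable without a separate setup step. The following is a sketch of the pattern, not the skill's actual cost estimator. The hardware names and hourly rates are made-up placeholders, not Hugging Face pricing.

```python
# /// script
# requires-python = ">=3.9"
# dependencies = []
# ///
"""Toy cost estimator illustrating PEP 723 inline metadata."""

# Hypothetical hardware names and USD-per-hour rates, for illustration only.
HOURLY_RATE_USD = {"small-gpu": 1.00, "large-gpu": 4.00}


def estimate_cost(hardware: str, hours: float) -> float:
    """Return the estimated job cost in USD for the given runtime."""
    return round(HOURLY_RATE_USD[hardware] * hours, 2)


if __name__ == "__main__":
    print(f"${estimate_cost('large-gpu', 2.5):.2f}")
```

Saved under a filename of your choice, a script like this can be started with `uv run <file>`. uv reads the comment block and prepares an environment with any listed dependencies before executing the code.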

The Competitive Landscape

Hugging Face is not the only player in agent skills. The VoltAgent awesome-agent-skills repo aggregates over 500 community skills and has 6,900+ stars. Vercel runs Skills.sh, a directory and leaderboard for skill packages. The Antigravity project bundles 868+ skills installable with a single npx command.

But Hugging Face has a structural advantage none of these can match: it owns the platform the skills target. When the model trainer skill calls hf_jobs(), that is a first-party integration. When it references Trackio dashboards synced to HF Spaces, that is an owned surface. Every skill in the repo drives usage of Hugging Face infrastructure.

| Project | Skills Count | Stars | Strength |
|---|---|---|---|
| huggingface/skills | 12 (curated) | 9.2k | First-party ML platform integration, MCP server |
| VoltAgent awesome-agent-skills | 500+ (community) | 6.9k | Breadth and community contributions |
| Antigravity | 868+ (bundled) | N/A | One-command install across 10+ agents |
| Skills.sh (Vercel) | Directory | N/A | Discovery, telemetry-based rankings |

Who Built This

The repo is primarily the work of burtenshaw (101 commits), with significant contributions from evalstate (37 commits) and hanouticelina (12 commits). The project launched in November 2025 and has maintained a rapid pace, with commits landing as recently as March 2026.

The contributor list also includes names from the broader Hugging Face ecosystem: abidlabs (Gradio creator), Wauplin (huggingface_hub maintainer), and cfahlgren1 (HF Spaces team). This is not a side project. It has the fingerprints of core Hugging Face infrastructure across the company.

The Business Logic

Hugging Face is giving away the instructions to drive usage of the paid infrastructure. Every model training skill invocation means a GPU job on Hugging Face compute. Every dataset skill invocation means data stored on the Hub. Every experiment tracking skill invocation means a Trackio dashboard on HF Spaces.

The Pro, Team, and Enterprise plans required for Jobs access are the real monetization surface. Skills lower the barrier so dramatically that an engineer who would have spent a day setting up training infrastructure now burns through GPU credits in minutes. It is the AWS playbook applied to ML: make the on-ramp free, charge for the highway.

"Use the HF model trainer skill to estimate the GPU memory needed for a 70B model run."

What Is Missing

The repo has clear gaps. There is no skill for inference deployment. You can train a model and push it to the Hub, but deploying it as an Inference Endpoint requires manual configuration. Given that deployment is the step where most ML projects stall, this feels like a notable omission.

There is also no skill for Spaces deployment beyond Gradio. If you want to deploy a Next.js or Streamlit frontend, you are on your own. The evaluation skill supports vLLM and lighteval but not the growing ecosystem of evaluation frameworks like EleutherAI's lm-evaluation-harness.

Community contributions are light. Most of the 555 forks have not resulted in pull requests. A skill authoring guide exists, but the barrier to contributing a production-quality skill is high when the existing ones set such a polished standard.

Where This Goes

The trajectory is clear. Skills are becoming the distribution channel for ML platform features. When Hugging Face ships a new product, the corresponding skill will be how most developers first experience it. The hf-cli skill is already auto-synced from the huggingface_hub repo, meaning CLI improvements propagate to every coding agent within hours.

The Agent Skills standard is still young, but 26 tools have adopted it. If the standard holds, we are looking at a future where platform vendors compete not just on features but on the quality of their skill packages. Hugging Face got there first with the most valuable vertical: machine learning.