OpenSandbox: Alibaba Open-Sources the Infrastructure That Makes AI Agents Safe to Run

Multi-language SDKs, unified sandbox APIs, and Docker/Kubernetes runtimes for coding agents, browser automation, evaluation, and RL training. One platform, every language, any scale.

- OpenSandbox is the first open-source sandbox platform to ship production-ready SDKs for five languages (Python, TypeScript, Java, Kotlin, and C#/.NET) with a unified API contract, letting teams adopt it regardless of their stack.

- A clean four-layer architecture separating SDKs, OpenAPI specs, runtime orchestration, and sandbox instances means you can swap Docker for Kubernetes without changing a line of application code.

- Strong isolation via gVisor, Kata Containers, and Firecracker microVMs gives enterprises the security guarantees they need to let AI agents execute arbitrary code in production.

- Alibaba open-sourced the same internal infrastructure they use for large-scale AI workloads, making self-hosted, vendor-lock-in-free sandboxing viable for the first time.

The Agent Execution Problem Nobody Solved Cleanly

Every AI agent needs a place to run code. Whether it is a coding assistant generating Python, a browser agent scraping the web, or an RL trainer running thousands of episodes, the agent needs an isolated environment where it can execute without risking the host system.

Until now, teams had two options. Pay per-second fees to a proprietary sandbox service like E2B or Daytona. Or cobble together Docker scripts, custom APIs, and ad-hoc security. The first approach creates vendor lock-in and recurring costs. The second creates maintenance nightmares and security gaps.

On March 1, 2026, Alibaba dropped a third option: OpenSandbox. It hit 3,845 GitHub stars in 72 hours. Two weeks later it crossed 8,300. The speed of adoption tells you how badly the community wanted this.

What OpenSandbox Actually Is

OpenSandbox is a general-purpose sandbox platform for AI applications. That sentence from the README undersells it. What Alibaba actually shipped is a complete infrastructure layer for managing the lifecycle of isolated execution environments at any scale.

You install a server. You call an SDK method. A container spins up with a Go-based execution daemon injected inside. You run commands, write files, execute code across multiple languages, and tear it down when you are done. The whole lifecycle is managed through clean async APIs.

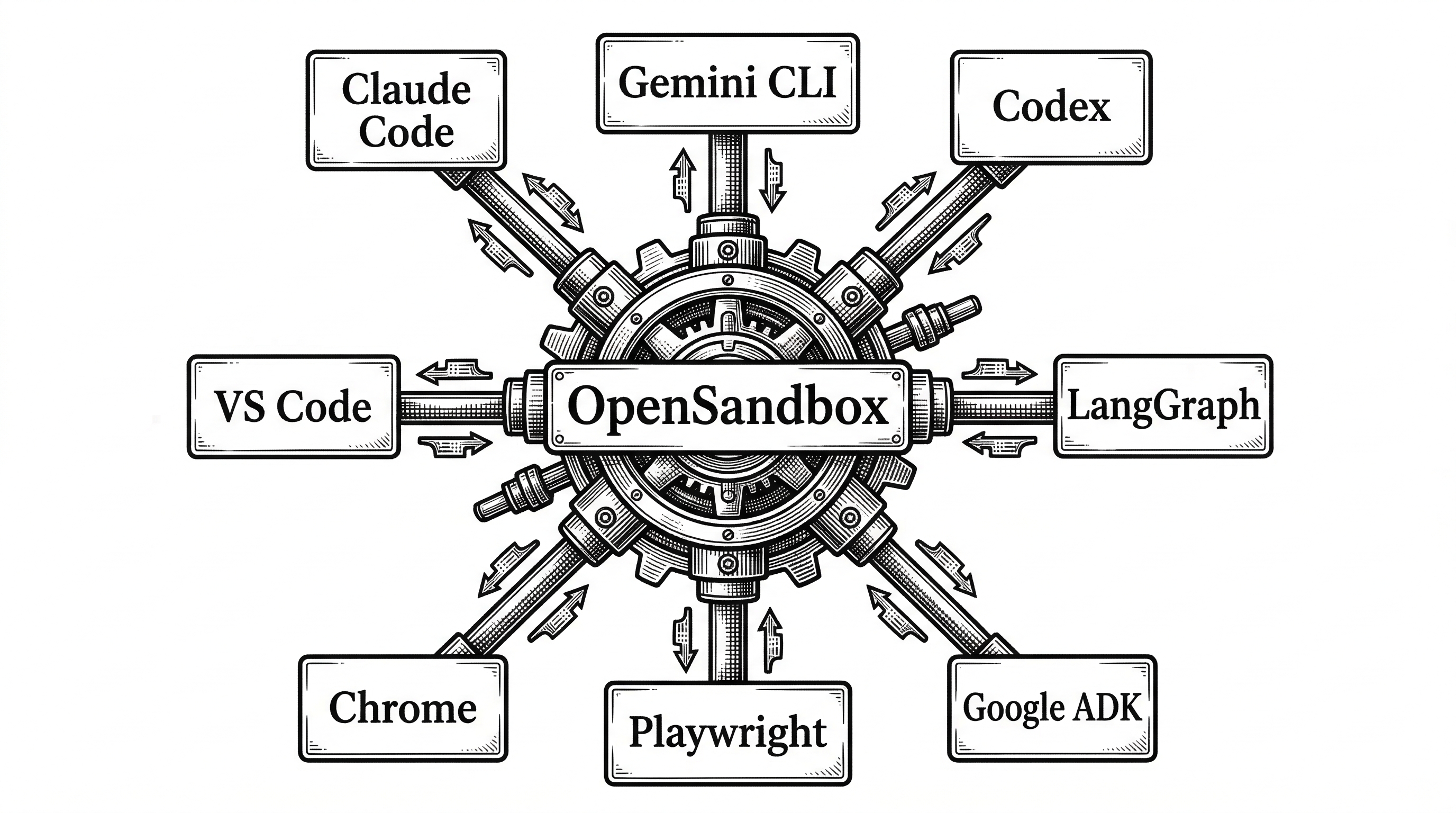

The platform covers four primary scenarios: coding agents (Claude Code, Gemini CLI, Codex), browser and GUI automation (Chromium, Playwright, VNC desktops), AI code execution (stateful Jupyter-backed interpreters), and reinforcement learning training (isolated environments with checkpoint management).

The Four-Layer Architecture

OpenSandbox's architecture is the most interesting thing about it. It is not a monolith. It is four cleanly separated layers, each with a distinct responsibility. This separation is what makes it possible to swap runtimes, add SDK languages, and scale independently.

Layer 1: Multi-Language SDKs

SDKs ship today for five languages: Python, TypeScript, Java, Kotlin, and C#/.NET, with Go on the roadmap. Every SDK provides the same four core abstractions: Sandbox (lifecycle), Filesystem (file CRUD), Commands (shell execution), and CodeInterpreter (stateful multi-language code execution).

The Python SDK is the most mature, with full async/await support and a clean API. Creating a sandbox, running code, and tearing it down fits in a short script:

    import asyncio

    from code_interpreter import CodeInterpreter, SupportedLanguage
    from opensandbox import Sandbox


    async def main() -> None:
        # Start a sandbox from the code-interpreter image.
        sandbox = await Sandbox.create(
            "opensandbox/code-interpreter:v1.0.2",
            entrypoint=["/opt/opensandbox/code-interpreter.sh"],
            env={"PYTHON_VERSION": "3.11"},
        )

        async with sandbox:
            # Run a shell command inside the sandbox.
            result = await sandbox.commands.run("echo 'Hello OpenSandbox!'")

            # Run Python through the stateful code interpreter.
            interpreter = await CodeInterpreter.create(sandbox)
            output = await interpreter.codes.run(
                "import sys; print(sys.version)",
                language=SupportedLanguage.PYTHON,
            )

            # Tear the sandbox down explicitly.
            await sandbox.kill()


    asyncio.run(main())

The consistency across languages is deliberate. A team with a Python backend and a TypeScript frontend can use the same mental model for both. The SDKs are published to PyPI, npm, Maven (Java and Kotlin), and NuGet.

Layer 2: OpenAPI Specifications

This is the layer that makes OpenSandbox more than a tool. It is a protocol. Two OpenAPI specs define the entire contract between SDKs and runtimes.

The Sandbox Lifecycle Spec covers creating, listing, pausing, resuming, renewing, and destroying sandboxes. The Sandbox Execution Spec (implemented by the execd daemon) covers commands, filesystem operations, code interpretation, and metrics streaming.

Because the specs are formal, anyone can implement a new runtime or SDK without touching existing code. This is the same approach that made Kubernetes extensible: define the contract, let implementations compete.
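A lifecycle contract like this implies a small state machine that every runtime has to honor. The sketch below is one way to picture it. The state names and the transition table are assumptions made for illustration; they are not copied from the spec.

```python
from enum import Enum


class State(Enum):
    RUNNING = "running"
    PAUSED = "paused"
    TERMINATED = "terminated"


# Hypothetical table: operation -> {state it applies to: resulting state}.
TRANSITIONS = {
    "pause": {State.RUNNING: State.PAUSED},
    "resume": {State.PAUSED: State.RUNNING},
    "renew": {State.RUNNING: State.RUNNING},
    "destroy": {State.RUNNING: State.TERMINATED, State.PAUSED: State.TERMINATED},
}


def apply(state: State, operation: str) -> State:
    """Return the next state, or raise if the operation is not allowed."""
    allowed = TRANSITIONS.get(operation)
    if allowed is None:
        raise ValueError(f"unknown operation: {operation}")
    if state not in allowed:
        raise ValueError(f"cannot {operation} a sandbox that is {state.value}")
    return allowed[state]
```

Any runtime that answers these operations consistently looks the same to an SDK, which is the point of putting the contract in a spec rather than in code.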

Layer 3: Runtime Orchestration

The server is a Python FastAPI application that implements the lifecycle spec. It supports two runtime backends: Docker for local development and Kubernetes for production scale.

The Docker runtime offers two networking modes: host mode for single-instance simplicity, and bridge mode for isolated networking with HTTP routing. It handles image pulling, container creation, execd injection, resource quota enforcement, and automatic cleanup on TTL expiration.
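TTL-based cleanup is easy to reason about as a table of deadlines plus a periodic sweep. The following is a minimal sketch of that idea, not the server's implementation. The class and method names are hypothetical, and the clock is injectable so the behavior can be checked without waiting.

```python
import time
from typing import Callable


class TtlRegistry:
    """Tracks an expiry deadline per sandbox id (hypothetical helper)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._deadlines: dict[str, float] = {}

    def register(self, sandbox_id: str, ttl_seconds: float) -> None:
        self._deadlines[sandbox_id] = self._clock() + ttl_seconds

    def renew(self, sandbox_id: str, ttl_seconds: float) -> None:
        """Push the deadline out, counting from now."""
        if sandbox_id not in self._deadlines:
            raise KeyError(sandbox_id)
        self._deadlines[sandbox_id] = self._clock() + ttl_seconds

    def reap(self) -> list[str]:
        """Remove and return the ids whose deadline has passed."""
        now = self._clock()
        expired = [sid for sid, deadline in self._deadlines.items() if deadline <= now]
        for sid in expired:
            del self._deadlines[sid]
        return expired
```

A real runtime would call something like `reap()` on a timer and stop the matching containers. Renewing simply moves the deadline, which is why a busy sandbox can outlive its original TTL.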

The Kubernetes runtime is where things get serious. It ships as a full Go-based operator with Custom Resource Definitions, scheduling, and auto-scaling. This is not a wrapper around kubectl. It is a proper controller-runtime operator that manages sandbox pods as first-class Kubernetes resources.

"OpenSandbox provides multi-language SDKs, unified sandbox APIs, and Docker/Kubernetes runtimes. It scales from individual developer laptops to enterprise-grade clusters, eliminating configuration drift as workloads move from development to production."

Layer 4: Sandbox Instances

Each sandbox is a container with the Go-based execd daemon injected at startup. This daemon is the bridge between the outside world and the sandbox interior. It exposes a local HTTP API for commands, file operations, code execution, and metrics.

For code interpretation, execd talks to Jupyter kernels running inside the container. This gives you stateful execution: variables persist across code blocks within a session, just like a notebook. The interpreter supports Python, JavaScript, Java, Go, and Bash out of the box.
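Stateful execution is simpler than it sounds: every code block runs against the same namespace. This toy session shows the principle using plain `exec`. It is a stand-in for a Jupyter kernel, not how execd talks to one, and the `Session` class is hypothetical.

```python
import contextlib
import io


class Session:
    """Toy stand-in for a kernel session: one namespace shared by every run."""

    def __init__(self) -> None:
        self._namespace: dict = {}

    def run(self, code: str) -> str:
        """Execute a code block and return whatever it printed."""
        buffer = io.StringIO()
        with contextlib.redirect_stdout(buffer):
            exec(code, self._namespace)
        return buffer.getvalue()


session = Session()
session.run("total = 40")
session.run("total += 2")
print(session.run("print(total)"), end="")  # the third block still sees `total`
```

A fresh `Session` starts with an empty namespace, which is the notebook equivalent of restarting the kernel.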

Security: The Make-or-Break Feature

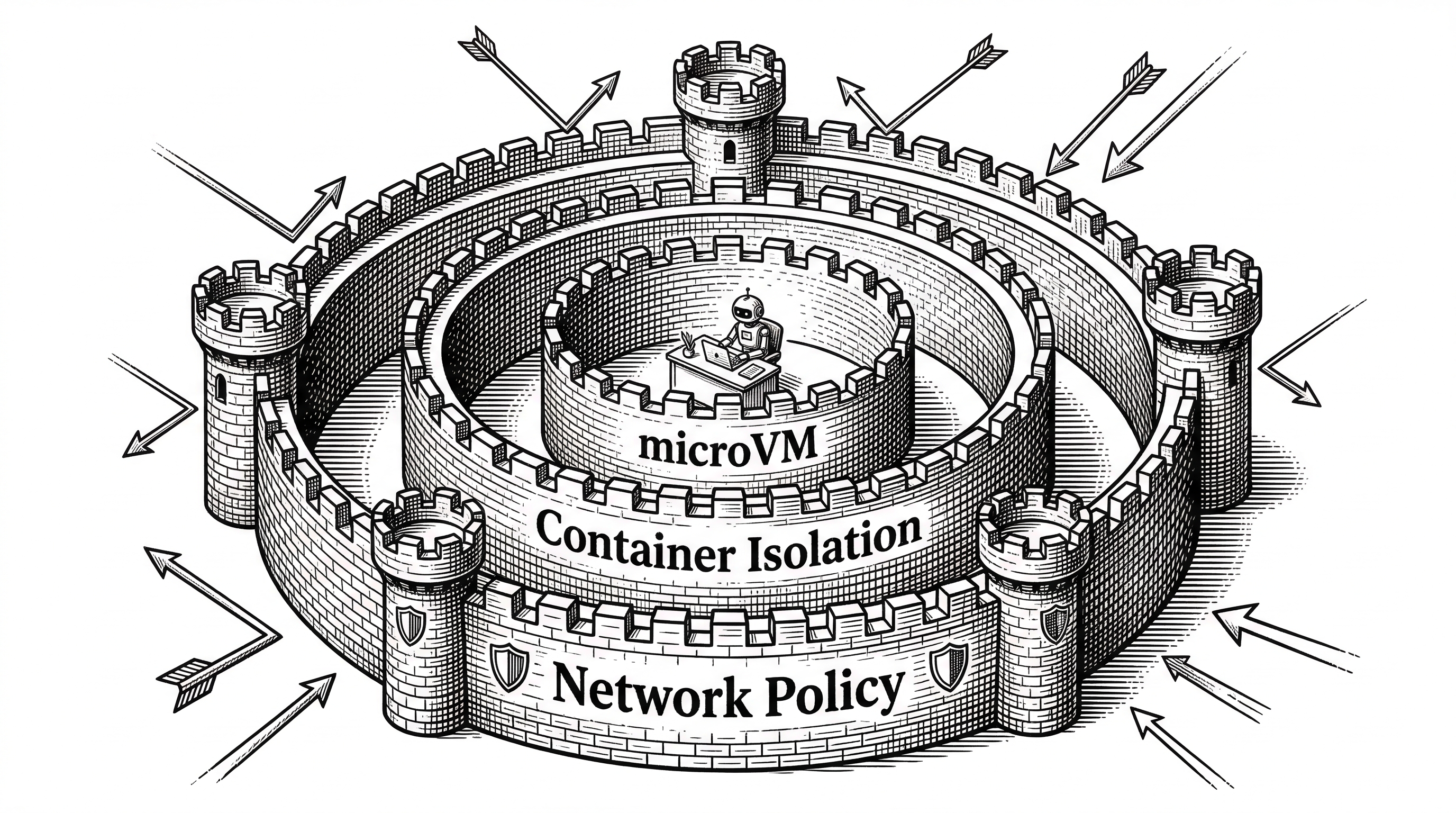

Letting an AI agent execute arbitrary code is inherently dangerous. OpenSandbox addresses this at multiple levels, and the approach is more thorough than most alternatives.

At the container level, it supports three secure runtime technologies: gVisor (Google's application kernel), Kata Containers (lightweight VMs), and Firecracker microVMs (the same technology behind AWS Lambda). Each provides a different tradeoff between performance and isolation strength.

At the network level, the ingress gateway controls inbound traffic with multiple routing strategies. The egress controller enforces per-sandbox outbound network policies. You can lock down which external services each sandbox can reach.

At the resource level, CPU, memory, and GPU quotas are enforced per sandbox. TTL-based automatic expiration prevents runaway containers. API key authentication protects the management plane.

This layered security model is what separates OpenSandbox from "just run it in Docker" approaches. Enterprises reviewing agent platforms will look for exactly these guarantees.
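To make the network-level piece concrete, here is a minimal sketch of a per-sandbox egress allowlist keyed on hostnames. The `EgressPolicy` class and its wildcard syntax are assumptions for illustration. The real egress controller's rule format is not described in this article.

```python
from fnmatch import fnmatchcase


class EgressPolicy:
    """Allow outbound traffic only to hostnames matching an allowlist."""

    def __init__(self, allowed_patterns: list[str]) -> None:
        # Normalize case and drop the trailing dot of fully qualified names.
        self._patterns = [p.lower().rstrip(".") for p in allowed_patterns]

    def allows(self, hostname: str) -> bool:
        host = hostname.lower().rstrip(".")
        return any(fnmatchcase(host, pattern) for pattern in self._patterns)


# One policy object per sandbox; this one only permits package downloads.
policy = EgressPolicy(["pypi.org", "*.pythonhosted.org"])
```

With this policy, `policy.allows("files.pythonhosted.org")` is true while `policy.allows("evilpypi.org")` is false, because a pattern without a wildcard has to match the whole hostname.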

The Competitive Landscape

OpenSandbox enters a market that already has established players. Understanding where it fits requires looking at the tradeoffs each platform makes.

| Platform | Hosting | Isolation | SDKs | Pricing | Best For |
|---|---|---|---|---|---|
| OpenSandbox | Self-hosted | gVisor / Kata / Firecracker | Python, TS, Java, C#, Go* | Free (Apache 2.0) | K8s-scale self-hosted deployments |
| E2B | Managed cloud | Firecracker microVMs | Python, TS | Per-second billing | Ephemeral execution, security-first |
| Daytona | Managed cloud | Docker containers | Python, TS | Per-second billing | Desktop automation, fast cold starts |
| Modal | Managed cloud | Containers | Python | Per-second billing | GPU workloads, massive scale |

Go* = on the roadmap, not yet shipped.

The key differentiator is ownership. E2B, Daytona, and Modal are managed services. You pay per second, and your workloads run on their infrastructure. OpenSandbox runs on your infrastructure, under your control, with no per-execution fees.

For startups iterating fast, a managed service might be simpler. For enterprises with compliance requirements, data residency constraints, or high-volume workloads where per-second billing adds up, self-hosted is the only viable path. OpenSandbox is the first production-ready open-source option for that path.

The multi-language SDK story also matters. E2B and Daytona support Python and TypeScript. OpenSandbox supports five languages. If your backend is Java or C#, you previously had no first-class sandbox SDK. Now you do.

Agent Integrations: The Ecosystem Play

A sandbox platform is only useful if agents can actually run inside it. OpenSandbox ships with pre-built integrations for the major coding agents and orchestration frameworks.

The examples directory reads like a who's-who of AI coding tools: Claude Code, Gemini CLI, OpenAI Codex CLI, Kimi CLI (Moonshot AI), and iFLow CLI all have dedicated sandbox configurations. Each includes a Dockerfile, entrypoint scripts, and documentation for running the agent inside OpenSandbox.

On the orchestration side, LangGraph and Google ADK integrations show how to wire sandbox operations into agent workflows. The LangGraph example implements a state-machine with fallback retry. The Google ADK example uses OpenSandbox as a tool provider.
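The retry-then-fallback control flow is worth seeing on its own. This sketch is not the LangGraph example from the repository and does not use LangGraph. It is a plain asyncio version of the same idea, with hypothetical `primary` and `fallback` callables standing in for "run in the sandbox" and "do something safer".

```python
import asyncio
from typing import Awaitable, Callable

Runner = Callable[[str], Awaitable[str]]


async def run_with_fallback(
    code: str,
    primary: Runner,
    fallback: Runner,
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> str:
    """Try `primary` up to `max_attempts` times, then hand off to `fallback`."""
    for attempt in range(max_attempts):
        try:
            return await primary(code)
        except Exception:
            # Exponential backoff between attempts; skipped after the last one.
            if attempt < max_attempts - 1:
                await asyncio.sleep(base_delay * 2 ** attempt)
    return await fallback(code)
```

In a graph-based framework each branch here would become a node or an edge, but the decision logic stays the same.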

Browser automation gets first-class support too. The Chromium sandbox includes VNC access for debugging and DevTools protocol access for programmatic control. The Playwright example shows headless scraping and testing. A full desktop environment sandbox provides VNC access to a complete Linux desktop.

The Protocol Bet

The most forward-looking aspect of OpenSandbox is not the code. It is the spec layer.

By publishing formal OpenAPI specifications for both lifecycle management and sandbox execution, Alibaba is betting on a protocol-first approach. Anyone can build a compatible runtime or SDK without forking the project. The Kubernetes SIG already has an agent-sandbox integration based on the OpenSandbox protocol.

This mirrors how the Container Runtime Interface (CRI) made Kubernetes runtime-agnostic, or how the Language Server Protocol (LSP) unified editor tooling. If the OpenSandbox protocol gains adoption, it could become the standard interface between AI agents and their execution environments.

The Enhancement Proposals (OSEPs) show where this is heading. OSEP-0001 defines FQDN-based egress control. OSEP-0003 adds persistent volume support. OSEP-0005 introduces client-side sandbox pools for sub-millisecond provisioning. OSEP-0007 proposes a fast sandbox runtime. These are not wishlist items. They are formal proposals with specifications and implementation plans.
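The client-side pool idea is the easiest of these to picture: create sandboxes ahead of time so that acquiring one is a queue pop instead of a container start. Below is a minimal sketch under that assumption. `WarmPool` and its methods are hypothetical names, not the design in OSEP-0005.

```python
import asyncio
from typing import Awaitable, Callable, Generic, TypeVar

T = TypeVar("T")


class WarmPool(Generic[T]):
    """Keeps pre-created sandboxes ready so acquire() rarely has to wait."""

    def __init__(self, factory: Callable[[], Awaitable[T]], size: int) -> None:
        self._factory = factory
        self._size = size
        self._ready: asyncio.Queue[T] = asyncio.Queue()

    async def fill(self) -> None:
        """Create sandboxes concurrently until `size` are waiting."""
        missing = self._size - self._ready.qsize()
        created = await asyncio.gather(*(self._factory() for _ in range(missing)))
        for sandbox in created:
            self._ready.put_nowait(sandbox)

    async def acquire(self) -> T:
        """Hand out a warm sandbox if one is ready, else create one on demand."""
        try:
            return self._ready.get_nowait()
        except asyncio.QueueEmpty:
            return await self._factory()
```

The cost moves rather than disappears: someone still pays for idle sandboxes, so pool size becomes a tuning knob between latency and spend.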

Getting Started: Five Minutes to Your First Sandbox

The installation story is surprisingly clean for an infrastructure project. You need Docker and Python 3.10+, plus uv for the install commands below. That is it.

    # Install the server
    uv pip install opensandbox-server

    # Initialize configuration
    opensandbox-server init-config ~/.sandbox.toml --example docker

    # Start the server
    opensandbox-server

Then install the SDK and code interpreter:

    uv pip install opensandbox-code-interpreter

From there you can create sandboxes, run commands, write files, and execute code using the async Python API shown earlier. The server manages container lifecycle automatically, including TTL expiration and cleanup.

For production, you swap the Docker runtime for Kubernetes by changing the configuration. The SDK code stays identical. This zero-code migration path from local development to production cluster is one of the most practical features of the architecture.
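The reason the swap costs no code is that the application only ever sees an interface. This in-process sketch shows the pattern. In OpenSandbox the boundary is the server's HTTP API rather than a Python class, and the `runtime.type` config key used here is a made-up placeholder, not the server's real setting.

```python
from typing import Protocol


class Runtime(Protocol):
    def create(self, image: str) -> str: ...


class DockerRuntime:
    def create(self, image: str) -> str:
        return f"docker container from {image}"


class KubernetesRuntime:
    def create(self, image: str) -> str:
        return f"kubernetes pod from {image}"


RUNTIMES = {"docker": DockerRuntime, "kubernetes": KubernetesRuntime}


def load_runtime(config: dict) -> Runtime:
    """Pick a backend from configuration (the key name is hypothetical)."""
    kind = config["runtime"]["type"]
    if kind not in RUNTIMES:
        raise ValueError(f"unsupported runtime: {kind}")
    return RUNTIMES[kind]()


def application_code(runtime: Runtime) -> str:
    # Identical no matter which backend the configuration selected.
    return runtime.create("opensandbox/code-interpreter:v1.0.2")
```

Only `load_runtime` knows which backend exists. Everything downstream of it is the part that "stays identical".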

Inside the Codebase

The repository is well-organized for a project of this scope. At roughly 4 million lines across the main languages (Python at 2M, Go at 1M, C# at 365k, TypeScript at 280k, Kotlin at 278k, Java at 124k), it is a substantial codebase.

The project structure maps directly to the architecture layers:

    sdks/          # Multi-language SDKs (Python, Java/Kotlin, TS, C#)
    specs/         # OpenAPI specifications
    server/        # FastAPI lifecycle server
    kubernetes/    # Go-based K8s operator with CRDs
    components/
      execd/       # Go execution daemon injected into sandboxes
      ingress/     # Traffic ingress proxy
      egress/      # Network egress control
    sandboxes/     # Container image definitions
    examples/      # Integration examples
    oseps/         # Enhancement proposals
    tests/         # Cross-component E2E tests

The choice of Go for the execution daemon (execd) and Kubernetes operator makes sense. These are performance-critical, long-running components where Go's concurrency model and small binary size pay off. The server is Python/FastAPI because it is primarily an API gateway where development velocity matters more than raw throughput.

The test suite includes real end-to-end tests that spin up actual containers. The CI badge on the README confirms these run on every push to main. For infrastructure software, this level of testing discipline is essential.

What Is Missing

OpenSandbox is two weeks old at time of writing. Some gaps are expected.

There is no managed hosting option. If you want OpenSandbox, you run it yourself. For teams without Kubernetes expertise, the production deployment story is still incomplete. The roadmap promises a deployment guide, but it is not here yet.

The Go SDK is on the roadmap but not shipped. Given that the Kubernetes operator is written in Go, this is a notable absence. Teams building Go-based agent systems will need to use the REST API directly.

Persistent volumes are proposed in OSEP-0003 but not implemented. This means sandbox state is ephemeral. For use cases like long-running development environments, you would need to save and restore state manually.

Cold start times are not prominently benchmarked. Daytona advertises sub-90ms cold starts. E2B's Firecracker boots in under a second. OpenSandbox's Docker and Kubernetes runtimes will have different performance profiles, and the docs do not quantify them yet.
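Until official numbers exist, measuring cold starts on your own hardware is straightforward. Here is a minimal timing harness, assuming you supply your own `create` and `destroy` coroutines (both hypothetical parameters, for example thin wrappers around the SDK calls shown earlier).

```python
import asyncio
import math
import statistics
import time
from typing import Any, Awaitable, Callable


async def measure_cold_starts(
    create: Callable[[], Awaitable[Any]],
    destroy: Callable[[Any], Awaitable[None]],
    runs: int = 20,
) -> dict[str, float]:
    """Time `create` one call at a time and summarize latency in milliseconds."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    samples: list[float] = []
    for _ in range(runs):
        started = time.perf_counter()
        sandbox = await create()
        samples.append((time.perf_counter() - started) * 1000)
        await destroy(sandbox)  # teardown is deliberately left out of the timing
    samples.sort()
    p95_index = math.ceil(0.95 * len(samples)) - 1  # nearest-rank percentile
    return {
        "min_ms": samples[0],
        "median_ms": statistics.median(samples),
        "p95_ms": samples[p95_index],
        "max_ms": samples[-1],
    }
```

Run it once per runtime and image, since a cached image and a fresh pull will give very different results.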

Why This Matters

The agent infrastructure stack is consolidating. OpenSandbox represents the moment when sandbox execution shifts from a proprietary service to open infrastructure, the same shift Kubernetes brought to container orchestration and Prometheus brought to monitoring.

Alibaba's decision to open-source their internal sandbox infrastructure is strategic. More developers using OpenSandbox means more AI agent workloads running on compute infrastructure. Alibaba Cloud benefits either way. But the Apache 2.0 license means the community benefits too.

For teams building AI agents today, OpenSandbox removes the hardest infrastructure problem: giving agents a safe, scalable place to run. The multi-language SDKs lower the adoption barrier. The protocol-first design future-proofs the investment. And the self-hosted model eliminates the vendor risk that makes enterprise procurement teams nervous.

The project is young and moving fast. But the architecture is sound, the community response is strong, and the problem it solves is real. Keep an eye on this one.