Promptfoo: The Open-Source LLM Testing Tool That OpenAI Bought

A YAML config, a CLI command, and 50+ assertion types turned prompt engineering from vibes-based guessing into repeatable science. Then it added red teaming. Then OpenAI wrote a check.

- Promptfoo turns LLM testing into a declarative, repeatable workflow where a single YAML config drives prompts through multiple providers and grades the outputs with 50+ assertion types.

- Its red teaming engine generates adversarial attacks (prompt injection, jailbreaks, PII extraction) automatically, making security testing accessible to developers who are not security specialists.

- With 17,000+ stars, 130,000 monthly active developers, and adoption by 25%+ of the Fortune 500, it became the open-source standard for LLM evaluation before OpenAI acquired it in March 2026.

- The codebase is a well-structured TypeScript monolith with 60+ provider integrations, a React web UI, and CI/CD hooks that make it equally useful for solo developers and enterprise security teams.

The Problem: Prompt Engineering by Vibes

Before promptfoo, most teams tested their LLM prompts the same way: paste into a playground, eyeball the output, ship if it looked right. When something broke in production, they would tweak the prompt and repeat. No version control. No regression testing. No metrics.

This workflow might survive a weekend hack project. It does not survive a production system serving millions of users where a single bad prompt can leak customer data or generate harmful content.

Ian Webster and Michael D'Angelo saw this gap early. Webster had been building developer tools for years (his previous projects include an asteroid tracker used by NASA). In May 2023, he published the first version of promptfoo as a simple open-source framework for prompt engineering.

"Stop the trial-and-error approach. Start shipping secure, reliable AI apps."

The Core Idea: YAML In, Metrics Out

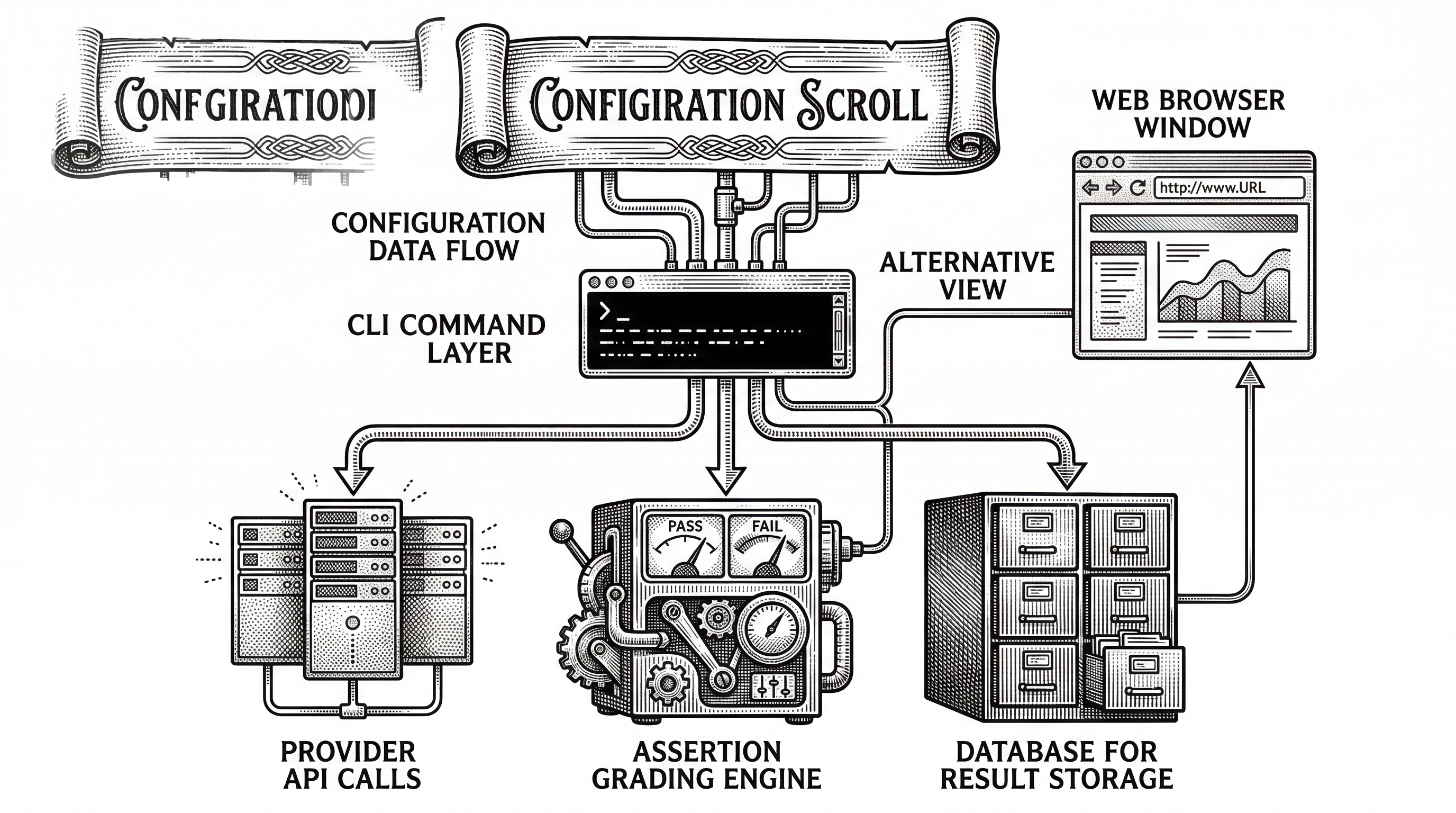

Promptfoo's central insight is that LLM testing should work like unit testing. You define inputs, expected behaviors, and assertions in a config file. The tool runs everything and reports pass/fail with scores.

The config file is called promptfooconfig.yaml. It has three sections: prompts (the templates you want to test), providers (the LLMs to run them against), and tests (the input variables and assertions to check). A minimal config looks like this:

prompts:

- "Translate '{{input}}' to Spanish"

providers:

- openai:gpt-4o

- anthropic:claude-3.5-sonnet

tests:

- vars:

input: "Hello world"

assert:

- type: contains

value: "Hola"

- type: llm-rubric

value: "Translation is natural and accurate"Run promptfoo eval and it calls both providers with your prompt, checks each output against both assertions, and shows you a comparison matrix. Run promptfoo view and you get a web UI where you can drill into every cell.

This is the fundamental loop. Everything else in the project is an extension of it.

Provider Abstraction: 60+ Integrations, One Interface

The src/providers/ directory is one of the most active parts of the codebase. It contains integrations for OpenAI, Anthropic, Google (Vertex and AI Studio), Azure, AWS Bedrock, Ollama, Groq, Cohere, DeepSeek, Hugging Face, Replicate, Together AI, and dozens more.

Each provider implements a common interface. You swap models by changing a string in your YAML. No code changes, no SDK juggling. This makes A/B testing across providers trivially easy. Want to see if Claude outperforms GPT-4o on your specific use case? Change one line and re-run.

providers:

- openai:gpt-4o

- anthropic:claude-3.5-sonnet

- ollama:llama3.1

- google:gemini-1.5-pro

- bedrock:us.anthropic.claude-3-5-sonnet-20241022-v2:0The abstraction extends to tool calls, function calling, structured outputs, and multi-modal inputs. Providers that support images, audio, or video work through the same config pattern. The local caching layer (backed by SQLite) means repeated runs skip the API call entirely, saving both time and money.

The Assertion Engine

Assertions are where promptfoo separates itself from simple playground testing. The framework ships with over 50 built-in assertion types, ranging from exact string matching to sophisticated LLM-as-judge evaluations.

Basic types include contains, equals, regex, is-json, and cost (checking that an API call stays under a dollar threshold). More advanced types use an LLM to grade the output: llm-rubric sends the output to a grading model with your rubric, model-graded-closedqa checks factual accuracy, and g-eval implements the G-Eval framework for nuanced quality scoring.

You can also write custom assertion functions in JavaScript, Python, or any language that outputs JSON. This means you can plug in domain-specific checks: SQL validation, medical terminology accuracy, code compilation, or whatever your application requires.

assert:

- type: contains

value: "SELECT"

- type: python

value: file://validate_sql.py

- type: llm-rubric

value: "Query is efficient and uses proper indexing"

- type: cost

threshold: 0.05Assertions can be weighted and combined into composite scores. A test case might require that the output contains valid JSON (hard pass/fail) while also scoring fluency on a 0-1 scale (soft metric). The results table shows both.

Red Teaming: Security Testing for Everyone

The red teaming module is what turned promptfoo from a developer tool into an enterprise security platform. Located in src/redteam/, it generates adversarial test cases designed to probe LLM applications for vulnerabilities.

The attack surface is broad. Promptfoo's plugins cover prompt injection, jailbreak attempts, PII extraction, harmful content generation, OWASP top-10 for LLMs, tool-use abuse, and more. The redteam/strategies/ directory includes techniques like multi-turn escalation, best-of-N jailbreaking, and indirect prompt injection through web content.

A red team scan starts with a simple config:

redteam:

plugins:

- prompt-injection

- harmful:privacy

- pii:direct

- overreliance

strategies:

- jailbreak

- multi-turnThe engine generates adversarial inputs, runs them against your target, and produces a vulnerability report with severity scores and remediation guidance. This is the functionality that caught the attention of Fortune 500 security teams.

For enterprises that cannot send proprietary prompts to external services, the entire pipeline runs locally. Your prompts, your data, and your test results never leave your machine. This privacy-first approach is a deliberate architectural choice, not an afterthought.

"Promptfoo has been used by more than 350,000 developers. 130,000 are active each month. Teams at more than 25% of the Fortune 500 rely on it."

Architecture: A TypeScript Monolith Done Right

The codebase is a TypeScript monolith at around 22 million lines across the project (including the documentation site and web UI). The core library lives in src/, the React web UI in src/app/, and the Docusaurus documentation site in site/.

Key directories tell the story of how the project grew:

| Directory | Purpose | Why It Matters |

|---|---|---|

src/providers/ |

60+ LLM integrations | Every major model accessible through one interface |

src/redteam/ |

Security testing engine | Adversarial attack generation, grading, and reporting |

src/assertions/ |

50+ grading types | From string matching to LLM-as-judge rubrics |

src/commands/ |

CLI interface | eval, view, init, redteam, cache, share, and more |

src/app/ |

React 19 + Vite + MUI | Local web UI for exploring results |

src/server/ |

Backend API | Serves the web UI and handles eval state |

src/database/ |

SQLite via Drizzle ORM | Local storage for results, cache, and config history |

examples/ |

120+ example configs | Working examples for every provider and use case |

The evaluator (src/evaluator.ts) is the engine room. It reads the config, resolves providers, expands test cases with variable substitution, runs inference calls with configurable concurrency, pipes outputs through assertions, and writes results to the database. Caching is aggressive: if you have already called GPT-4o with a specific prompt, the cached response is reused.

Testing uses Vitest with separate configs for unit, integration, and smoke tests. The CI/CD story is strong: promptfoo ships as a GitHub Action for scanning pull requests, and the src/codeScan/ module analyzes code changes for LLM-related security issues.

CI/CD: Evals as Part of Every Deploy

Promptfoo is designed to run in CI. The GitHub Action scans pull requests for prompt changes and runs evals automatically. If assertions fail, the PR gets flagged before anyone merges.

This is where the tool becomes genuinely transformative. Most teams discover prompt regressions in production. With promptfoo in CI, you catch them at the pull request stage. The eval results appear as comments on the PR, showing exactly which test cases passed or failed and on which providers.

# .github/workflows/llm-eval.yml

- uses: promptfoo/promptfoo-action@v1

with:

config: promptfooconfig.yaml

github-token: ${{ secrets.GITHUB_TOKEN }}The code scanning module goes further. It analyzes your application code for patterns that indicate LLM security risks: unvalidated user input flowing into prompts, missing output sanitization, exposed API keys, and similar issues. Think of it as ESLint for LLM security.

The Competitive Landscape

Promptfoo sits at the intersection of LLM evaluation and LLM security. No other tool occupies both spaces as effectively.

DeepEval is the closest competitor on the evaluation side. It is Python-native with pytest integration and offers 60+ metrics with self-explaining scores. Its strength is the depth of individual metrics. Promptfoo's advantage is the provider abstraction layer and the ability to compare models side-by-side without writing Python code.

RAGAS dominates RAG-specific evaluation. If your entire pipeline is retrieval-augmented generation, RAGAS gives you retrieval-specific scoring that promptfoo does not match in depth. But RAGAS does not do red teaming, CI/CD integration, or multi-provider comparison.

Braintrust offers a SaaS evaluation platform with logging and monitoring. It provides a polished cloud experience but requires sending your data to their servers. Promptfoo runs entirely locally by default.

The red teaming side has fewer direct competitors. Enterprise tools from companies like HiddenLayer and Robust Intelligence offer similar capabilities but as closed-source, expensive platforms. Promptfoo is the only open-source tool that combines evaluation and red teaming in a single framework.

| Feature | Promptfoo | DeepEval | RAGAS |

|---|---|---|---|

| Language | TypeScript (YAML config) | Python (pytest) | Python |

| Multi-provider comparison | 60+ providers, one config | Manual per-provider setup | Limited |

| Red teaming | Built-in, comprehensive | Basic safety metrics | None |

| CI/CD integration | GitHub Action + code scanning | pytest in CI | Manual |

| Privacy | Fully local by default | Local | Local |

| RAG-specific scoring | Basic | Moderate | Deep |

From Startup to OpenAI

The timeline tells a compelling story of fast execution. Ian Webster published the first commit in April 2023. By mid-2024, the project had enough traction to incorporate as a company. In July 2025, Promptfoo raised an $18.4 million Series A led by Insight Partners with participation from Andreessen Horowitz.

Then, on March 9, 2026, OpenAI announced it was acquiring Promptfoo. The terms were not disclosed. What was disclosed: the team would join OpenAI, the technology would integrate into OpenAI Frontier (their enterprise platform for AI agents), and the project would remain open source under the MIT license.

"We are acquiring Promptfoo to bring best-in-class security testing directly into the development workflow for AI agents."

The acquisition makes strategic sense from both sides. OpenAI needed enterprise-grade security tooling for its agent platform. Promptfoo had already built the trust of 25%+ of the Fortune 500. Integrating automated red teaming and vulnerability scanning into the platform where agents are built and deployed closes a critical gap.

For the open-source project, the acquisition introduces the usual questions. Will development velocity increase or stall? Will the tool remain provider-agnostic when it is owned by OpenAI? The MIT license means the community can always fork, but the project's future direction now depends on OpenAI's priorities.

What the Codebase Reveals

Several patterns in the codebase stand out as worth noting for developers studying how to build successful open-source tools.

Examples as documentation. The examples/ directory contains over 120 working configurations. There are examples for every provider, every assertion type, every integration pattern, and every red team strategy. This is not placeholder code. These are runnable configs that serve as both documentation and integration tests.

The provider registry pattern. New providers are added by implementing a standard interface and registering in src/providers/registry.ts. This plugin architecture means the community can add providers without touching core code. The result is that promptfoo supports models most people have never heard of alongside GPT-4o and Claude.

Aggressive caching. The caching layer in src/cache.ts stores LLM responses keyed by the full request payload. This is essential for a developer workflow where you re-run evals dozens of times while tweaking prompts. Without caching, each run would cost real money and real time.

The evaluator as orchestrator. The src/evaluator.ts file is the beating heart of the system. It handles concurrency limits, retries, progress reporting, result aggregation, and database writes. It coordinates all the other modules without owning any of them.

Getting Started

The onramp is deliberately simple. Three commands and you are running your first eval:

npm install -g promptfoo

promptfoo init --example getting-started

cd getting-started && promptfoo evalThe init command scaffolds a working config. The eval command runs it. The view command opens the web UI. You can also use npx promptfoo@latest without installing globally, or install via Homebrew (brew install promptfoo) or pip (pip install promptfoo).

For red teaming, the flow is similar:

promptfoo redteam init

promptfoo redteam runThe init command asks about your application and generates a red team config. The run command executes the adversarial tests and produces a vulnerability report. The entire process takes minutes, not days.

What Comes Next

The project was already shipping at a rapid pace before the acquisition. Version 0.121.2 dropped on March 12, 2026, just three days after the OpenAI announcement. The examples directory shows recent additions for agent evaluation (CrewAI, LangGraph, Pydantic AI, Google ADK, Strands), MCP tool testing, and multi-modal inputs including video and audio.

The integration into OpenAI Frontier will likely focus on three areas: automated red teaming of agents before deployment, continuous monitoring of agent behavior in production, and compliance reporting for regulated industries. These are the capabilities that enterprise customers need and that the existing open-source tool already provides in embryonic form.

Whether the open-source project thrives under OpenAI ownership or whether the community eventually forks remains to be seen. What is clear is that the problem promptfoo solves is not going away. As LLM applications get more complex, more agentic, and more embedded in critical business processes, the need for systematic testing and security scanning only grows.

Promptfoo proved that the right tool for this problem is not another platform or dashboard. It is a CLI that reads a YAML file, calls some APIs, and tells you what broke. Sometimes the best tool is the simplest one.