RuView: The WiFi Camera That Was Built by an Army of Claude Agents

One developer plus a swarm of specialized LLMs shipped a Rust edge RF perception system with 62 architecture decision records, self-supervised DensePose, and through-wall vital signs monitoring. Here is what actually happened inside the repo.

- A single developer orchestrated a swarm of specialized Claude agents that shipped a production-grade Rust WiFi sensing system with 62 formal architecture decision records.

- RuView achieves camera-free human pose estimation and vital signs monitoring by learning the static RF signature of a room and isolating human-induced disturbances from commodity ESP32 nodes.

- The project reports an 810x speedup from a full Rust rewrite while maintaining formal DDD models and self-supervised Persistent Field modeling.

- RuView represents a provocative case study in agentic development whose technical foundations are sound but whose most ambitious claims remain under community validation.

The Swarm Inside the Repo

In June 2025 a repository appeared that looked nothing like a typical open source side project. It arrived with millions of lines of code, 62 formal Architecture Decision Records (ADRs), seven Domain-Driven Design (DDD) models, and an entire directory structure devoted to orchestrating its own development.

The .claude/ and .claude-flow/ folders contain what amounts to a self-contained software engineering organization. Specialized agents handle security auditing, memory management, performance optimization, PII detection, and Byzantine fault-tolerant consensus. There are agents for analysis, code review, and even sublinear optimization.

This is not decoration. The commit history shows heavy involvement from both the human maintainer and automated "claude" contributions. The project reached its current scale at a velocity that a single developer working alone would be very unlikely to match.

Perceive the world through signals. No cameras. No wearables. No Internet. Just physics.

What the Swarm Built: Camera-Free RF Perception

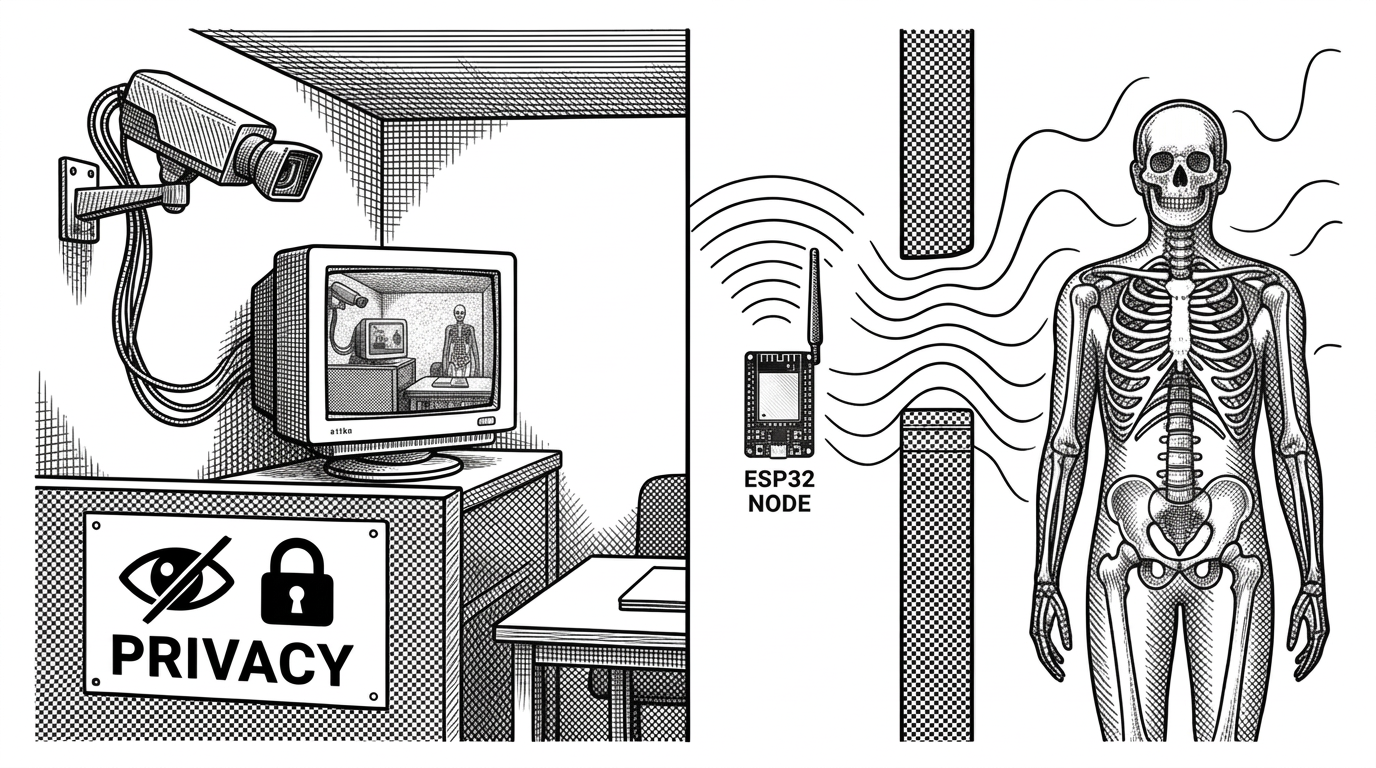

The payload is a genuine technical achievement. RuView extracts real-time human pose estimation, vital signs, presence, and through-wall sensing exclusively from WiFi Channel State Information (CSI), using commodity ESP32 nodes in a multistatic mesh.

It builds directly on Carnegie Mellon University's "DensePose From WiFi" research but makes the leap to practical edge deployment. The system learns the static RF signature of a room in a self-supervised manner, subtracts it, and isolates human-induced disturbances.

The system learns in proximity to the signals it observes. It improves as it operates.

The Physics and the Pipeline

WiFi signals bounce and distort as they encounter human bodies. RuView treats multiple ESP32 nodes as a distributed multistatic radar. Four to six nodes create a dozen or more transmitter-receiver signal paths, providing 360-degree coverage and helping disambiguate multiple people.
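
The path count can be checked with a short sketch. The article does not say how RuView counts signal paths, so this assumes a full mesh in which every node both transmits and receives and each direction counts as a separate link. The function names are hypothetical and are not taken from the RuView codebase.

```rust
/// Directed transmitter -> receiver links in a full mesh.
/// Assumption: every node can both transmit and receive, and a node
/// does not form a link with itself.
fn directed_links(nodes: u32) -> u32 {
    nodes * nodes.saturating_sub(1)
}

/// Unordered node pairs, for the case where A -> B and B -> A are
/// treated as the same physical path.
fn undirected_pairs(nodes: u32) -> u32 {
    directed_links(nodes) / 2
}
```

Under these assumptions four nodes give 12 directed links and six nodes give 30. If each pair is counted only once, the figures drop to 6 and 15.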

The core innovation is the Persistent Field Model. The system first learns the static RF signature of an empty room. Once calibrated, it subtracts this baseline from live CSI streams to reveal only the human delta. From that delta it reconstructs UV maps and skeletal poses, and it extracts breathing and heart rate through FFT analysis of micro-motions.
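
The baseline-and-subtract idea can be illustrated with a minimal sketch. It is not RuView's implementation and it makes several simplifying assumptions: each CSI frame is reduced to one real amplitude per subcarrier (real CSI is complex-valued), the baseline is a plain mean over empty-room frames, and a naive discrete Fourier transform stands in for an FFT so the example needs no external crates. All function names and the frequency band are illustrative.

```rust
use std::f64::consts::PI;

/// Mean amplitude per subcarrier over frames captured in an empty room.
/// Assumption: all frames have the same number of subcarriers.
fn learn_baseline(empty_room: &[Vec<f64>]) -> Vec<f64> {
    let n_sub = empty_room.first().map_or(0, |f| f.len());
    let mut sum = vec![0.0; n_sub];
    for frame in empty_room {
        for (s, a) in sum.iter_mut().zip(frame) {
            *s += *a;
        }
    }
    let n = empty_room.len() as f64;
    sum.iter().map(|s| s / n).collect()
}

/// Residual left after removing the static room signature from a live frame.
fn human_delta(frame: &[f64], baseline: &[f64]) -> Vec<f64> {
    frame.iter().zip(baseline).map(|(f, b)| f - b).collect()
}

/// Frequency (Hz) of the strongest spectral component inside [f_lo, f_hi].
/// Uses an O(n^2) DFT for clarity; a real pipeline would use an FFT.
fn dominant_frequency_hz(
    signal: &[f64],
    sample_rate_hz: f64,
    f_lo: f64,
    f_hi: f64,
) -> Option<f64> {
    let n = signal.len();
    if n < 2 {
        return None;
    }
    // Remove the mean so the DC offset does not leak into nearby bins.
    let mean = signal.iter().sum::<f64>() / n as f64;
    let mut best: Option<(f64, f64)> = None; // (power, frequency)
    for k in 1..=n / 2 {
        let freq = k as f64 * sample_rate_hz / n as f64;
        if freq < f_lo || freq > f_hi {
            continue;
        }
        let (mut re, mut im) = (0.0, 0.0);
        for (t, x) in signal.iter().enumerate() {
            let angle = 2.0 * PI * (k as f64) * (t as f64) / n as f64;
            re += (x - mean) * angle.cos();
            im -= (x - mean) * angle.sin();
        }
        let power = re * re + im * im;
        if best.map_or(true, |(p, _)| power > p) {
            best = Some((power, freq));
        }
    }
    best.map(|(_, f)| f)
}
```

To use it, call `learn_baseline` once on calibration frames, apply `human_delta` to each live frame, then pass a time series of one subcarrier's residual to `dominant_frequency_hz`. A band of roughly 0.1 to 0.5 Hz (6 to 30 breaths per minute) is a plausible choice for breathing; multiplying the result by 60 gives breaths per minute. The band limits are an assumption here, not values taken from the project.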

The entire pipeline runs in Rust, with a claimed throughput of 54,000 frames per second for pose estimation, or about 18.5 microseconds per frame. The Rust rewrite reportedly achieved an 810x speedup over the original Python implementation.

The Credibility Question

RuView experienced explosive growth, trending globally on X and accumulating tens of thousands of stars in weeks. That velocity triggered intense scrutiny.

An audit fork accused earlier versions of using simulated or hardcoded data, missing model weights, and fabricated metrics. Critics pointed to suspicious star growth patterns and the dominance of automated contributions in the commit history.

The maintainer responded directly, pointing to the academic foundations, successful runs by users with physical ESP32 hardware, and the ongoing Rust rewrite. Some community members have reported positive results with proper setup. Others still see the project as more demonstration than production system.

The truth appears to lie in the middle. The underlying physics and academic lineage are sound. Real CSI sensing from ESP32 is possible and has been demonstrated in research. Whether the full claimed scope (dense 17-keypoint pose, sub-inch accuracy, robust through-wall performance) is reproducibly achieved by the current codebase remains an active subject of community validation.

How It Compares

| Project | Language | Hardware | Core Capability | Self-Supervised | Documentation | Maturity |
|---|---|---|---|---|---|---|
| RuView | Rust (primary) | ESP32 mesh | Dense pose + vitals + through-wall | Yes (Persistent Field) | 62 ADRs, 7 DDD models | High velocity, contested claims |
| CMU DensePose From WiFi | Python | Research NICs | Dense pose from WiFi | Limited | Academic paper | Research prototype |
| esp-csi (Espressif) | C / Python | ESP32 | Presence & activity | No | Official examples | Production hardware focused |
| WiFi-3D-Fusion | Python | ESP32 or Nexmon | Motion + partial 3D pose | Partial | Standard research | Experimental |

Why This Matters

RuView is one of the clearest real-world demonstrations yet of what LLM-native large-scale development can look like. The combination of formal engineering discipline with agentic velocity produced a project that is simultaneously impressive, controversial, and provocative.

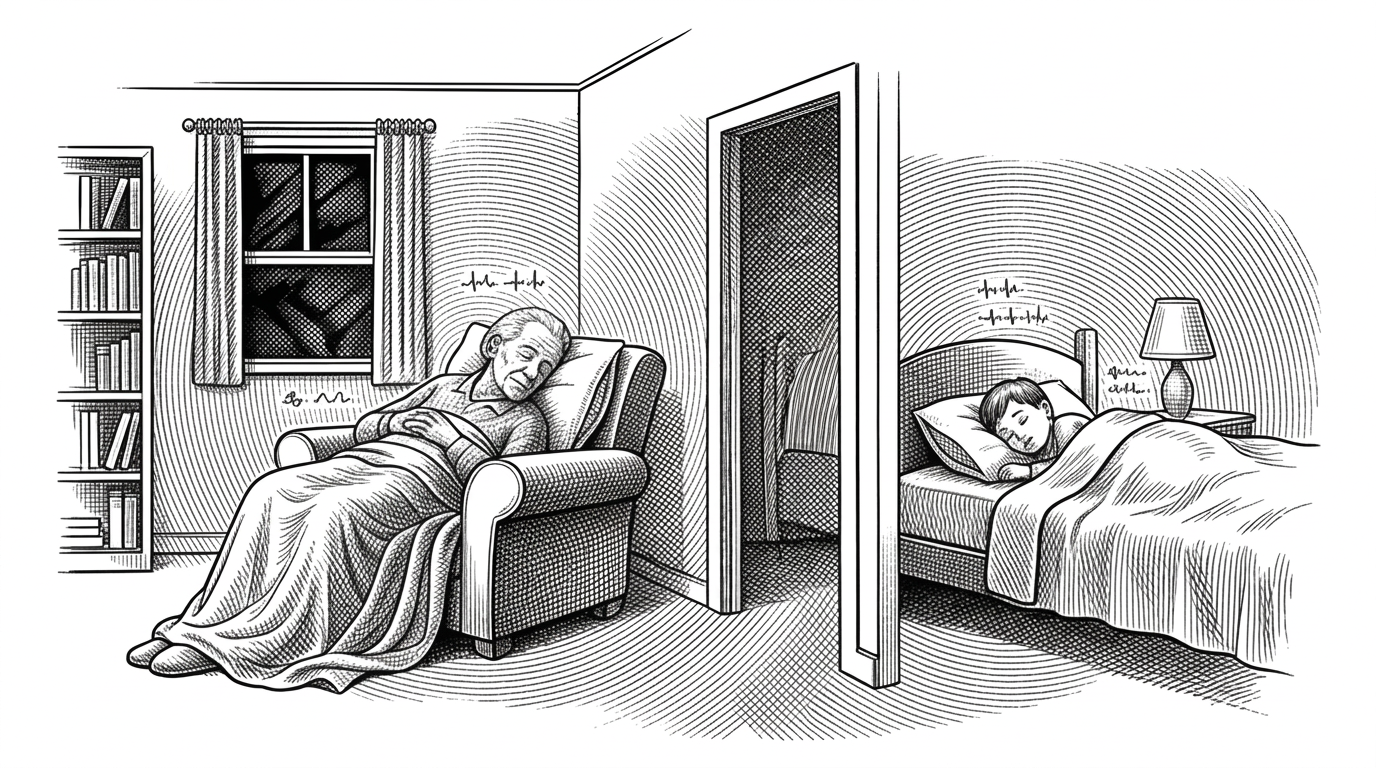

On the technical side, privacy-preserving ambient sensing has enormous potential. Elderly care, disaster response, and smart environments could benefit from perception that requires no cameras or wearables. The self-learning nature removes the need for expensive labeled datasets.

I've successfully completed a full review of the WiFi-DensePose system. The system now supports full multistatic sensing with coherence gating and persistent field modeling.

The repo forces us to confront two parallel questions. First, how far can we push RF-based perception with commodity hardware? Second, what does the future of software engineering look like when a single human can orchestrate an army of specialized agents to maintain formal architecture standards at unprecedented speed?

RuView does not yet have all the answers. But it is one of the most ambitious case studies we have.