@google/stitch-sdk: Program Design with AI Agents

Google’s TypeScript SDK turns its Gemini-powered UI generator into a first-class tool for both developers and autonomous agents via MCP and Vercel AI SDK integration.

- The Stitch SDK turns Gemini-powered UI generation into a programmable API that both humans and agents can call directly from TypeScript code.

- It provides high-level ergonomic methods for generating, editing, and creating variants of UI screens while exposing low-level MCP tools for autonomous agent workflows.

- A hybrid architecture combines a small handwritten layer with machine-generated contracts pinned by a lockfile to stay synchronized with the backend.

- Native integration with Vercel AI SDK and first-class MCP support makes Stitch the most agent-native UI generation tool available.

You Can Now Call Design Like Any Other Tool

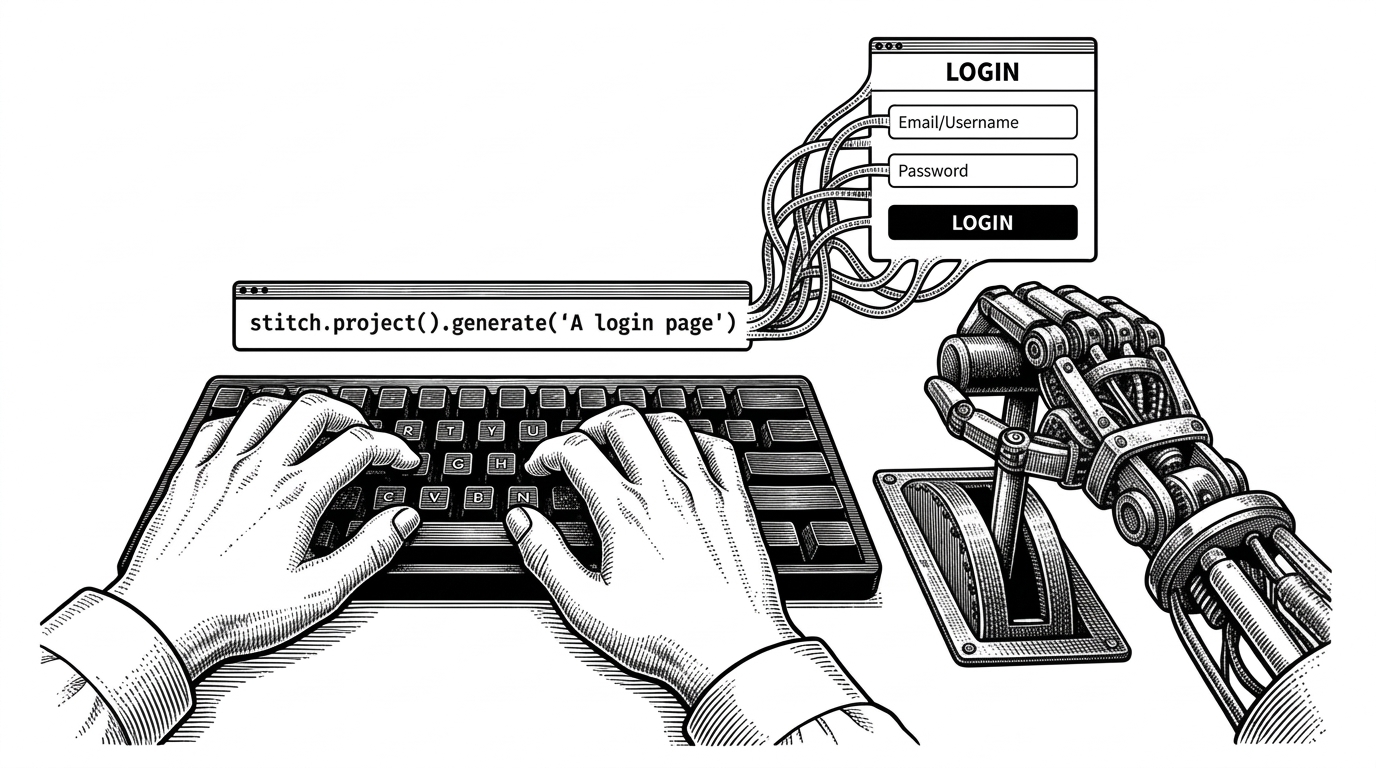

The most surprising thing about the Stitch SDK is how natural it feels in code. You do not wrestle with a web interface or parse screenshots. You call methods.

```typescript
import { stitch } from "@google/stitch-sdk";

const project = stitch.project("your-project-id");

const screen = await project.generate(
  "A clean login page with email, password, and social sign-in"
);

const html = await screen.getHtml();
const image = await screen.getImage();
```

Need to refine it? One more call.

```typescript
const edited = await screen.edit(
  "Make the background dark mode and move the logo to the top left"
);
```

Or explore options in parallel.

```typescript
const variants = await screen.variants(
  "Try different color schemes",
  { variantCount: 4, creativeRange: "EXPLORE" }
);
```

"Yes. You can program design now. I've been dreaming of shipping this for so long because it's just so much fun to use."

From Web Experiment to Agent Platform

Stitch began life in May 2025 as stitch.withgoogle.com, a Google Labs experiment that turned natural language and reference images into UI designs using Gemini. Designers and engineers collaborated on a tool that optimized both their workflows.

The SDK launched in March 2026 as the logical extension. Google did not stop at a prettier web UI. They built a production-grade TypeScript client that treats design as an API.

"We designed our SDK for both humans and their agents to drive Stitch designs. Call stitch from your code with simple methods like stitch.createProject() and screen.edit()."

Built for Two Audiences at Once

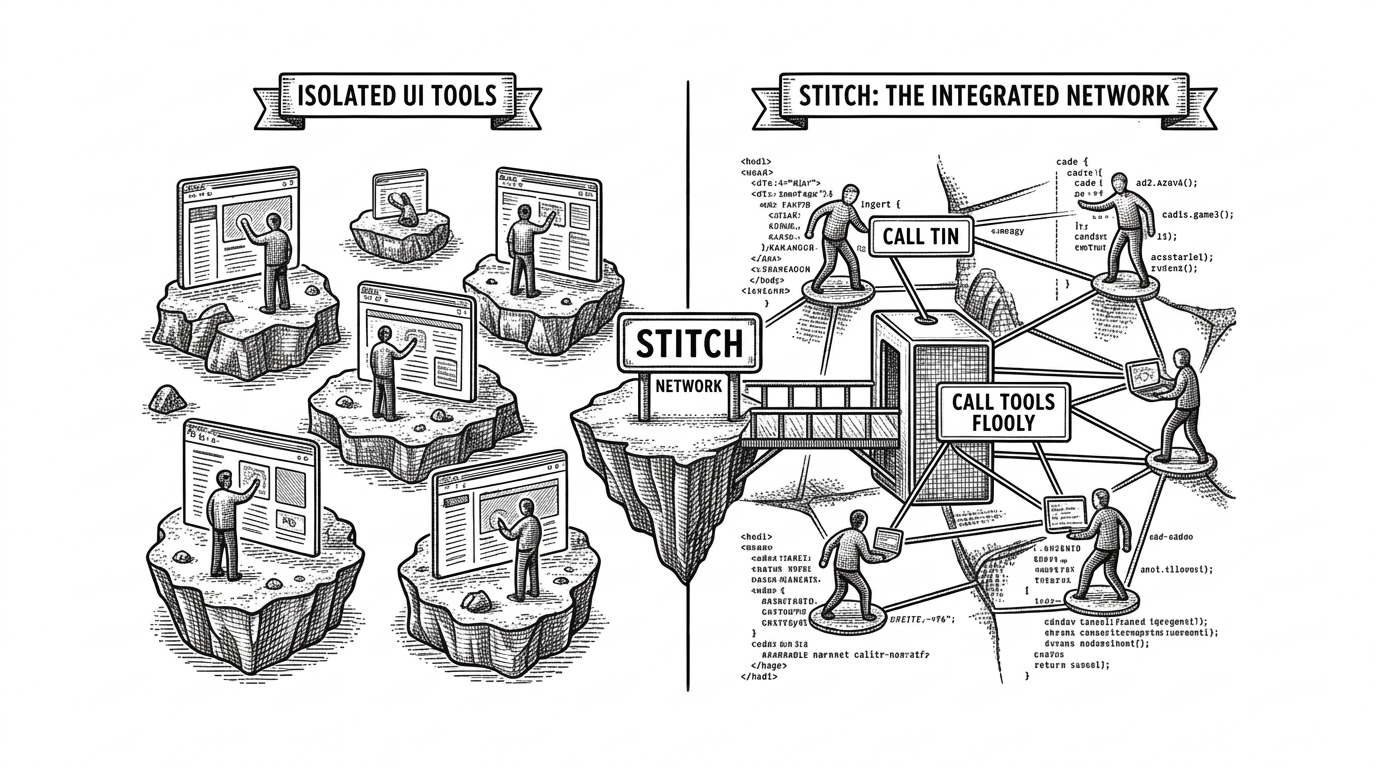

The SDK ships two distinct interfaces. High-level classes for application code. Low-level tools for agents.

For agents, drop stitchTools() directly into Vercel AI SDK.

```typescript
import { stitchTools } from "@google/stitch-sdk/ai";
import { generateText } from "ai";
// The google() model factory comes from the AI SDK's Google provider package.
import { google } from "@ai-sdk/google";

const { steps } = await generateText({
  model: google("gemini-2.5-flash"),
  tools: stitchTools(),
  prompt: "Create a project and generate a modern analytics dashboard"
});
```

The model discovers tools, decides when to call them, and continues its reasoning with the resulting HTML and images.
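
To make that loop concrete, here is a minimal, offline sketch of tool dispatch: a scripted stand-in for the model asks for tools, the runtime executes them, and each result is fed back before the next turn. This is a generic illustration, not the Vercel AI SDK's implementation. The names `runAgent`, `scriptedModel`, `create_project`, and `generate_screen` are hypothetical and are not taken from the Stitch SDK.

```typescript
// A tool is a named function the model may ask the runtime to execute.
type Tool = (args: Record<string, string>) => string;

// On each turn the (fake) model either requests a tool or gives a final answer.
type ModelTurn =
  | { kind: "tool-call"; tool: string; args: Record<string, string> }
  | { kind: "final"; text: string };

type Model = (transcript: string[]) => ModelTurn;

// Run the loop: ask the model, execute any requested tool, append the result,
// and repeat until the model answers or the step limit is reached.
function runAgent(
  model: Model,
  tools: Record<string, Tool>,
  prompt: string,
  maxSteps = 5,
): { text: string; steps: string[] } {
  const transcript = [`user: ${prompt}`];
  const steps: string[] = [];
  for (let i = 0; i < maxSteps; i++) {
    const turn = model(transcript);
    if (turn.kind === "final") return { text: turn.text, steps };
    const tool = tools[turn.tool];
    const result = tool ? tool(turn.args) : `error: unknown tool ${turn.tool}`;
    steps.push(turn.tool);
    transcript.push(`tool ${turn.tool}: ${result}`);
  }
  return { text: "stopped: step limit reached", steps };
}

// Scripted stand-in for a real model: two tool calls, then a final answer.
const scriptedModel: Model = (transcript) => {
  if (transcript.length === 1) {
    return { kind: "tool-call", tool: "create_project", args: { title: "Analytics" } };
  }
  if (transcript.length === 2) {
    return { kind: "tool-call", tool: "generate_screen", args: { prompt: "modern analytics dashboard" } };
  }
  return { kind: "final", text: `Done after ${transcript.length - 1} tool calls` };
};

// Hypothetical tools that return canned strings instead of calling a backend.
const fakeTools: Record<string, Tool> = {
  create_project: (args) => `project "${args.title}" created`,
  generate_screen: (args) => `<html><!-- ${args.prompt} --></html>`,
};

const run = runAgent(
  scriptedModel,
  fakeTools,
  "Create a project and generate a modern analytics dashboard",
);
console.log(run.steps); // ["create_project", "generate_screen"]
console.log(run.text); // "Done after 2 tool calls"
```

The step limit matters in practice: without it, a model that keeps requesting tools would loop indefinitely.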

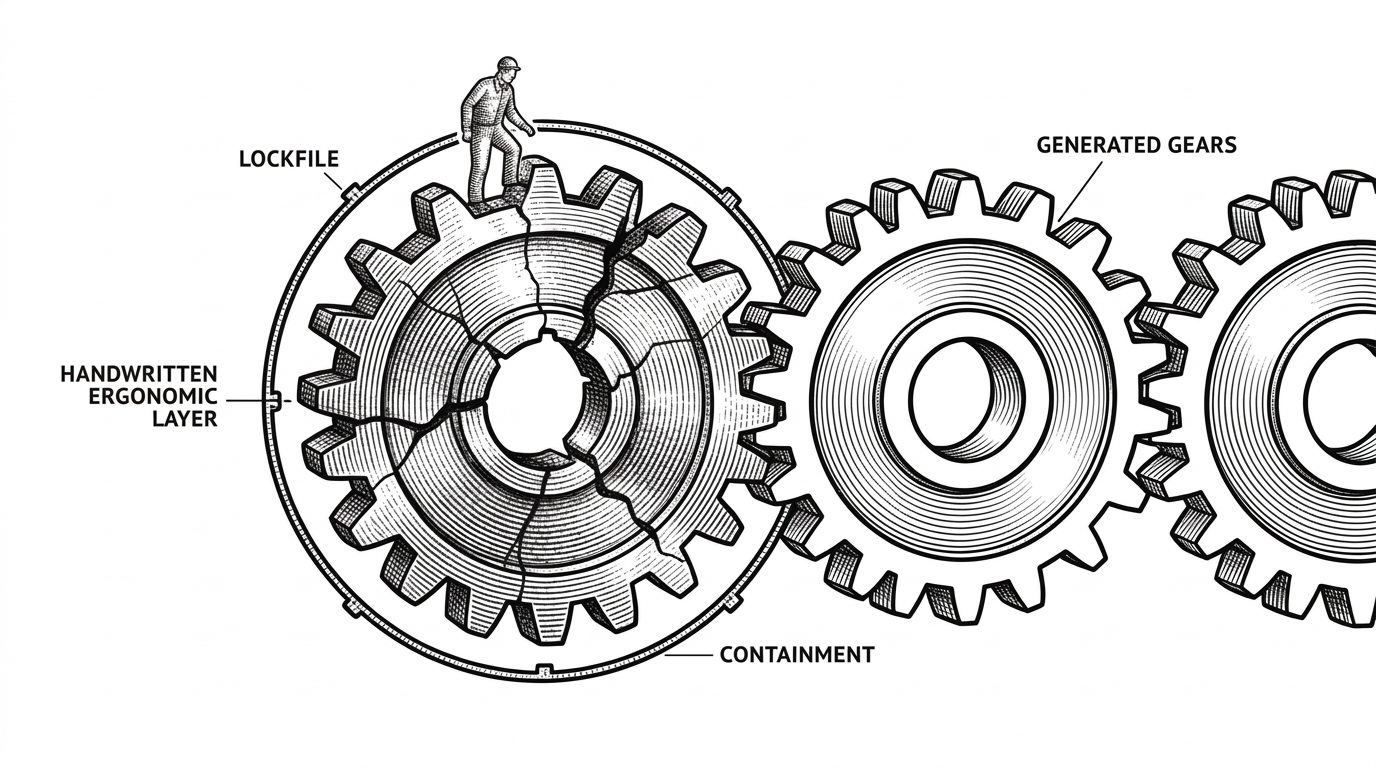

The Hybrid Architecture That Keeps It Honest

Most generated SDKs drift from their backends. Stitch solves this with a deliberate hybrid approach: a small handwritten ergonomic layer sits on top of a large machine-generated contract pulled live from the Stitch backend.
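
The layering can be sketched as follows, assuming the generated contract is a typed client interface and the handwritten layer is a pair of thin wrapper classes. `GeneratedClient`, `ProjectHandle`, `ScreenHandle`, and the payload shapes are all hypothetical; the SDK's real generated types are not shown in this article.

```typescript
// Stand-in for the machine-generated contract. Method names and payload
// shapes are hypothetical and only illustrate the idea of a typed contract.
interface GeneratedClient {
  generateScreen(input: { projectId: string; prompt: string }): Promise<{ screenId: string }>;
  editScreen(input: { screenId: string; prompt: string }): Promise<{ screenId: string }>;
}

// Handwritten ergonomic layer: small classes that hide ids and payload shapes.
class ScreenHandle {
  private readonly client: GeneratedClient;
  readonly id: string;

  constructor(client: GeneratedClient, id: string) {
    this.client = client;
    this.id = id;
  }

  async edit(prompt: string): Promise<ScreenHandle> {
    const { screenId } = await this.client.editScreen({ screenId: this.id, prompt });
    return new ScreenHandle(this.client, screenId);
  }
}

class ProjectHandle {
  private readonly client: GeneratedClient;
  readonly id: string;

  constructor(client: GeneratedClient, id: string) {
    this.client = client;
    this.id = id;
  }

  async generate(prompt: string): Promise<ScreenHandle> {
    const { screenId } = await this.client.generateScreen({ projectId: this.id, prompt });
    return new ScreenHandle(this.client, screenId);
  }
}

// In-memory fake of the generated client so the sketch runs without a backend.
function createFakeClient(log: string[]): GeneratedClient {
  let counter = 0;
  return {
    async generateScreen({ projectId, prompt }) {
      log.push(`generate:${projectId}:${prompt}`);
      return { screenId: `screen-${++counter}` };
    },
    async editScreen({ screenId, prompt }) {
      log.push(`edit:${screenId}:${prompt}`);
      return { screenId: `screen-${++counter}` };
    },
  };
}

// Drive the wrappers and return the ids followed by the recorded backend calls.
async function demo(): Promise<string[]> {
  const log: string[] = [];
  const project = new ProjectHandle(createFakeClient(log), "demo-project");
  const screen = await project.generate("login page");
  const edited = await screen.edit("dark mode");
  return [screen.id, edited.id, ...log];
}

demo().then((result) => console.log(result));
```

Because the wrappers depend only on the contract interface, regenerating that interface surfaces any mismatch as a compile error in the handwritten layer rather than as a runtime failure.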

A stitch-sdk.lock file pins the generated code to a specific backend version. Scripts regenerate when the schema changes. This keeps types accurate without constant manual maintenance.
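
One way such a pin could work is to store a digest of the backend schema in the lockfile and regenerate whenever the live schema's digest differs. The sketch below assumes exactly that. The `SdkLock` shape, the `needsRegeneration` helper, and the choice of an FNV-1a hash are illustrative assumptions, since the real stitch-sdk.lock format is not described here.

```typescript
// Hypothetical lockfile shape: a digest of the schema the code was generated from.
interface SdkLock {
  schemaHash: string;
}

// Small dependency-free FNV-1a hash, used only to keep the sketch self-contained.
// A real tool would more likely use a cryptographic digest such as SHA-256.
function fnv1a(text: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return (hash >>> 0).toString(16);
}

// Serialize with sorted object keys so property order does not change the digest.
function canonicalize(value: unknown): string {
  if (Array.isArray(value)) {
    return `[${value.map(canonicalize).join(",")}]`;
  }
  if (value !== null && typeof value === "object") {
    const entries = Object.entries(value as Record<string, unknown>)
      .sort(([a], [b]) => (a < b ? -1 : a > b ? 1 : 0))
      .map(([key, inner]) => `${JSON.stringify(key)}:${canonicalize(inner)}`);
    return `{${entries.join(",")}}`;
  }
  return JSON.stringify(value) ?? "null";
}

// Build the lock entry for a schema snapshot.
function lockFor(schema: unknown): SdkLock {
  return { schemaHash: fnv1a(canonicalize(schema)) };
}

// True when the live schema no longer matches what the lockfile pinned.
function needsRegeneration(lock: SdkLock, liveSchema: unknown): boolean {
  return lock.schemaHash !== lockFor(liveSchema).schemaHash;
}

// Made-up schema snapshots for illustration only.
const schemaV1 = { tools: [{ name: "generate_screen", params: ["projectId", "prompt"] }] };
const schemaV2 = {
  tools: [...schemaV1.tools, { name: "edit_screen", params: ["screenId", "prompt"] }],
};

const lock = lockFor(schemaV1);
console.log(needsRegeneration(lock, schemaV1)); // false: schema unchanged
console.log(needsRegeneration(lock, schemaV2)); // expected true: a tool was added
```

Canonicalizing before hashing avoids spurious regenerations when the backend returns the same schema with keys in a different order.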

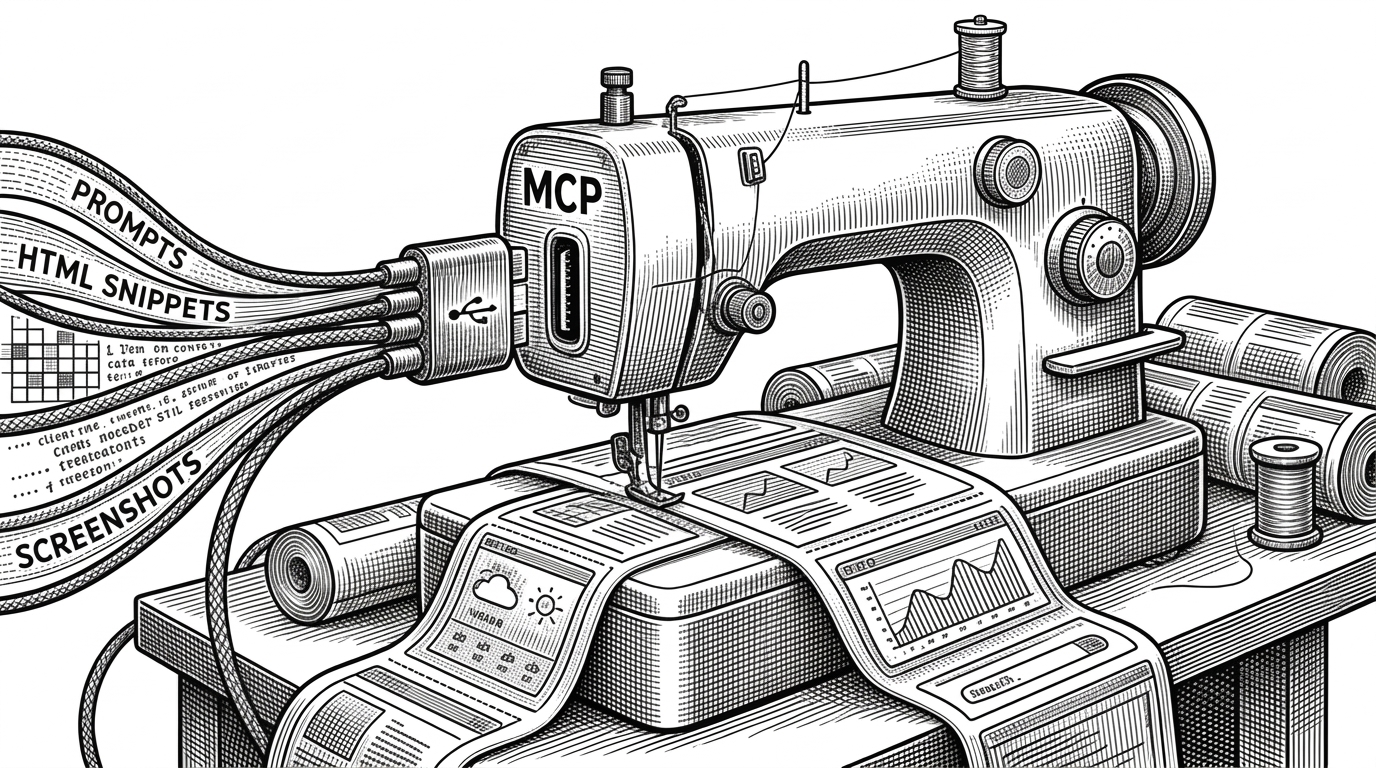

MCP Is the Secret Sauce

Model Context Protocol (MCP) provides the standardized way for agents to discover and call tools. Stitch implements it cleanly.

StitchToolClient speaks MCP directly to the backend. StitchProxy lets you expose those same tools through your own MCP server.
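
For readers new to MCP, the wire format is JSON-RPC 2.0: a client sends `tools/list` to discover tools and `tools/call` to invoke one by name. The sketch below builds those requests and answers them with a toy in-memory handler. It does not use the Stitch SDK, and the `generate_screen` tool and its schema are hypothetical placeholders rather than Stitch's actual tool catalogue.

```typescript
// JSON-RPC 2.0 envelope types used by MCP.
type JsonRpcRequest = {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
};

type JsonRpcResponse = {
  jsonrpc: "2.0";
  id: number;
  result?: unknown;
  error?: { code: number; message: string };
};

interface ToolDescriptor {
  name: string;
  description: string;
  inputSchema: Record<string, unknown>; // JSON Schema describing the arguments
}

let nextId = 1;

// Discovery request: ask the server which tools it offers.
const listToolsRequest = (): JsonRpcRequest => ({
  jsonrpc: "2.0",
  id: nextId++,
  method: "tools/list",
});

// Invocation request: call one tool by name with structured arguments.
const callToolRequest = (name: string, args: Record<string, unknown>): JsonRpcRequest => ({
  jsonrpc: "2.0",
  id: nextId++,
  method: "tools/call",
  params: { name, arguments: args },
});

// Hypothetical catalogue with a single placeholder tool.
const toolCatalogue: ToolDescriptor[] = [
  {
    name: "generate_screen",
    description: "Generate a UI screen from a text prompt",
    inputSchema: {
      type: "object",
      properties: { prompt: { type: "string" } },
      required: ["prompt"],
    },
  },
];

// Toy server: answers the two tool methods and rejects everything else.
function handleRequest(req: JsonRpcRequest): JsonRpcResponse {
  if (req.method === "tools/list") {
    return { jsonrpc: "2.0", id: req.id, result: { tools: toolCatalogue } };
  }
  if (req.method === "tools/call") {
    const name = String(req.params?.name);
    if (!toolCatalogue.some((tool) => tool.name === name)) {
      return { jsonrpc: "2.0", id: req.id, error: { code: -32602, message: `Unknown tool: ${name}` } };
    }
    const args = (req.params?.arguments ?? {}) as Record<string, unknown>;
    const text = `generated screen for: ${String(args.prompt)}`;
    return { jsonrpc: "2.0", id: req.id, result: { content: [{ type: "text", text }], isError: false } };
  }
  return { jsonrpc: "2.0", id: req.id, error: { code: -32601, message: `Method not found: ${req.method}` } };
}

console.log(JSON.stringify(handleRequest(listToolsRequest())));
console.log(JSON.stringify(handleRequest(callToolRequest("generate_screen", { prompt: "login page" }))));
```

A proxy in this model is simply a server whose handler forwards `tools/list` and `tools/call` to another MCP endpoint instead of answering locally.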

How It Compares

Most generative UI tools target interactive web use. The Stitch SDK is built for programmable, agent-native workflows.

| Tool | Best For | Output | Agent Integration | Protocol | Backing |
|---|---|---|---|---|---|
| @google/stitch-sdk | Programmatic use & agent loops | HTML + screenshots, variants, edit | Native (Vercel AI SDK + MCP) | MCP first-class | Google Gemini |
| v0 (Vercel) | Interactive component generation | React + Tailwind | Partial | None native | Vercel |
| Lovable | Full-stack apps from prompts | Complete apps | Limited | None | Independent |
| screenshot-to-code | Vision-first conversion | Multiple frameworks | Via custom agents | None | Community |
| json-render (Vercel) | Safe structured UI | Schema-driven components | Good | None | Vercel |

Why It Matters

Design has always been the hardest part of software to automate. The Stitch SDK does not replace designers. It gives both humans and agents a clean, reliable, typed interface into high-quality UI generation.

The combination of ergonomic high-level APIs, rock-solid generated contracts, and first-class MCP support makes it the most serious attempt yet at making design programmable.

For teams shipping agentic workflows, this changes the equation. Design is no longer a manual gate. It becomes another tool the agent can call with confidence.